Together AI MCP Server

Run chat and text completions, generate embeddings and images, and manage fine-tuning jobs on Together AI's open-source models, all from your local agent.

Vinkius AI Gateway supports streamable HTTP and SSE.
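Most MCP client SDKs parse the SSE stream for you. If you want to inspect the raw stream yourself, the minimal sketch below shows how Server-Sent Events frame messages: `data:` lines accumulate until a blank line ends the event. It assumes each event carries one JSON-RPC message, ignores `event:`, `id:`, and `retry:` fields, and is an illustration, not part of any Vinkius or Together AI SDK.

```python
import json


def parse_sse_events(stream_text: str) -> list[dict]:
    """Split a Server-Sent Events payload into events and decode their JSON data.

    Simplified sketch: only `data:` fields are read, comment lines are skipped,
    and every event's data is assumed to be one JSON document
    (for example, a JSON-RPC message from an MCP server).
    """
    events = []
    data_lines = []
    for line in stream_text.splitlines():
        if line == "":
            # A blank line terminates the current event.
            if data_lines:
                events.append(json.loads("\n".join(data_lines)))
                data_lines = []
            continue
        if line.startswith(":"):
            continue  # comment or keep-alive line
        if line.startswith("data:"):
            value = line[len("data:"):]
            if value.startswith(" "):
                value = value[1:]  # SSE drops a single leading space
            data_lines.append(value)
    # Per the SSE rules, data left over without a closing blank line is discarded.
    return events


sample = (
    'event: message\ndata: {"jsonrpc": "2.0", "id": 1, "result": {}}\n\n'
    ": keep-alive\n\n"
    'data: {"a":\ndata: 1}\n\n'
)
print(parse_sse_events(sample))
```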

Works with any MCP-compatible AI agent or client.


Built-in capabilities (7)

chat_completion

Executes a chat completion with a Together AI model. Provide a model ID and a JSON array of messages.

create_finetune_job

Creates a new fine-tuning job. Provide a base model ID and a training file ID.

generate_embeddings

Generates vector embeddings for input texts. Provide a model ID and a JSON array of strings.

generate_image

Generates an image from a text prompt. Provide a model ID and a descriptive prompt.

list_available_models

Lists all AI models available on Together AI.

list_finetune_jobs

Lists all fine-tuning jobs.

text_completion

Executes a raw (non-chat) text completion. Provide a model ID and a prompt.
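Under MCP, an agent invokes each of these tools with a JSON-RPC 2.0 `tools/call` request. The sketch below builds one for `chat_completion`. The argument names `model` and `messages`, passing the messages as a JSON-encoded string, and the model ID are all hypothetical: the listing only says the tool takes "a model ID and a JSON array of messages", so check the `inputSchema` the server returns from `tools/list` before relying on them.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical arguments: the real schema comes from the server's tools/list response.
messages = [
    {"role": "system", "content": "You are a concise assistant."},
    {"role": "user", "content": "Summarize what an MCP server does."},
]
payload = build_tool_call(
    1,
    "chat_completion",
    {"model": "your-model-id", "messages": json.dumps(messages)},
)
print(payload)
```

Your MCP client normally builds and sends this message for you; seeing it spelled out mainly helps when debugging gateway traffic.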

What this connector unlocks

Connect your Together AI account to any MCP-compatible AI agent and bring open-source models into your workflow. Query Llama, Mixtral, and other models through Together AI's hosted inference, or create and track fine-tuning jobs straight from your chat environment.

What you can do

- Model Discovery: List the models currently available on Together AI to find one suited to your NLP or vision task

- Conversational AI: Run chat completions on any supported model by supplying its model ID in your prompt

- Vector Storage Preparation: Generate embeddings for input texts, ready to load into your vector or analytics databases

- Creative Media: Generate images with diffusion models from detailed text prompts

- Custom Fine-Tuning: Launch training runs by specifying a base model and a training file ID, and check the status of existing jobs
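Once `generate_embeddings` returns vectors, a common next step is nearest-neighbour search by cosine similarity. The minimal in-memory sketch below ranks stored texts against a query vector. The 3-dimensional vectors are toy values standing in for real embedding output (real embeddings have hundreds of dimensions), and in practice a vector database would replace the dictionary.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two equal-length, non-zero vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("a zero vector has no direction")
    return dot / (norm_a * norm_b)


def top_k(query: list[float], store: dict[str, list[float]], k: int = 3) -> list[tuple[str, float]]:
    """Rank stored texts by cosine similarity to the query embedding, highest first."""
    scored = [(text, cosine_similarity(query, vec)) for text, vec in store.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Toy vectors standing in for generate_embeddings output.
store = {
    "disk full on node-7": [0.9, 0.1, 0.0],
    "user login succeeded": [0.0, 0.2, 0.95],
    "disk quota exceeded": [0.85, 0.2, 0.05],
}
query = [0.88, 0.15, 0.02]
print(top_k(query, store, k=2))
```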

How it works

1. Sign up for this integration

2. Open your Together AI dashboard at api.together.xyz and copy a developer API key

3. Enter the key in the integration settings, tell your agent which model IDs to use, and run serverless inference directly from your agent's interface
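To confirm a key is valid before adding it to the gateway, you can call Together AI's REST API directly. This sketch assumes the OpenAI-compatible `GET https://api.together.xyz/v1/models` endpoint with Bearer-token authentication and reads the key from a `TOGETHER_API_KEY` environment variable (a naming convention, not something this integration requires). It only sends the request when that variable is set.

```python
import os
import urllib.request

TOGETHER_BASE_URL = "https://api.together.xyz/v1"  # assumed API base URL


def build_models_request(api_key: str) -> urllib.request.Request:
    """Prepare an authenticated GET request for Together AI's model list."""
    if not api_key:
        raise ValueError("an API key is required")
    return urllib.request.Request(
        f"{TOGETHER_BASE_URL}/models",
        headers={"Authorization": f"Bearer {api_key}", "Accept": "application/json"},
        method="GET",
    )


if __name__ == "__main__":
    key = os.environ.get("TOGETHER_API_KEY", "")
    if not key:
        print("Set TOGETHER_API_KEY to the key from your Together AI dashboard.")
    else:
        # urlopen raises urllib.error.HTTPError (e.g. 401) if the key is rejected.
        with urllib.request.urlopen(build_models_request(key), timeout=30) as resp:
            print(f"Key accepted: HTTP {resp.status}")
```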

Who is this for?

- AI Developers: Configure and launch fine-tuning jobs without switching to a CLI

- Software Engineers: Test completions from open-source models (e.g., Llama 3) without leaving your code editor

- Machine Learning Engineers: Bulk-generate embeddings from raw logs using embedding models called directly from your main conversational agent
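Bulk embedding usually means splitting input into batches so each request stays within model and payload limits. The sketch below cleans log lines, groups them into fixed-size batches, and builds one argument set per `generate_embeddings` call. The batch size, the argument names `model` and `input`, and the model ID are hypothetical; the listing only says the tool takes a model ID and a JSON array of strings.

```python
import json
from typing import Iterator


def batch_lines(lines: list[str], batch_size: int) -> Iterator[list[str]]:
    """Yield consecutive chunks of at most batch_size non-blank, stripped lines."""
    if batch_size < 1:
        raise ValueError("batch_size must be positive")
    cleaned = [line.strip() for line in lines if line.strip()]
    for start in range(0, len(cleaned), batch_size):
        yield cleaned[start:start + batch_size]


def embedding_arguments(model_id: str, batch: list[str]) -> dict:
    """Hypothetical generate_embeddings arguments: a model ID plus a JSON array of strings."""
    return {"model": model_id, "input": json.dumps(batch)}


log_lines = ["ERROR disk full", "", "INFO user login", "WARN slow query", "ERROR timeout"]
for batch in batch_lines(log_lines, batch_size=2):
    print(embedding_arguments("your-embedding-model-id", batch))
```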


Give your AI agents the power of Together AI

Access Together AI and 2,000+ MCP servers, ready for your agents to use right now. No glue code, no custom integrations: just plug in Vinkius AI Gateway and let your agents work.

More in this category

ClickHouse (Vector Search)

7 tools. Manage vector embeddings and SQL via ClickHouse: list databases, execute SQL, and perform high-speed vector searches directly from any AI agent.

Weights & Biases

6 tools. Track experiments, monitor ML runs, and manage artifacts on WandB, the developer platform for AI.

Redis Vector

6 tools. Equip your AI to autonomously manage embeddings, run KNN similarity searches, and administer vector indexes natively inside your Redis stack.

You might also like

DealHub CPQ

10 tools. Manage CPQ and sales via DealHub: create quotes, track opportunity stages, manage users, and sync CRM data directly from any AI agent.

Denim

10 tools. Equip your AI agent to manage marketing campaigns, track contacts, and monitor analytics via the Denim API.

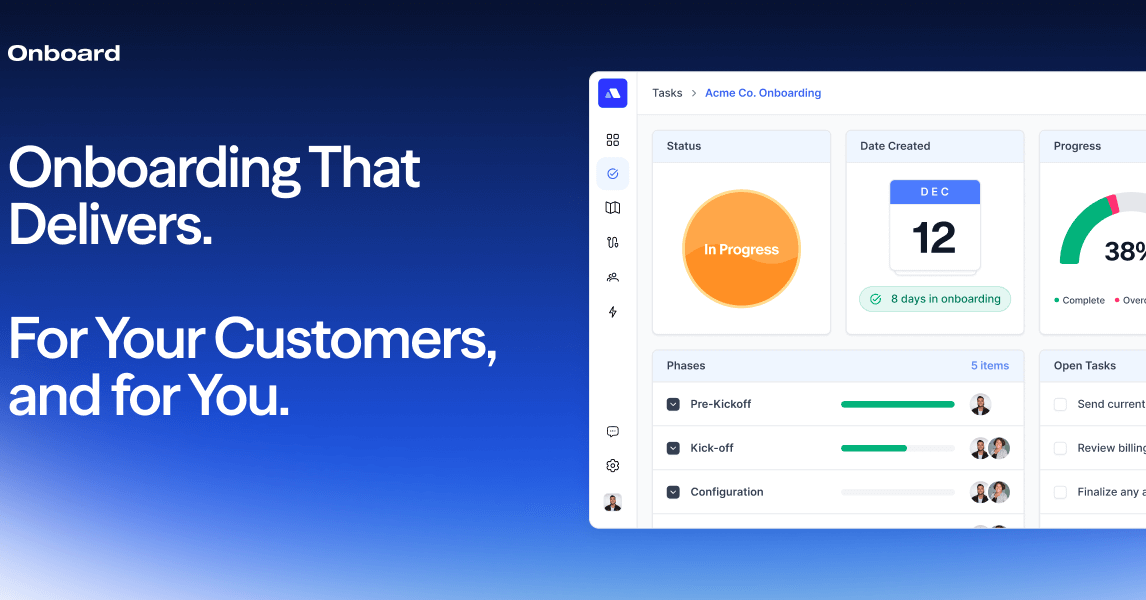

Onboard.io Implementation

10 tools. Automate and manage customer onboarding via Onboard.io: track launch plans, tasks, and progress directly from your AI agent.