Memory & Cognition Layer for AI Agents

Pinecone. Mem0. Qdrant. Weaviate. LlamaIndex. Long-term memory, semantic search, and contextual awareness — connected, governed, and production-ready.

Pinecone

The #1 managed vector database — sub-10ms queries at billion-vector scale.

pinecone.io

Pinecone is the industry standard for production vector search. This MCP Server connects your agent to serverless vector indexes with sub-10ms query latency, hybrid sparse-dense retrieval, and built-in metadata filtering. From semantic search to real-time RAG grounding, your agent gets instant access to billions of embeddings without managing a single shard.

Mem0

The memory layer for AI agents — persistent recall across every session.

mem0.ai

LLMs forget everything between sessions. Mem0 fixes that. This MCP Server gives your agent persistent, intelligent memory: it automatically extracts facts, preferences, and context from conversations and stores them across user, session, and agent scopes. Your agent remembers who it's talking to, what they care about, and what happened last time. No prompt stuffing, no token waste.

Qdrant

High-performance Rust-built vector engine — 97% memory reduction with binary quantization.

qdrant.tech

Built in Rust for raw speed, Qdrant is the vector database engineers choose when milliseconds matter. This MCP Server gives your agent access to HNSW-powered similarity search with advanced quantization, payload-based filtering, and multi-vector indexing. Binary quantization stores each vector dimension as a single bit instead of a 32-bit float, which cuts vector memory usage by up to 97% while maintaining search quality. That is critical for agents operating at enterprise scale.
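Where the 97% figure comes from can be sketched in a few lines. This is an illustration of the general idea of binary quantization in plain Python, not Qdrant's implementation or API; the function names and the 1536-dimension embedding size are assumptions chosen for the example.

```python
def binarize(vector):
    """Keep only the sign of each component, packed into one integer (1 bit per dimension)."""
    bits = 0
    for i, x in enumerate(vector):
        if x > 0:
            bits |= 1 << i
    return bits


def hamming_distance(a, b):
    """Count differing bits; a cheap proxy for distance between binarized vectors."""
    return bin(a ^ b).count("1")


def memory_reduction(dim):
    """Fraction of memory saved going from float32 (4 bytes/dim) to 1 bit/dim."""
    float32_bytes = dim * 4
    binary_bytes = dim / 8
    return 1 - binary_bytes / float32_bytes


DIM = 1536  # assumed embedding size, for illustration only
print(f"Memory saved at {DIM} dimensions: {memory_reduction(DIM):.1%}")
```

The saving is 31/32 of the original size regardless of dimension, which rounds to the quoted 97%. Real engines typically rescore the top candidates against the original vectors to recover accuracy lost to quantization.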

Weaviate

AI-native vector database — hybrid BM25 + vector search in a single query.

weaviate.io

Weaviate combines vector and keyword search in a single query, and that matters more than benchmarks. This MCP Server gives your agent hybrid retrieval that blends BM25 keyword matching with dense vector similarity, built-in vectorization modules for text and images, and GraphQL-powered exploration. When your agent needs to find documents that match both meaning and exact terms, Weaviate delivers.
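To make "blending" concrete, here is a minimal sketch of one common way to fuse keyword and vector scores: normalize each score set, then take a weighted sum. It is a generic illustration, not Weaviate's fusion algorithm or client API; the function names, the `alpha` weight, and the sample scores are all hypothetical.

```python
def min_max(scores):
    """Rescale scores to the 0..1 range so the two score sets are comparable."""
    if not scores:
        return {}
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {doc: 1.0 for doc in scores}
    return {doc: (s - lo) / (hi - lo) for doc, s in scores.items()}


def hybrid_rank(keyword_scores, vector_scores, alpha=0.5):
    """Rank documents by alpha * vector score + (1 - alpha) * keyword score."""
    kw = min_max(keyword_scores)
    vec = min_max(vector_scores)
    doc_ids = set(kw) | set(vec)
    fused = {
        doc: alpha * vec.get(doc, 0.0) + (1 - alpha) * kw.get(doc, 0.0)
        for doc in doc_ids
    }
    # Highest fused score first; ties broken by document id for stable output.
    return sorted(fused, key=lambda doc: (-fused[doc], doc))


# Hypothetical scores for three documents.
bm25 = {"a": 3.0, "b": 1.0, "c": 2.0}
dense = {"a": 0.2, "b": 0.9, "c": 0.6}
print(hybrid_rank(bm25, dense, alpha=0.5))
```

With `alpha=0.0` the ranking is purely keyword-driven and with `alpha=1.0` purely vector-driven; values in between reward documents that do reasonably well on both.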

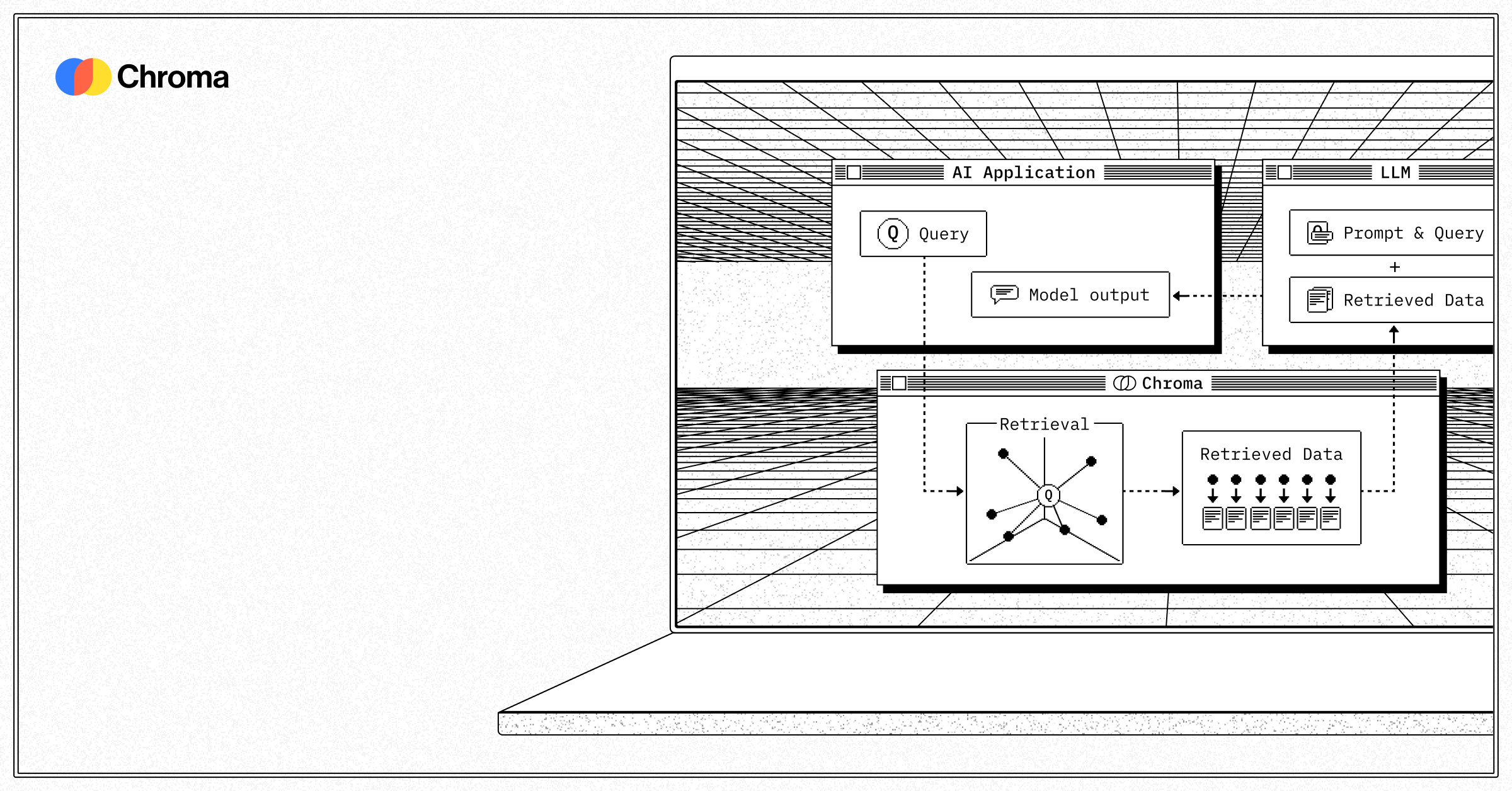

LlamaIndex

The #1 framework for RAG applications — ingest, index, and query any data source.

llamaindex.ai

LlamaIndex is the connective tissue between your data and your LLM. This MCP Server lets your agent ingest documents from any source (PDFs, APIs, databases, wikis) and query them with structured or semantic retrieval. It handles chunking, embedding, indexing, and query planning so your agent doesn't have to. If you're building RAG, LlamaIndex is where you start.
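For readers new to RAG, the chunking step mentioned above can be sketched as a fixed-size window with overlap. This is a simplified, character-based illustration and not LlamaIndex's own splitter; the function name and default sizes are assumptions.

```python
def chunk_text(text, chunk_size=200, overlap=50):
    """Split text into windows of chunk_size characters, each sharing
    `overlap` characters with the previous window."""
    if chunk_size <= 0 or overlap < 0 or overlap >= chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    if not text:
        return []
    step = chunk_size - overlap
    # Stop once the remaining tail is already covered by the previous chunk.
    last_start = max(len(text) - overlap, 1)
    return [text[i:i + chunk_size] for i in range(0, last_start, step)]


print(chunk_text("abcdefghij", chunk_size=4, overlap=1))
```

The overlap keeps a sentence that straddles a boundary retrievable from at least one chunk. Production splitters usually work on tokens or sentences rather than raw characters.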

Vector Search. RAG. Memory. Context. Ready for AI Agents.

Stop rebuilding RAG pipelines from scratch. The Vinkius AI Gateway gives you maximum security, full GDPR compliance, and built-in governance. Your agent's memory stays protected — always.