AI Frontier

96 apps

Databricks

8 tools
Manage your lakehouse via Databricks: monitor compute clusters, track job executions, audit SQL warehouses, and explore Unity Catalog directly from any AI agent.

OpenAI

10 tools
Use GPT-4o, DALL-E 3, embeddings, fine-tuning, and moderation as tools inside your AI agent workflows.

Azure AI Search

6 tools
Run RAG queries against Azure AI Search: perform vector and full-text searches and audit cloud indexes directly from your AI agent.

Azure Cognitive Search

7 tools
Empower your AI with enterprise retrieval: run full-text and semantic queries and inspect cognitive skillsets on your Azure indexes.

Amazon Bedrock KB

6 tools
Connect your AI agent to AWS Bedrock Knowledge Bases: run semantic searches and managed RAG queries, and sync vector data sources.

ClickHouse (Vector Search)

7 tools
Manage vector embeddings and SQL via ClickHouse: list databases, execute SQL, and run high-speed vector searches directly from any AI agent.

Cohere (Embed & Rerank)

6 tools
Empower RAG via Cohere: generate high-quality text embeddings, rerank documents for better accuracy, and perform AI classification directly from any AI agent.

Cohere (AI Platform)

7 tools
Power enterprise AI via Cohere: generate text, run chat completions, rerank documents, and manage embeddings directly from any AI agent.

Datadog AI (LLM Observability)

10 tools
Monitor LLM performance via Datadog: track token usage, audit prompts, and monitor AI model metrics directly from any AI agent.

Adobe Firefly

10 tools
Generate images and vectors via Adobe Firefly: perform generative fill and expand, create text effects, and remove backgrounds directly from any AI agent.

Groq

8 tools
Empower LLM applications via Groq: run ultra-fast LPU-accelerated chat completions, transcribe and translate audio, and use JSON mode directly from any AI agent.

Jina AI (Search Foundation & LLM Grounding)

6 tools
Power your RAG and search via Jina AI: generate embeddings, rerank documents, read URLs, and perform semantic web search.

LangGraph Cloud (Stateful AI Agents)

10 tools
Orchestrate stateful AI agents via LangGraph Cloud: manage assistants, monitor conversation threads, and handle human-in-the-loop overrides.

LlamaIndex (AI Data Framework & RAG)

6 tools
Query and manage RAG pipelines via LlamaIndex: run natural-language searches, audit indexed files, and monitor data pipelines.

Mem0

4 tools
Give your AI agent persistent memory: store, search, and recall facts, preferences, and context across sessions using the leading agent memory platform.

Midjourney AI (Generative Image Arts)

10 tools
Generate professional AI art via Midjourney: use 'imagine' for text-to-image, upscale grids, and perform camera edits.

Mistral AI (Frontier LLMs & Embeddings)

7 tools
Manage AI inference via Mistral: run chat completions, generate RAG embeddings, and audit frontier models.

New Relic AI (LLM Observability)

10 tools
Monitor and audit LLM telemetry via New Relic AI: track token costs, p95 latency, and user feedback.

NVIDIA AI

9 tools
Access LLMs, embeddings, code generation, and reasoning via the NVIDIA API Catalog.

NVIDIA Vision

9 tools
Generate images, analyze visuals, detect objects, and caption images via NVIDIA Vision APIs.

Perplexity AI

14 tools
Query Perplexity AI for real-time web search with citations: ask questions, run deep research and reasoning queries, and get structured answers directly from any AI agent.

Pinecone

7 tools
Equip your AI agent to manage your Pinecone vector databases: query embeddings, fetch metrics, manage collections, and view index stats directly from chat.

AI21 Studio

7 tools
Use AI21's Jamba models and language tools for summarization, paraphrasing, and grammar correction.

Anyscale

7 tools
Orchestrate your Anyscale infrastructure: manage LLM queries, vectors, services, and cluster batch jobs directly from your AI agent.

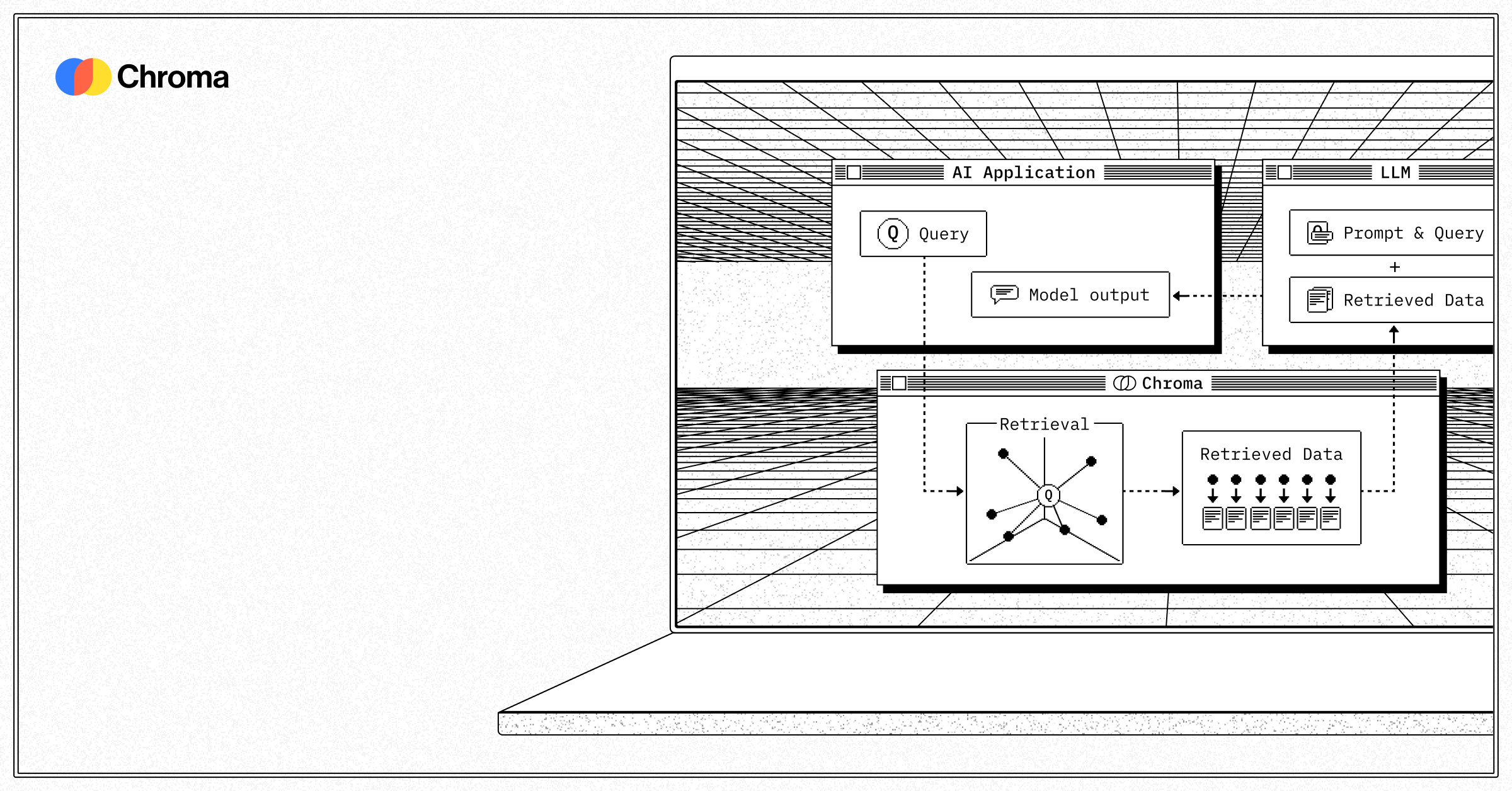

Chroma (Vector DB)

7 tools
Manage vector embeddings via Chroma: list collections, query embeddings, and audit document counts directly from any AI agent.

Deepgram

10 tools
Power audio AI via Deepgram: run high-speed speech-to-text, generate lifelike text-to-speech, track usage, and manage API keys directly from any AI agent.

Dify

6 tools
Manage agentic workflows via Dify: send chat messages, track conversations, audit app parameters, and handle file uploads directly from any AI agent.

ElevenLabs

10 tools
Generate high-quality AI speech via ElevenLabs: use lifelike voices, manage text-to-speech, track usage, and handle audio dubbing directly from any AI agent.

Exa

3 tools
Semantic search engine built for AI: find conceptually relevant web content, not just keyword matches. Powered by neural search technology.

Fireworks AI

6 tools
Empower LLM applications via Fireworks AI: run ultra-fast chat completions, generate embeddings and images, and transcribe audio directly from any AI agent.

Helicone (LLM Observability)

10 tools
Monitor LLM usage via Helicone: track requests, analyze costs, measure latency, and manage prompts.

Ideogram (AI Image Generation)

10 tools
Generate and edit images via Ideogram, the industry leader for rendering text within AI-generated visuals.

LanceDB (Serverless Vector DB)

6 tools
Manage vectorized data via LanceDB: run similarity searches, create tables, and manage multimodal embeddings.

LiteLLM (LLM Proxy & Spend Tracking)

10 tools
Manage your LLM gateway via LiteLLM: generate API keys, track spending, and orchestrate model fallback paths.

LlamaCloud (Managed RAG & Parsing)

6 tools
Manage RAG pipelines and document parsing via LlamaCloud: orchestrate LlamaParse jobs and audit data ingestion.

Luma AI (Generative Video & Creative)

10 tools
Generate cinematic AI videos and images via Luma: use Dream Machine for text-to-video, image-to-video, and professional camera control.

NVIDIA Audio

10 tools
Transcribe speech, generate voices, translate audio, and clone voices via NVIDIA Audio APIs.

NVIDIA API Catalog

8 tools
Cloud Engine proxy for running foundation-model completions on Nemotron and Llama 3 models.