LangSmith (LLM Observability & Hub) MCP Server

Monitor LLM apps via LangSmith — track traces, audit prompt templates, and manage evaluation datasets.

Vinkius AI Gateway supports streamable HTTP and SSE.

Works with all the AI agents you already use

…and any MCP-compatible client

LangSmith MCP Server: see your AI agent in action

Built-in capabilities (6)

get_run

Get precise telemetry for a single LLM invocation run

list_annotation_queues

List active human-in-the-loop annotation queues

list_datasets

List all evaluation and fine-tuning datasets mapped in LangSmith

list_projects

List all active LangSmith tracing projects/sessions. This maps out the boundaries of the distinct AI pipelines currently monitored by LangSmith.

list_prompts

Extract prompt templates hosted in the LangChain Hub

list_runs

List explicit LLM invocation runs within a specific project. This isolates the raw interactions: the prompts sent to the AI models and the responses received from them.
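As a rough illustration of how an MCP client might invoke the tools listed above, the sketch below builds JSON-RPC 2.0 `tools/call` requests, which is the message shape the MCP specification uses for tool invocation. The tool names are taken from the list; the argument key `run_id` is an assumption for illustration only, since this page does not document each tool's input schema.

```python
import json
from itertools import count
from typing import Optional

# Simple incrementing request ids, as JSON-RPC requires a unique id per request.
_request_ids = count(1)


def build_tool_call(tool_name: str, arguments: Optional[dict] = None) -> dict:
    """Build an MCP JSON-RPC 2.0 `tools/call` request for a single tool."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments or {}},
    }


# Tool names come from the capability list; argument keys are hypothetical.
list_projects_req = build_tool_call("list_projects")
get_run_req = build_tool_call("get_run", {"run_id": "<your-run-id>"})

print(json.dumps(get_run_req, indent=2))
```

In practice an MCP-compatible client builds and sends these messages for you; the real argument names can be discovered from the server's `tools/list` response.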

What this connector unlocks

Connect your LangSmith account to any AI agent and take full control of your LLM observability, tracing, and prompt management through natural conversation.

What you can do

- Trace Orchestration — List active tracing projects and retrieve detailed execution logs for specific LLM invocation runs directly from your agent

- Performance Telemetry — Extract precise metrics including token consumption, prompt latency, and exact error strings from your AI pipelines

- Prompt Hub Access — Navigate and retrieve managed prompt templates, variable definitions, and version histories hosted in the LangChain Hub

- Evaluation Datasets — Enumerate curated 'golden' datasets used for automated evaluation of prompt logic or few-shot injection models

- Human-in-the-Loop Audit — Monitor active annotation queues where human reviewers assess the alignment, accuracy, and safety of generated LLM traces

- Agentic Step Analysis — Deep-dive into multi-turn agentic workflows to understand nested tool calls and internal reasoning paths securely
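To make the telemetry item above more concrete, here is a minimal sketch of how latency, token consumption, and error state could be derived from a single run record. It assumes the record is a dictionary carrying `start_time`, `end_time`, `prompt_tokens`, `completion_tokens`, and `error` fields with ISO 8601 timestamps; the actual payload returned by `get_run` may be shaped differently, and the sample values below are made up.

```python
from datetime import datetime


def summarize_run(run: dict) -> dict:
    """Derive latency, token usage, and failure state from one run record."""
    start = datetime.fromisoformat(run["start_time"])
    end = datetime.fromisoformat(run["end_time"])
    error = run.get("error")
    return {
        "latency_s": (end - start).total_seconds(),
        "total_tokens": run.get("prompt_tokens", 0) + run.get("completion_tokens", 0),
        "failed": error is not None,
        "error": error,
    }


# Made-up sample record; field names are assumptions about the payload shape.
sample_run = {
    "id": "run-1",
    "start_time": "2024-01-01T12:00:00",
    "end_time": "2024-01-01T12:00:02.500000",
    "prompt_tokens": 120,
    "completion_tokens": 30,
    "error": None,
}

print(summarize_run(sample_run))
```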

How it works

1. Subscribe to this server

2. Enter your LangSmith API Key and Endpoint

3. Start monitoring your LLM infrastructure from Claude, Cursor, or any MCP-compatible client
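For step 3, many MCP clients read a JSON configuration that maps a server name to a remote URL. The sketch below generates such an entry. Both the `mcpServers`/`url` layout (used by several clients for remote servers) and the gateway URL are assumptions here: the URL is a placeholder, not a real Vinkius endpoint, and your client's documentation is the authority on the exact format.

```python
import json


def mcp_client_config(server_name: str, gateway_url: str) -> str:
    """Render a client config entry pointing at a remote MCP server."""
    config = {"mcpServers": {server_name: {"url": gateway_url}}}
    return json.dumps(config, indent=2)


# Placeholder URL for illustration; replace with the one your gateway provides.
print(mcp_client_config("langsmith", "https://gateway.example.invalid/mcp"))
```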

Who is this for?

- LLM Engineers — debug complex agentic traces and measure prompt performance through natural conversation without manual UI filtering

- AI Developers — retrieve the latest prompt templates from the Hub and verify evaluation dataset structures directly from your workspace

- AI Analysts — audit human feedback queues and report on overall model grounding and accuracy across multiple tracing projects

Frequently asked questions

Give your AI agents the power of LangSmith

Access LangSmith and more than 2,000 MCP servers, ready for your agents to use right now. No glue code. No custom integrations. Just plug in the Vinkius AI Gateway and let your agents work.

Você também pode gostar

NASA Media & Patents — Images, Videos & Technology Transfer

4 ferramentasSearch NASA's library of 140,000+ images and videos from every mission: Apollo, ISS, Hubble, Webb, Mars rovers, and more — plus browse NASA's technology transfer portfolio of patents and commercial spinoffs available for licensing.

Amplitude

10 ferramentasAnalyze product data via Amplitude — get user activity, calculate retention, analyze funnels, and track revenue directly from any AI agent.

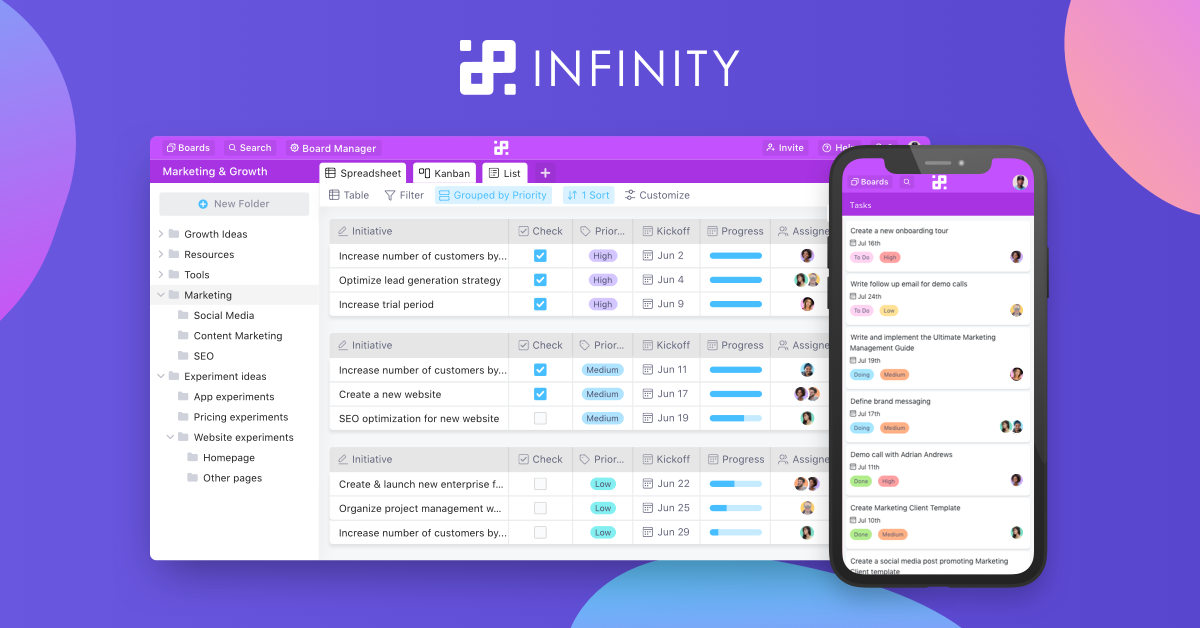

Infinity Work Manager

15 ferramentasManage work items, boards, comments, and folders via Infinity API.