Bring Async Check-Ins to Vercel AI SDK

Learn how to connect Range to Vercel AI SDK and start using 11 AI agent tools in minutes. Fully managed, enterprise secure, and ready to use without writing a single line of code.

What is the Range MCP Server?

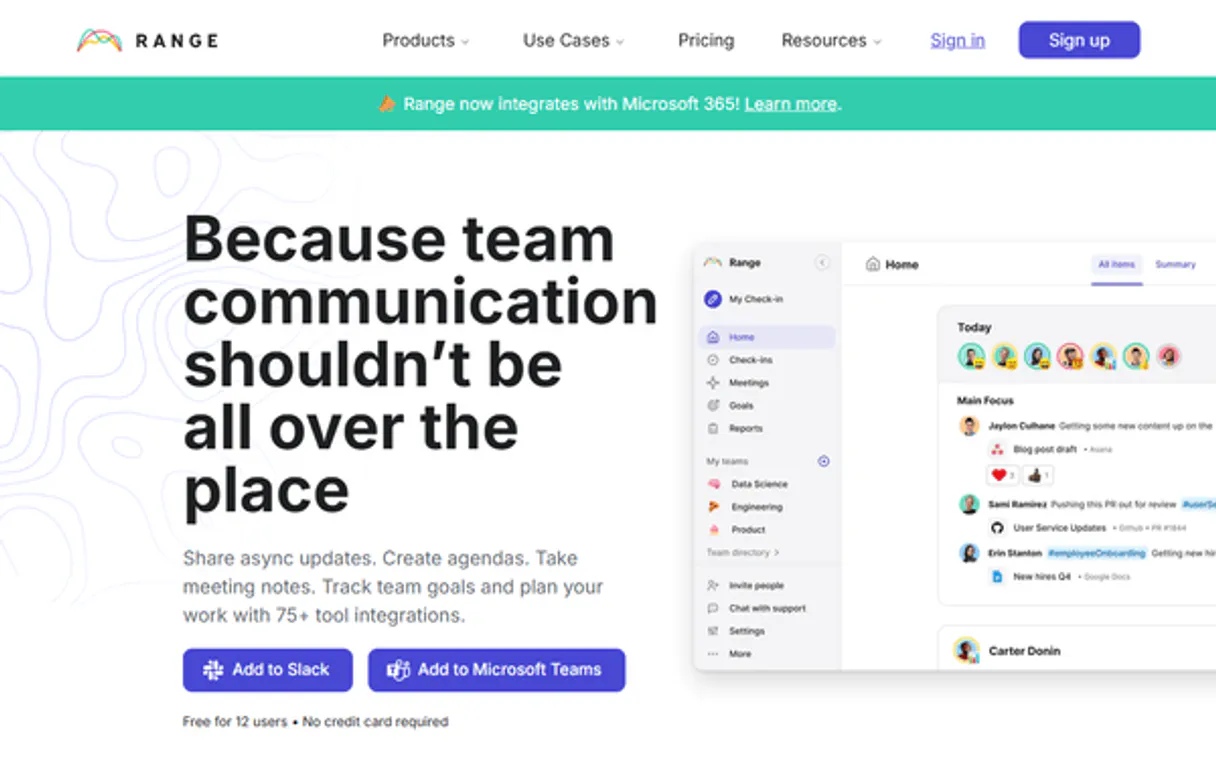

Connect your Range.co account to any AI agent and take full control of your team communication and check-in orchestration through natural conversation. Range provides a premier platform for keeping remote and hybrid teams synchronized, and this integration allows you to retrieve team metadata, monitor check-in updates (snippets), and track organizational objectives directly from your chat interface.

What you can do

- Check-in & Update Orchestration — List all managed updates and retrieve detailed metadata including snippet content programmatically.

- Team & User Lifecycle Management — Access and monitor your workspace teams and retrieve detailed user profile metadata directly from the AI interface.

- Objective & Goal Intelligence — Access organizational objectives to maintain a clear overview of team alignment and progress via natural language.

- Activity & Snippet Control — Retrieve specific snippets and check-in details to stay informed about daily team accomplishments.

- Operational Monitoring — Track system activity and manage workspace metadata using simple AI commands to ensure your team remains high-performing.

How it works

1. Subscribe to this server

2. Enter your Range API Key from your developer settings

3. Start managing your team check-ins from Claude, Cursor, or any MCP-compatible client

No more manual check-in reading or missed status updates. Your AI acts as a dedicated operations manager or team coordinator.

Who is this for?

- Engineering Managers — quickly retrieve daily updates and monitor team progress without switching apps.

- Product Leads — automate the retrieval of objective statuses and track feature delivery via natural conversation.

- Operations Teams — streamline the retrieval of user metadata and monitor organizational health directly within the chat.

Built-in capabilities (11)

Post a new standup update

Get details for a specific objective

Get details of a specific check-in snippet

Get details for a specific team

Get details of a specific update (check-in)

Get details for a specific team member

List all team goals

List team objectives

List all teams

List team check-ins (updates). Can be filtered by target_id or for_user_id.

List all users in the organization

Why Vercel AI SDK?

The Vercel AI SDK gives every Range tool full TypeScript type inference, IDE autocomplete, and compile-time error checking. Connect 11 tools through Vinkius and stream results progressively to React, Svelte, or Vue components. It works on Edge Functions, Cloudflare Workers, and any Node.js runtime.

- TypeScript-first: every MCP tool gets full type inference, IDE autocomplete, and compile-time error checking out of the box

- Framework-agnostic core: works with Next.js, Nuxt, SvelteKit, or any Node.js runtime, with the same Range integration everywhere

- Built-in streaming UI primitives: display Range tool results progressively in React, Svelte, or Vue components

- Edge-compatible: the AI SDK runs on Vercel Edge Functions, Cloudflare Workers, and other edge runtimes for minimal latency

Range in Vercel AI SDK

Range and 3,400+ other MCP servers. One platform. One governance layer.

Teams that connect Range to Vercel AI SDK through Vinkius don't need to source, host, or maintain individual MCP servers. Every tool call runs inside a hardened runtime with credential isolation, DLP, and a signed audit chain.

| | Raw MCP | Vinkius |
|---|---|---|
| Server catalog | Find and host yourself | 3,400+ managed |
| Infrastructure | Self-hosted | Sandboxed V8 isolates |
| Credential handling | Plaintext in config | Vault + runtime injection |
| Data loss prevention | None | Configurable DLP policies |
| Kill switch | None | Global instant shutdown |
| Financial circuit breakers | None | Per-server limits + alerts |
| Audit trail | None | Ed25519 signed logs |
| SIEM log streaming | None | Splunk, Datadog, Webhook |
| Honeytokens | None | Canary alerts on leak |
| Custom domains | Not applicable | DNS challenge verified |
| GDPR compliance | Manual effort | Automated purge + export |

Why teams choose Vinkius for Range in Vercel AI SDK

The Range MCP Server runs on Vinkius-managed infrastructure inside AWS — a purpose-built runtime with per-request V8 isolates, Ed25519 signed audit chains, and sub-40ms cold starts. All 11 tools execute in hardened sandboxes optimized for native MCP execution.

Your AI agents in Vercel AI SDK only access the data you authorize, with DLP that blocks sensitive information from ever reaching the model, a kill switch for instant shutdown, and up to 60% token savings. Enterprise-grade infrastructure, zero maintenance.

* Every MCP server runs on Vinkius-managed infrastructure inside AWS, a purpose-built runtime with per-request V8 isolates, Ed25519 signed audit chains, and sub-40ms cold starts optimized for native MCP execution.

How Vinkius secures Range for Vercel AI SDK

Every tool call from Vercel AI SDK to the Range MCP Server is protected by DLP redaction, cryptographic audit chains, V8 sandbox isolation, a kill switch, and financial circuit breakers.

Frequently asked questions

Can my AI automatically find the recent check-ins for a specific user?

Yes! Use the list_updates tool with the for_user_id parameter. Your agent will respond with the most recent check-in snippets and status updates in seconds.
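In normal use the model chooses the tool and fills in the parameter on its own. For debugging, the same lookup can be sketched as a direct call. This is a minimal sketch under stated assumptions: `ExecutableTool` is a local structural type standing in for whatever tool objects the SDK hands back, `listUpdatesArgs` and `recentCheckIns` are hypothetical helpers, and the filter values are assumed to be strings. Only the tool name `list_updates` and the filter names `for_user_id` and `target_id` come from this page.

```typescript
// Local structural type: the minimum this sketch needs from a tool object.
// It is an assumption about the tool shape, not an import from the SDK.
type ExecutableTool = {
  execute?: (
    input: Record<string, string>,
    options: { toolCallId: string; messages: unknown[] }
  ) => unknown;
};

// Filters named on this page for list_updates; string values are assumed.
type ListUpdatesFilter = { for_user_id?: string; target_id?: string };

// Hypothetical helper: keeps only the filters that were actually provided.
function listUpdatesArgs(filter: ListUpdatesFilter): Record<string, string> {
  const args: Record<string, string> = {};
  if (filter.for_user_id) args.for_user_id = filter.for_user_id;
  if (filter.target_id) args.target_id = filter.target_id;
  return args;
}

// Hypothetical helper: calls list_updates directly for one user.
// Returns whatever the tool returns (possibly a Promise), unmodified.
function recentCheckIns(
  tools: Record<string, ExecutableTool>,
  userId: string
): unknown {
  const tool = tools["list_updates"];
  if (!tool || !tool.execute) {
    throw new Error("list_updates tool is not available");
  }
  return tool.execute(listUpdatesArgs({ for_user_id: userId }), {
    toolCallId: "manual-list-updates", // arbitrary id for a manual call
    messages: [],
  });
}
```

Failing fast when `list_updates` is missing makes a misconfigured connection obvious before any prompt is sent.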

How do I find my Range API Key?

Log in to Range, go to Settings > Developer Settings > API Keys, and generate a new key for your account.

Can I see team objectives via the AI?

Yes, use the list_objectives tool to retrieve all active goals and targets configured in your Range workspace.

How does the Vercel AI SDK connect to MCP servers?

Import createMCPClient from @ai-sdk/mcp and pass the server URL. The SDK discovers all tools and provides typed TypeScript interfaces for each one.
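The connection step can be sketched as a small helper that prepares the transport settings before they are handed to createMCPClient. This is a sketch under assumptions, not the SDK's own code: `McpTransportConfig` is declared locally to mirror the option shape assumed here, `buildRangeTransport` is a hypothetical helper, and the bearer-token header and the `"http"` transport type are assumptions about how your endpoint authenticates. The real server URL comes from your Vinkius subscription.

```typescript
// Local type mirroring the transport shape this sketch assumes the SDK accepts.
// Declared here so the example has no package dependency.
type McpTransportConfig = {
  type: "sse" | "http";
  url: string;
  headers?: Record<string, string>;
};

// Hypothetical helper: validates the server URL and attaches an optional
// bearer token (whether a token header is needed is an assumption).
function buildRangeTransport(
  serverUrl: string,
  token?: string
): McpTransportConfig {
  if (serverUrl.indexOf("https://") !== 0) {
    throw new Error("MCP server URL must start with https://");
  }
  const config: McpTransportConfig = { type: "http", url: serverUrl };
  if (token) {
    config.headers = { Authorization: `Bearer ${token}` };
  }
  return config;
}
```

The returned object is meant to be passed as the `transport` option, for example `createMCPClient({ transport: buildRangeTransport(url) })`, after which calling `tools()` on the client yields the discovered tool set. Check the option names against the SDK version you have installed.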

Can I use MCP tools in Edge Functions?

Yes. The AI SDK is fully edge-compatible. MCP connections work on Vercel Edge Functions, Cloudflare Workers, and similar runtimes.

Does it support streaming tool results?

Yes. The SDK provides streaming primitives like useChat and streamText that handle tool calls and display results progressively in the UI.

createMCPClient is not a function

This usually means the package is missing. Install it with npm install @ai-sdk/mcp, then import createMCPClient from @ai-sdk/mcp.