Chainlit MCP. Audit AI chat history and model performance

Works with every AI agent you already use

…and any MCP-compatible client

Just plug in your AI agents and start using Vinkius.

Chainlit MCP Server lets your AI agent audit entire chat threads and monitor AI performance. It fetches global traffic stats, tracks user feedback (thumbs up/down), and maps internal model steps.

Diagnose model failures and analyze conversational patterns by querying project scope, thread history, and usage metrics.

What your AI agents can do

Get stats

Retrieves explicit analytics statistics covering traffic boundaries and resource usage for your projects.

Get thread

Retrieves the full data payload for a specific conversational thread, showing its node structure.

List feedbacks

Lists user reviews, tracking how users rate the conversational accuracy and value of your app.

Use get_stats to fetch global usage data, including traffic volumes and resource consumption across all configured projects.

Use get_thread to fetch the complete payload for a specific conversational thread, mapping its exact interaction nodes.

Use list_feedbacks to pull a list of user reviews, tracking sentiment and accuracy ratings across deployments.

Use list_projects to retrieve a list of all globally configured Chainlit Cloud projects managed by your account.

Use list_steps to list the raw programmatic interaction steps, showing prompts and outputs within a single thread.

Use list_threads to list all conversational threads, helping you pinpoint user interactions within a specific deployed project.
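As a rough illustration of what a call to one of these tools might look like on the wire, the sketch below builds an MCP `tools/call` request as a JSON-RPC 2.0 message. The six tool names come from the list above; the `build_tool_call` helper and the `thread_id` argument name are hypothetical, since this page does not document each tool's input schema.

```python
import json

# Tool names taken from the list above.
TOOLS = {
    "get_stats", "get_thread", "list_feedbacks",
    "list_projects", "list_steps", "list_threads",
}

def build_tool_call(name, arguments=None, request_id=1):
    """Build an MCP `tools/call` request as a JSON-RPC 2.0 message (hypothetical helper)."""
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments or {}},
    }

# "thread_id" is an assumed parameter name, used only for illustration.
request = build_tool_call("get_thread", {"thread_id": "example-thread"})
print(json.dumps(request, indent=2))
```

In practice your MCP client builds and sends this message for you; the sketch only shows the shape of the request.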


Chainlit MCP Server: 6 Tools for AI Observability

These tools let your AI agent pull deep data on chat history, project stats, and user feedback directly from your Chainlit Cloud environment.

Get stats

Retrieves explicit analytics statistics covering traffic boundaries and resource usage for your projects.

Get thread

Retrieves the full data payload for a specific conversational thread, showing its node structure.

List feedbacks

Lists user reviews, tracking how users rate the conversational accuracy and value of your app.

List projects

Lists all globally configured Chainlit Cloud projects, acting as independent app tracking spaces.

List steps

Lists the raw programmatic steps, detailing the prompts and outputs within a single interaction thread.

List threads

Lists all conversational threads, pinpointing user interactions within a specific deployed project.

Choose How to Get Started

Build a custom MCP for your own tools, or connect a ready-made integration from our catalog.

Build Your Own

Turn any API into an MCP. Import a spec, define Agent Skills, or deploy with MCPFusion.

- Import from OpenAPI, Swagger, or YAML specs

- Create Agent Skills with progressive disclosure

- Deploy to edge with MCPFusion framework

- Built-in DLP, auth, and compliance on every call

- Real-time usage dashboard and cost metering

- Publish to catalog or keep private

Make Your AI Do More

Start with Chainlit, then connect any of our 4,700+ other servers whenever your AI needs more. One click, no limits.

- Use this MCP plus 4,700+ others, all in one place

- Add new capabilities to your AI anytime you want

- Every connection is secured and compliant automatically

- Track usage and costs across all your servers

- Works with Claude, ChatGPT, Cursor, and more

- New servers added to the catalog every week

What you can do with this MCP connector

Your AI client uses the get_stats tool to pull global usage data, showing traffic volumes and resource consumption across all your configured projects. It can grab a list of all globally set up Chainlit Cloud projects using list_projects. To find specific user interactions, your agent runs list_threads, giving you a list of every conversational thread within a deployed project.

For a full conversation, the agent uses get_thread to pull the complete payload for a specific thread, mapping out every interaction node. You can check user sentiment and accuracy ratings by running list_feedbacks, which pulls a list of user reviews. If you need to see the raw logic inside a single chat, list_steps lists the programmatic interaction steps, detailing the prompts and outputs for every turn.

How Chainlit MCP Works

- 1 Subscribe to this server and provide your Chainlit Cloud URL and Project API Key.

- 2 Your AI client executes a tool call (e.g., list_threads) against the server's API endpoint.

- 3 The server processes the request, fetches the raw data from Chainlit Cloud, and returns the structured results directly to your agent for analysis.
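The three steps above can be sketched as a small in-memory simulation. Everything here is a stand-in: `ServerConfig`, `fake_chainlit_fetch`, `handle_tool_call`, the placeholder URL, and the sample thread data are hypothetical and make no network calls. The sketch only shows the order of operations, not how the server is actually implemented.

```python
from dataclasses import dataclass

@dataclass
class ServerConfig:
    # Step 1: the two values you provide when subscribing.
    chainlit_url: str
    project_api_key: str

def fake_chainlit_fetch(config, tool, arguments):
    """Stand-in for the upstream Chainlit Cloud request (no network I/O)."""
    if tool == "list_threads":
        return [{"id": "t-1"}, {"id": "t-2"}]  # made-up sample data
    raise ValueError(f"tool not stubbed: {tool}")

def handle_tool_call(config, tool, arguments=None):
    """Steps 2 and 3: accept a tool call, fetch raw data, return structured results."""
    if not config.project_api_key:
        raise PermissionError("missing Project API Key")
    raw = fake_chainlit_fetch(config, tool, arguments or {})
    return {"tool": tool, "count": len(raw), "items": raw}

config = ServerConfig("https://chainlit.example.invalid", "placeholder-key")
result = handle_tool_call(config, "list_threads")
print(result["count"])
```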

The bottom line is, your agent connects to Chainlit Cloud and pulls deep, structured data about your AI app's performance and conversations.

Who Is Chainlit MCP For?

This is for AI developers and product managers who need to move past simple log viewing. If you're tired of guessing why your bot failed in production, or if you need to prove the value of your AI feature with hard data, this is for you. It lets you diagnose failures and track user satisfaction without touching the actual cloud console.

Uses list_steps and get_thread to diagnose exactly why a model failed, identifying the precise logical sequence and parameters used for a bad output.

Runs list_feedbacks to monitor the total positive versus negative outcomes, and uses get_stats to summarize the worst user chats automatically.

Polls the server using list_threads to evaluate new conversations' tone, relevance, and compliance across hundreds of hours of data without reading logs manually.

What Changes When You Connect

- See project-wide performance using get_stats. You get metrics on total traffic, unique users, and resource consumption across your entire AI portfolio.

- Pinpoint the exact failure point with list_steps and get_thread. You map the precise sequence of prompts, outputs, and tool calls that led to a bad response.

- Monitor user sentiment using list_feedbacks. You automatically gather lists of thumbs up/down and text reviews, giving you a quantifiable measure of user satisfaction.

- Scope out your environment with list_projects. You quickly see every independent app tracking space you run, making it easy to target your diagnostics.

- Track conversation flow with list_threads. You find all raw user interactions in a specific project, allowing you to sample conversations for QA without manual log diving.

- Identify the root cause of an error. You combine list_threads and list_steps to trace a user's journey and the specific logic failure that occurred.
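To make the last point concrete, here is a minimal sketch of chaining list_threads and list_steps to find a user's first failing step. The two functions below are local stubs named after the tools, and the field names (`id`, `user`, `type`, `error`) are assumptions about the payload shape, not the documented schema.

```python
# Made-up sample data; field names are assumptions about the payload shape.
THREADS = [{"id": "t-1", "user": "alice"}, {"id": "t-2", "user": "bob"}]
STEPS = {
    "t-1": [{"type": "llm", "error": None}],
    "t-2": [
        {"type": "llm", "error": None},
        {"type": "tool", "error": "timeout"},
        {"type": "llm", "error": None},
    ],
}

def list_threads(user):
    """Stub for the list_threads tool, filtered by a hypothetical user field."""
    return [t for t in THREADS if t["user"] == user]

def list_steps(thread_id):
    """Stub for the list_steps tool."""
    return STEPS[thread_id]

def first_failure(user):
    """Return (thread_id, step_index, step) for the user's first failing step, or None."""
    for thread in list_threads(user):
        for index, step in enumerate(list_steps(thread["id"])):
            if step["error"] is not None:
                return thread["id"], index, step
    return None

print(first_failure("bob"))
```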

Real-World Use Cases

Debugging a Production Bug

A developer sees a user report a weird output. Instead of guessing, they ask their agent to run list_steps and get_thread on the specific interaction. This immediately shows the exact logical sequence and parameter stack that caused the failure, letting them fix the model.

Measuring Bot Value

A product team needs to show stakeholders the bot's effectiveness. They use list_feedbacks to gather all negative signals and get_stats to calculate total engagement. They can then prompt the agent to summarize the worst chats, proving where the bot needs work.

QA Compliance Sweep

A QA specialist needs to check for compliance across hundreds of chats. They run list_threads for a time window and then use list_steps on a sample. This allows them to evaluate tone and relevance across massive data volumes without reading a single log file.
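A sweep like this comes down to two operations on the thread list: filter by time window, then draw a reproducible sample to inspect with list_steps. The sketch below assumes each thread carries a `created_at` timestamp, which is a guess at the payload, and uses made-up data in place of a real list_threads response.

```python
import random
from datetime import datetime, timezone

def in_window(threads, start, end):
    """Keep threads created in the half-open window [start, end)."""
    return [t for t in threads if start <= t["created_at"] < end]

def sample_threads(threads, k, seed=0):
    """Draw up to k threads; a fixed seed keeps the QA sample reproducible."""
    rng = random.Random(seed)
    return rng.sample(threads, min(k, len(threads)))

# Made-up stand-in for a list_threads response: one thread per day, Jan 1-10.
threads = [
    {"id": f"t-{day}", "created_at": datetime(2024, 1, day, tzinfo=timezone.utc)}
    for day in range(1, 11)
]
start = datetime(2024, 1, 3, tzinfo=timezone.utc)
end = datetime(2024, 1, 8, tzinfo=timezone.utc)

windowed = in_window(threads, start, end)
sample = sample_threads(windowed, 2)
print(len(windowed), [t["id"] for t in sample])
```

Fixing the seed means a second reviewer can re-run the sweep and audit the same conversations.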

Understanding Scope

A new engineer joins the team. They run list_projects first to see the full scope of AI deployments. Then they use get_stats to benchmark the performance of the most critical project, getting a clear picture of the whole system.

The Tradeoffs

Reading Logs Manually

A developer has to jump into the Chainlit Cloud UI, manually filter by date, search for a user ID, and scroll through thousands of lines of text to find the failure point. It's a time sink.

→

Let your agent run list_threads to find the relevant conversation ID. Then, feed that ID into get_thread and list_steps. This gives you the full, structured context instantly.

Assuming Tool Coverage

A team thinks they just need to check total counts, so they only run get_stats. They miss the underlying reason for the drop in usage (e.g., a specific tool failing).

→

Always cross-reference get_stats with list_feedbacks. If usage is down, check the negative sentiment data to find out why users are leaving.
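A minimal sketch of the sentiment side of that cross-check: tally thumbs up and down from a feedback list and compute the negative share. The record shape (`value` of 1 for thumbs up and 0 for thumbs down, plus a `comment`) is an assumption for illustration, and the data is made up.

```python
from collections import Counter

def summarize_feedback(feedbacks):
    """Count thumbs up/down and compute the share of negative ratings."""
    counts = Counter("up" if f["value"] == 1 else "down" for f in feedbacks)
    total = counts["up"] + counts["down"]
    negative_share = counts["down"] / total if total else 0.0
    return {"up": counts["up"], "down": counts["down"], "negative_share": negative_share}

# Made-up stand-in for a list_feedbacks response.
feedbacks = [
    {"value": 1, "comment": ""},
    {"value": 0, "comment": "wrong answer"},
    {"value": 0, "comment": "tool timed out"},
    {"value": 1, "comment": "helpful"},
]

summary = summarize_feedback(feedbacks)
complaints = [f["comment"] for f in feedbacks if f["value"] == 0 and f["comment"]]
print(summary, complaints)
```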

Checking One Project at a Time

A PM only checks the main 'Alpha' project's dashboard and assumes everything is fine, missing performance issues in a secondary, critical 'Beta' deployment.

→

Start by calling list_projects to get a manifest of all deployed apps. Then, run get_stats on each project ID to ensure you have full visibility across your entire portfolio.
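The loop described here can be sketched as follows. Both functions are local stubs named after the tools, and the stats fields (`threads`, `tokens`) and project IDs are hypothetical sample data; the point is only that every project gets checked, not just the main one.

```python
# Made-up sample data; field names and project IDs are assumptions.
PROJECTS = [{"id": "alpha"}, {"id": "beta"}]
STATS = {
    "alpha": {"threads": 120, "tokens": 60000},
    "beta": {"threads": 8, "tokens": 40000},
}

def list_projects():
    """Stub for the list_projects tool."""
    return PROJECTS

def get_stats(project_id):
    """Stub for the get_stats tool, scoped to one project."""
    return STATS[project_id]

def tokens_per_thread_by_project():
    """Visit every project and compute average tokens per thread."""
    report = {}
    for project in list_projects():
        stats = get_stats(project["id"])
        threads = stats["threads"]
        report[project["id"]] = stats["tokens"] / threads if threads else 0.0
    return report

print(tokens_per_thread_by_project())
```

In this made-up data the secondary project burns ten times more tokens per thread than the main one, the kind of outlier a single-dashboard check would miss.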

When It Fits, When It Doesn't

Use this if you need to diagnose why your AI app is performing a certain way, not just that it's performing. It's essential if you need to correlate usage metrics (get_stats) with user sentiment (list_feedbacks) or trace the exact failure path (list_steps). Don't use it if all you need is a simple API call or a static data pull; those jobs are for specialized data services. If you just need to see the list of projects, use list_projects. But if you need to know the performance of those projects, you need the whole stack.

Independent Platform Disclaimer: Vinkius is an independent platform and is not affiliated with, endorsed by, sponsored by, verified by, or otherwise authorized by Chainlit. All third-party trademarks, logos, and brand names are the property of their respective owners. Their use on this website is strictly for informational purposes to identify service compatibility and interoperability.

VINKIUS INFRASTRUCTURE

Cloud Hosted

Managed infra

V8 Isolated

Sandboxed per request

Zero-Trust Proxy

No stored credentials

DLP Enforced

Policy on every call

GDPR Compliant

EU data residency

Token Compression

~60% cost reduction

Works with Claude, ChatGPT, Cursor, and more

The Model Context Protocol standardizes how applications expose capabilities to LLMs. Instead of operating in isolation, your AI gains direct access to external platforms, live data, and real-world actions through secure, standardized connections.

This server provides 6 capabilities that interface natively with Claude, ChatGPT, Cursor, and any MCP client. No middleware. No custom integration required.

Available Capabilities

Debugging AI chat failures shouldn't feel like forensic accounting.

Today, figuring out why an AI model gave a bad answer means clicking through dashboards, manually filtering timestamps, and cross-referencing error codes across multiple tabs. It's slow, tedious, and you're always missing the full picture.

With the Chainlit MCP Server, your agent handles the plumbing. You ask it to diagnose a failure, and it runs the necessary checks, pulling the full conversation history (`get_thread`) and the precise logical steps (`list_steps`). You get the root cause, instantly.

Chainlit MCP Server: Full Visibility with `list_feedbacks`

Collecting user feedback manually means asking users to email a form or scraping widget data yourself. The result is fragmented, inconsistent, and rarely actionable.

Now, your agent runs `list_feedbacks`. It pulls a structured list of all ratings and comments directly from the source. You get a clean, comprehensive view of user sentiment that you can act on immediately.

Common Questions About Chainlit MCP

How do I use the Chainlit MCP Server to find out how many users used my AI app?

You use the get_stats tool. This function retrieves global usage metrics, giving you total traffic counts and distinct user adoption figures across your projects.

I need to see the full conversation history for a specific user. Which tool do I use on the Chainlit MCP Server?

Use get_thread. This tool retrieves the full data payload for a specific conversation thread, mapping all the node topologies and interactions.

Can I use the Chainlit MCP Server to find out if my model failed because of a bad prompt?

Run list_steps. This tool lists the raw programmatic steps, showing the exact prompts and outputs used during an interaction, helping you trace logic flaws.

How do I see all the different AI apps I have running on Chainlit Cloud?

You run list_projects. This lists every globally configured project, giving you a clear manifest of all your independent app tracking spaces.

What is the best way to check user satisfaction using the Chainlit MCP Server?

Use list_feedbacks. This tool gathers user reviews and ratings, providing a structured view of conversational accuracy and value across all deployments.

How do I use the `list_steps` tool to diagnose why an AI interaction failed?

The list_steps tool reveals the full execution path. It shows the exact sequence of prompts, tool calls, and model outputs used during a single interaction, letting you pinpoint where the logic broke.

What should I know about the data I get when I use the `get_stats` tool?

The get_stats tool provides aggregate data on traffic and resource use. It returns counts for conversations and the total internal tokens consumed across your entire project.

If I need to check many conversations, how do I use the `list_threads` tool?

The list_threads tool lists conversation threads within a project. It gives you the unique identifiers and boundaries for all user interactions, helping you select which ones to deep-dive into.

Will the AI agent be able to monitor the user interactions and evaluate chat history?

Yes! The agent can dive into the list_threads and get_thread endpoints to retrieve comprehensive interaction logs from your deployed Chainlit apps. You can essentially command the agent to read past AI chats, summarize usage, or identify edge cases in the user input.

Can it track the individual thought steps and LLM prompt tokens consumed?

Absolutely. Using the list_steps tool, your agent analyzes the programmatic trace, including specific LLM calls, function blocks, and retrieval events. That makes identifying hallucinations or latency issues as easy as typing a prompt.

Is it possible to extract and analyze human feedback scores instantly?

Yes. The integration provides native capabilities via list_feedbacks to retrieve the explicit thumbs up, down, and textual comments your users left on specific messages, streamlining QA.

Use it with your favorite AI tools

Connect this server to Cursor, Claude, VS Code, and more.

More in this category

Flowise

Manage low-code AI workflows via Flowise — run predictions, track chatflows and agentflows, handle tools, and audit execution history directly from any AI agent.

Runlayer

AI enterprise control plane: manage MCP servers, skills, agents, and security policies via agents.

Browserbase

Cloud browser infrastructure for AI agents — create, control, and manage headless Chromium sessions via CDP for automated web interaction.

You might also like

Open-Meteo Marine Weather

Empower your AI with ocean intelligence: wave height, swell forecasts, ocean currents, tides, and sea surface temperature at 5km resolution — built for maritime professionals.

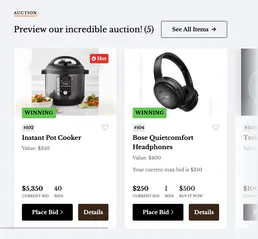

ClickBid

Manage auction events, bidders, items, and donations directly via AI.

Tenderly (Ethereum Dev Platform)

Simulate Ethereum transactions, create Virtual TestNets, and monitor on-chain events directly from your AI agent.