FlowiseAI MCP Server for Cursor

Give Cursor instant access to 12 tools to Execute Chatflow Prediction, Get Chatflow Details, Get Server Version, and more

Cursor is an AI-first code editor built on VS Code that integrates LLM-powered coding assistance directly into the development workflow. Its Agent mode enables autonomous multi-step coding tasks, and MCP support lets agents access external data sources and APIs during code generation.


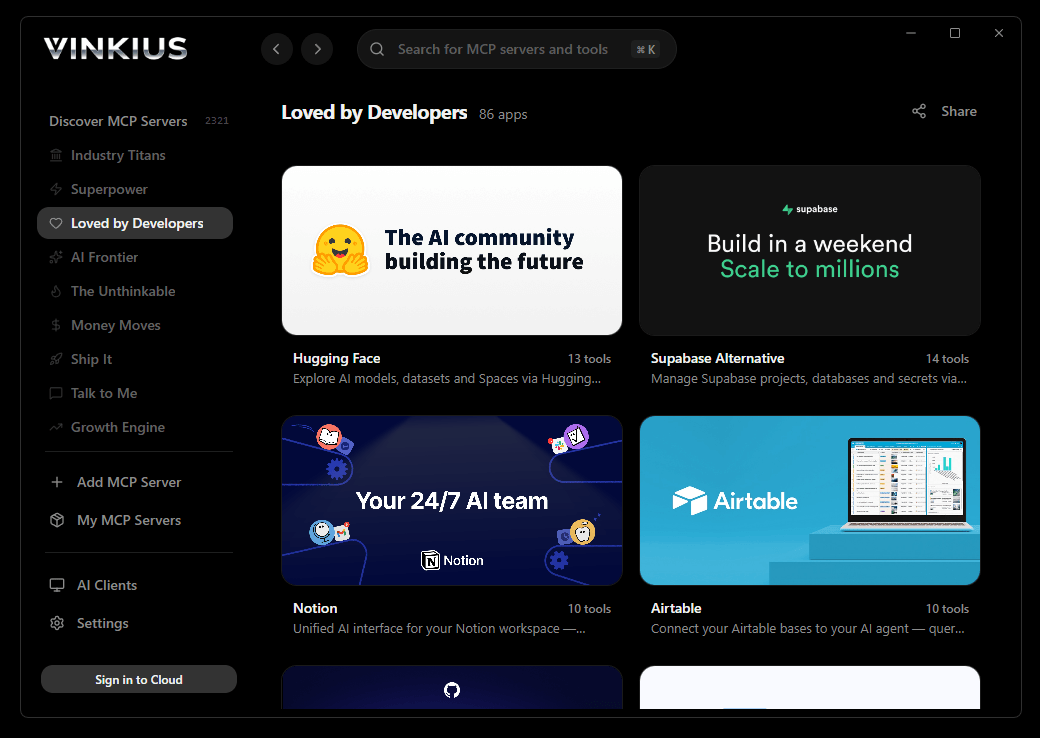

The FlowiseAI app connector for Cursor is a standout in the Friends Mcp category — giving your AI agent 12 tools to work with, ready to go from day one.

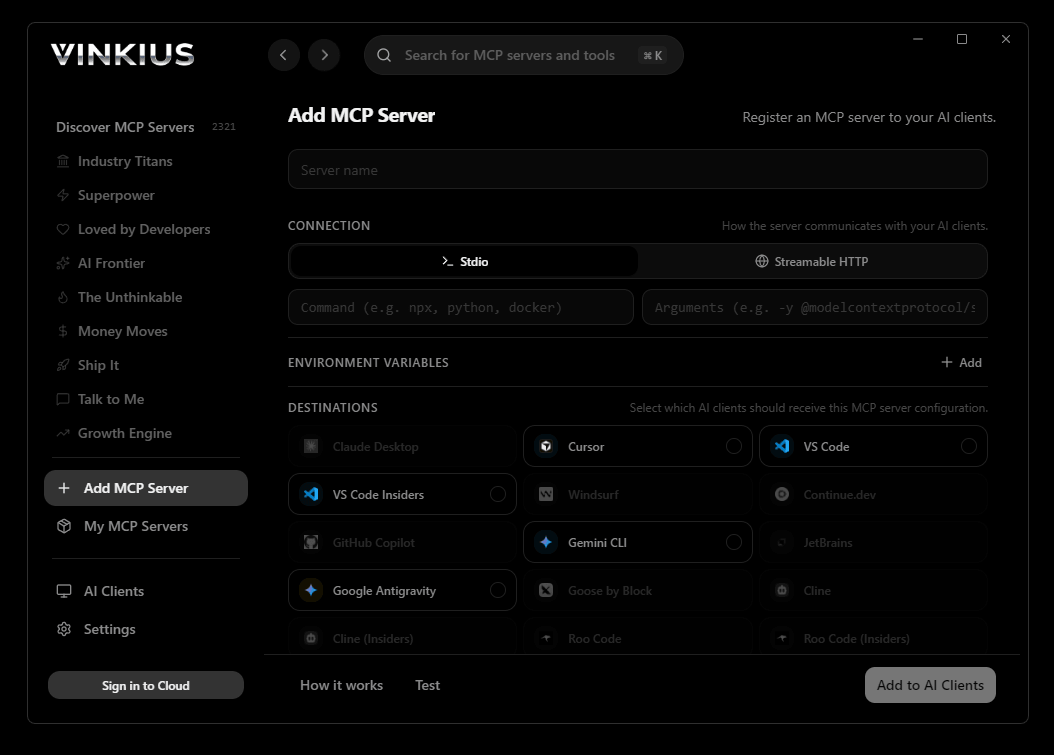

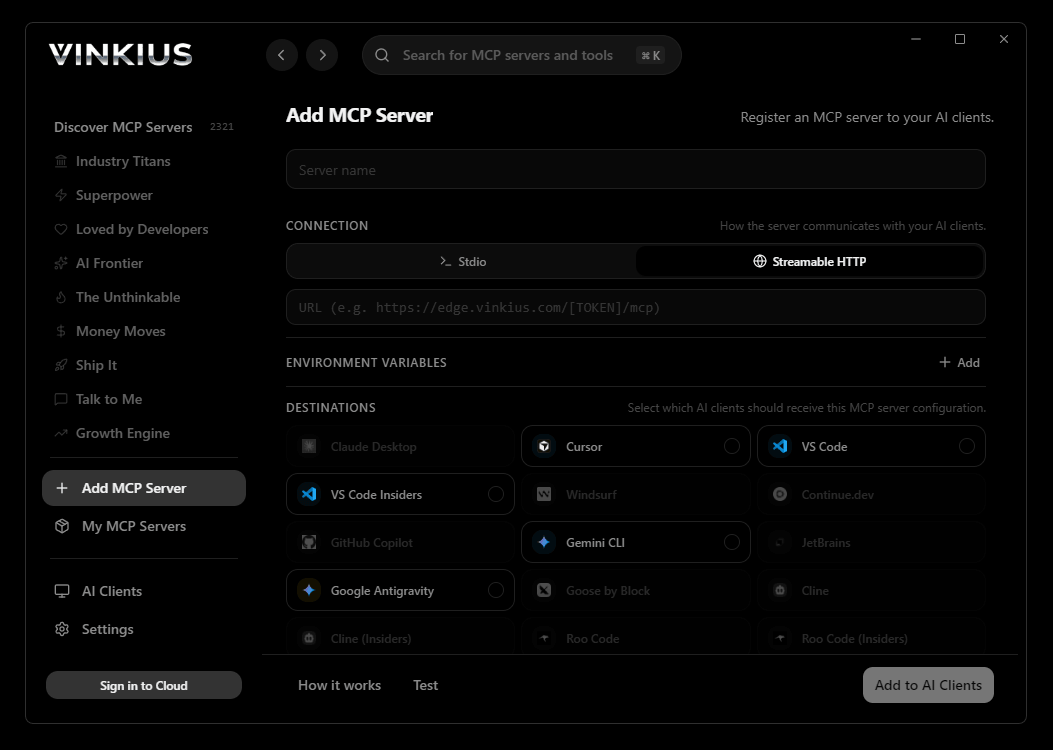

Vinkius delivers Streamable HTTP and SSE to any MCP client

Vinkius Desktop App

The modern way to manage MCP Servers — no config files, no terminal commands. Install FlowiseAI and 3,400+ MCP Servers from a single visual interface.

    {
      "mcpServers": {
        "flowiseai": {
          "url": "https://edge.vinkius.com/[YOUR_TOKEN_HERE]/mcp"
        }
      }
    }

* Every MCP server runs on Vinkius-managed infrastructure inside AWS: a purpose-built runtime with per-request V8 isolates, Ed25519 signed audit chains, and sub-40ms cold starts optimized for native MCP execution.

About FlowiseAI MCP Server

Connect your FlowiseAI (self-hosted) instance to any AI agent and take full control of your LLM orchestration and RAG workflows through natural conversation.

Cursor's Agent mode turns FlowiseAI into an in-editor superpower. Ask Cursor to generate code using live data from FlowiseAI and it fetches, processes, and writes, all in a single agentic loop. The 12 tools appear alongside file editing and terminal access, creating a unified development environment grounded in real-time information.

What you can do

- Prediction Orchestration — Trigger specific chatflows and retrieve LLM-generated responses programmatically using natural language inputs

- Chatflow Management — List all orchestration flows and retrieve detailed technical structures and metadata to monitor your AI agents

- Vector Intelligence — Programmatically upsert documents or raw data into the vector stores linked to your chatflows to ensure high-fidelity context

- Component Oversight — Access server-wide credentials, custom tools, and global variables to manage your complete Flowise ecosystem

- Operational Visibility — Monitor user feedback, leads, and assistant profiles directly through your agent for instant reporting
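As a rough illustration of what Prediction Orchestration amounts to underneath, the sketch below builds (but does not send) an HTTP request for a single chatflow prediction. It assumes a self-hosted Flowise instance that exposes a `POST /api/v1/prediction/{chatflowId}` endpoint accepting a JSON body with a `question` field and an optional bearer API key; the base URL, the chatflow ID `cf_1`, and the helper name `build_prediction_request` are illustrative only, and how the MCP server itself talks to Flowise is not described on this page.

```python
import json
import urllib.request
from typing import Optional


def build_prediction_request(
    base_url: str,
    chatflow_id: str,
    question: str,
    api_key: Optional[str] = None,
) -> urllib.request.Request:
    """Build (without sending) a prediction request for one chatflow."""
    # Assumed endpoint shape; verify it against your Flowise version.
    url = f"{base_url.rstrip('/')}/api/v1/prediction/{chatflow_id}"
    headers = {"Content-Type": "application/json"}
    if api_key:
        # Assumption: the instance is protected by a bearer API key.
        headers["Authorization"] = f"Bearer {api_key}"
    body = json.dumps({"question": question}).encode("utf-8")
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


# Hypothetical local instance and chatflow ID, for illustration only.
req = build_prediction_request(
    "http://localhost:3000", "cf_1", "How do I reset my password?"
)
print(req.get_method(), req.full_url)
```

Building the request separately from sending it keeps the example safe to run without a live server; passing `req` to `urllib.request.urlopen` would perform the actual call.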

The FlowiseAI MCP Server exposes 12 tools through Vinkius. Connect it to Cursor in under two minutes, with no API keys to rotate, no infrastructure to provision, and no vendor lock-in. Your configuration, your data, your control.

All 12 FlowiseAI tools available for Cursor

When Cursor connects to FlowiseAI through Vinkius, your AI agent gets direct access to every tool listed below — spanning llm-workflows, rag-pipelines, chatbot-development, and more. Every call is secured with network, filesystem, subprocess, and code evaluation entitlements inside a sandboxed runtime. Beyond a simple connection, you get a full AI Gateway with real-time visibility into agent activity, enterprise governance, and optimized token usage.

- Trigger an LLM flow prediction

- Get details for a specific chatflow

- Get Flowise server version

- List OpenAI-style assistants

- List user feedback for a chatflow

- List all LLM orchestration flows

- List custom tools

- List captured leads

- List global variables

- List configured credentials

- List chatflow templates

- Push data into a vector store
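When an agent invokes one of these tools, an MCP client sends a JSON-RPC 2.0 `tools/call` request naming the tool and its arguments. The sketch below shows the general shape of such a message. The tool name `execute_chatflow_prediction` and the argument names `chatflow_id` and `question` are hypothetical; the real identifiers are whatever the server reports in response to `tools/list`.

```python
import json
from itertools import count

# Each JSON-RPC request carries an id so the response can be matched to it.
_request_ids = count(1)


def tools_call(name: str, arguments: dict) -> dict:
    """Build an MCP `tools/call` request as a plain dictionary."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


# Hypothetical tool and argument names, for illustration only.
request = tools_call(
    "execute_chatflow_prediction",
    {"chatflow_id": "cf_1", "question": "How do I reset my password?"},
)
print(json.dumps(request, indent=2))
```

In practice Cursor builds and sends these messages itself; the sketch is only meant to make visible what a natural-language prompt is translated into.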

Connect FlowiseAI to Cursor via MCP

Follow these steps to wire FlowiseAI into Cursor. The entire setup takes under two minutes, and your credentials stay safe behind Vinkius.

- Open MCP Settings: press Cmd+Shift+P (macOS) or Ctrl+Shift+P (Windows/Linux) and search for "MCP Settings".

- Add the server config: paste the FlowiseAI server configuration into the mcp.json file that opens.

- Save the file.

- Start using FlowiseAI.
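After saving the file, a quick sanity check can catch the most common mistakes, such as malformed JSON or a token placeholder that was never replaced. The following is a minimal sketch, assuming the config follows the `mcpServers` / `flowiseai` / `url` layout shown earlier on this page; the function name `check_mcp_config` and the specific checks are illustrative, not part of Cursor or Vinkius.

```python
import json
from typing import List

PLACEHOLDER = "[YOUR_TOKEN_HERE]"


def check_mcp_config(text: str, server_name: str = "flowiseai") -> List[str]:
    """Return a list of problems found; an empty list means none were found."""
    try:
        config = json.loads(text)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg} (line {exc.lineno})"]
    if not isinstance(config, dict):
        return ["top level must be a JSON object"]
    servers = config.get("mcpServers")
    if not isinstance(servers, dict):
        return ['missing "mcpServers" object']
    entry = servers.get(server_name)
    if not isinstance(entry, dict):
        return [f'no "{server_name}" entry under "mcpServers"']
    problems = []
    url = entry.get("url", "")
    if not isinstance(url, str) or not url.startswith("https://"):
        problems.append('"url" should be an https:// address')
    elif PLACEHOLDER in url:
        problems.append("placeholder token has not been replaced")
    return problems


sample = '{"mcpServers": {"flowiseai": {"url": "https://edge.vinkius.com/[YOUR_TOKEN_HERE]/mcp"}}}'
print(check_mcp_config(sample))
```

Running the check on the unedited template reports the leftover placeholder, which is a reminder to paste in your own token before restarting Cursor.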

Why Use Cursor with the FlowiseAI MCP Server

Cursor AI Code Editor provides unique advantages when paired with FlowiseAI through the Model Context Protocol.

Agent mode turns Cursor into an autonomous coding assistant that can read files, run commands, and call MCP tools without switching context

Cursor's Composer feature can generate entire files using real-time data fetched through MCP, with no copy-pasting from external dashboards

MCP tools appear alongside built-in tools like file reading and terminal access, creating a unified agentic environment

VS Code extension compatibility means your existing workflow, keybindings, and extensions all work alongside MCP tools

FlowiseAI + Cursor Use Cases

Practical scenarios where Cursor combined with the FlowiseAI MCP Server delivers measurable value.

Code generation with live data: ask Cursor to generate a security report module using live DNS and subdomain data fetched through MCP

Automated documentation: have Cursor query your API's tool schemas and generate TypeScript interfaces or OpenAPI specs automatically

Infrastructure-as-code: Cursor can fetch domain configurations and generate corresponding Terraform or CloudFormation templates

Test scaffolding: ask Cursor to pull real API responses via MCP and generate unit test fixtures from actual data

Example Prompts for FlowiseAI in Cursor

Ready-to-use prompts you can give your Cursor agent to start working with FlowiseAI immediately.

"List all my chatflows in Flowise."

"Execute chatflow 'cf_1' with question: 'How do I reset my password?'"

"Upsert this data into vector store for chatflow 'cf_2': [data]"

Troubleshooting FlowiseAI MCP Server with Cursor

Common issues when connecting FlowiseAI to Cursor through Vinkius, and how to resolve them.

Tools not appearing in Cursor

Server shows as disconnected

FlowiseAI + Cursor FAQ

Common questions about integrating FlowiseAI MCP Server with Cursor.

What is Agent mode and why does it matter for MCP?

Where does Cursor store MCP configuration?

Cursor stores MCP configuration in an mcp.json file. You can configure servers at the project level (.cursor/mcp.json in your project root) or globally (~/.cursor/mcp.json). Project-level configs take precedence.
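If you prefer to script the setup, the sketch below adds a server entry to either location while leaving any other configured servers untouched. It assumes the file, when present, contains a valid JSON object; the helper name `add_server` is hypothetical, and the two paths simply mirror the project-level and global locations described above.

```python
import json
from pathlib import Path


def add_server(config_path: Path, name: str, url: str) -> dict:
    """Add or update one server entry, keeping other servers already configured."""
    config = {}
    if config_path.exists():
        # Assumes the existing file holds a valid JSON object.
        config = json.loads(config_path.read_text(encoding="utf-8"))
    config.setdefault("mcpServers", {})[name] = {"url": url}
    config_path.parent.mkdir(parents=True, exist_ok=True)
    config_path.write_text(json.dumps(config, indent=2) + "\n", encoding="utf-8")
    return config


# The two locations named above; nothing is written until add_server is called.
project_config = Path(".cursor") / "mcp.json"          # project level
global_config = Path.home() / ".cursor" / "mcp.json"   # global
```

For example, `add_server(project_config, "flowiseai", "https://edge.vinkius.com/[YOUR_TOKEN_HERE]/mcp")`, with your own token substituted for the placeholder, would write the project-level entry.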