Groq MCP Server for Cursor: 8 tools, connect in under 2 minutes

Cursor is an AI-first code editor built on VS Code that integrates LLM-powered coding assistance directly into the development workflow. Its Agent mode enables autonomous multi-step coding tasks, and MCP support lets agents access external data sources and APIs during code generation.

ASK AI ABOUT THIS MCP SERVER

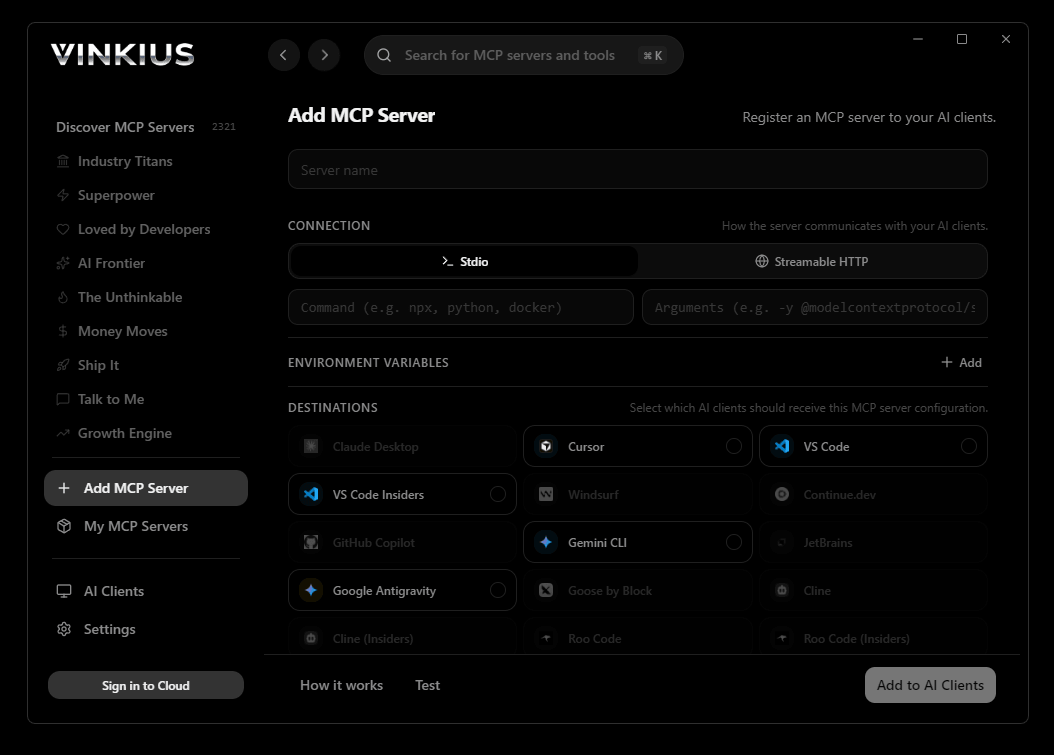

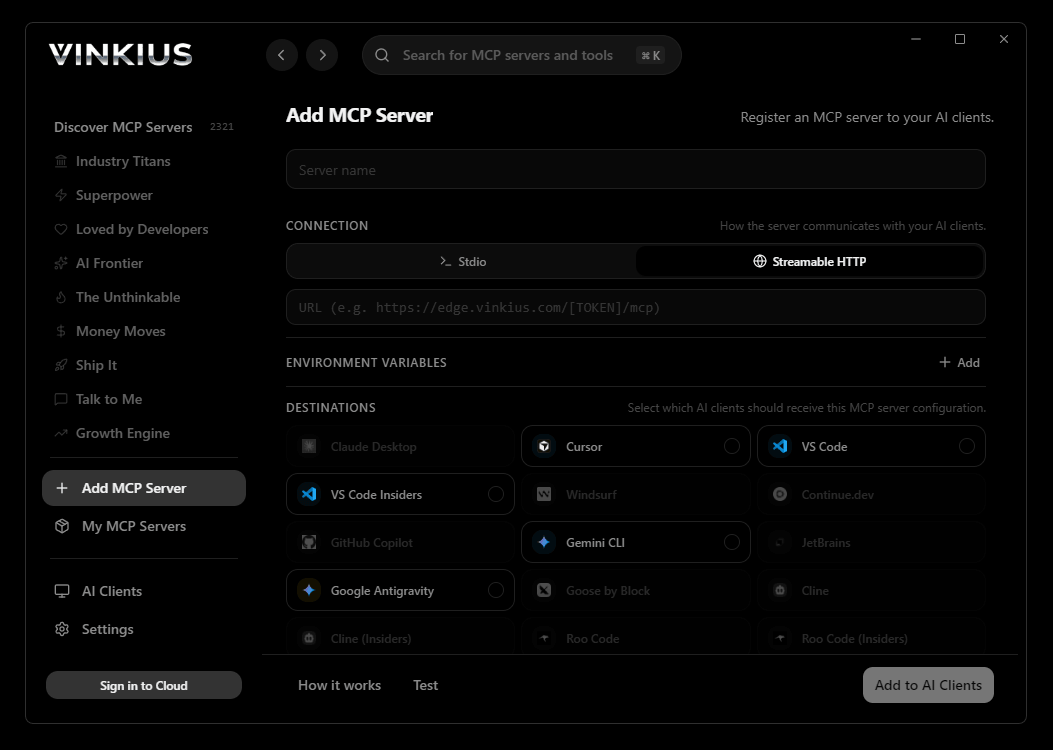

Vinkius supports streamable HTTP and SSE.

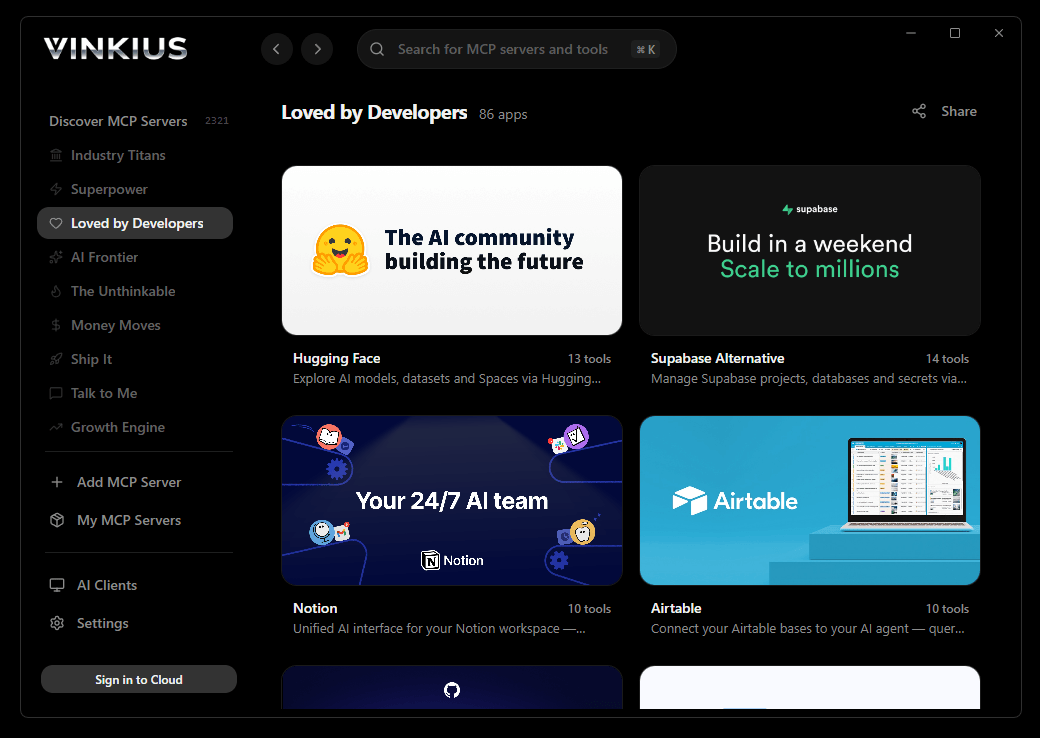

Vinkius Desktop App

The modern way to manage MCP Servers — no config files, no terminal commands. Install Groq and 2,500+ MCP Servers from a single visual interface.

    {
      "mcpServers": {
        "groq": {
          "url": "https://edge.vinkius.com/[YOUR_TOKEN_HERE]/mcp"
        }
      }
    }

* Every MCP server runs on Vinkius-managed infrastructure inside AWS: a purpose-built runtime with per-request V8 isolates, Ed25519-signed audit chains, and sub-40ms cold starts optimized for native MCP execution.

About Groq MCP Server

Connect your Groq account to any AI agent and take full control of your high-speed generative AI inference and LPU-accelerated LLM workflows through natural conversation.

Cursor's Agent mode brings Groq directly into the editor. Ask Cursor to generate code using live data from Groq and it fetches, processes, and writes in a single agentic loop. The 8 Groq tools appear alongside file editing and terminal access, creating a unified development environment grounded in real-time information.

What you can do

- LPU Chat Orchestration: Run fast text generation against Groq's hardware-accelerated endpoints using Llama 3, Mixtral, and other supported models

- Intelligent Audio Transcription: Convert audio streams into accurate text transcripts using hardware-optimized Whisper models

- Cross-Lingual Translation: Submit non-English audio files and get back translations as English text (English is the only target language)

- Structured JSON Mode: Constrain model output to valid JSON so it can feed data population and system integrations directly

- Tool & Function Calling: Supply function definitions so the model returns structured function-call JSON, letting your AI agents interact with external tools securely

- Model Discovery: List the available models and retrieve specific model IDs and versions to target for inference

- Inference Auditing: Inspect model capabilities and metadata so your AI agents use the most suitable model for the task
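As an illustration of how Structured JSON Mode output might be consumed, here is a minimal Python sketch of a client-side check. It assumes the model's reply arrives as a raw JSON string. The helper name `parse_structured_output` and the required keys are hypothetical and not part of the Groq MCP Server.

```python
import json


def parse_structured_output(raw: str, required_keys: set) -> dict:
    """Parse a JSON-mode reply and confirm the expected keys are present.

    Hypothetical helper: the server guarantees valid JSON at most,
    not that a particular set of fields was produced.
    """
    data = json.loads(raw)  # raises ValueError (JSONDecodeError) on invalid JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object at the top level")
    missing = required_keys - set(data)
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")
    return data


if __name__ == "__main__":
    reply = '{"name": "Ada", "age": 36}'
    print(parse_structured_output(reply, {"name", "age"}))
```

A check like this keeps downstream code from trusting a reply that is valid JSON but lacks the fields an integration depends on.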

The Groq MCP Server exposes 8 tools through Vinkius. Connect it to Cursor in under two minutes, with no API keys to rotate, no infrastructure to provision, and no vendor lock-in. Your configuration, your data, your control.

How to Connect Groq to Cursor via MCP

Follow these steps to integrate the Groq MCP Server with Cursor.

1. Open MCP Settings: press Cmd+Shift+P (macOS) or Ctrl+Shift+P (Windows/Linux) and search for "MCP Settings".

2. Add the server config: paste the JSON configuration above into the mcp.json file that opens.

3. Save the file: Cursor will automatically detect the new MCP server.

4. Start using Groq: open Agent mode in chat and ask "Using Groq, help me..." with all 8 tools available.
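If you would rather script the config step than paste by hand, the following Python sketch merges the Groq entry into an existing mcp.json without overwriting other servers. It assumes the file follows the `mcpServers` layout shown earlier. The function name `add_groq_server` is hypothetical, and the token is a placeholder you supply yourself.

```python
import json
from pathlib import Path


def add_groq_server(config_path: Path, token: str) -> dict:
    """Add or update the "groq" entry in an mcp.json file, keeping other servers."""
    config = {}
    if config_path.exists():
        text = config_path.read_text(encoding="utf-8").strip()
        if text:
            config = json.loads(text)
    servers = config.setdefault("mcpServers", {})
    # URL pattern taken from the configuration snippet on this page.
    servers["groq"] = {"url": f"https://edge.vinkius.com/{token}/mcp"}
    config_path.parent.mkdir(parents=True, exist_ok=True)
    config_path.write_text(json.dumps(config, indent=2) + "\n", encoding="utf-8")
    return config
```

For a global install you might call `add_groq_server(Path.home() / ".cursor" / "mcp.json", token)`. For a single project, point it at `.cursor/mcp.json` in the project root instead.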

Why Use Cursor with the Groq MCP Server

Cursor AI Code Editor provides unique advantages when paired with Groq through the Model Context Protocol.

- Agent mode turns Cursor into an autonomous coding assistant that can read files, run commands, and call MCP tools without switching context

- Cursor's Composer feature can generate entire files using real-time data fetched through MCP, with no copy-pasting from external dashboards

- MCP tools appear alongside built-in tools like file reading and terminal access, creating a unified agentic environment

- VS Code extension compatibility means your existing workflow, keybindings, and extensions all work alongside MCP tools

Groq + Cursor Use Cases

Practical scenarios where Cursor combined with the Groq MCP Server delivers measurable value.

- Code generation with live data: ask Cursor to generate a security report module using live DNS and subdomain data fetched through MCP

- Automated documentation: have Cursor query your API's tool schemas and generate TypeScript interfaces or OpenAPI specs automatically

- Infrastructure-as-code: Cursor can fetch domain configurations and generate corresponding Terraform or CloudFormation templates

- Test scaffolding: ask Cursor to pull real API responses via MCP and generate unit test fixtures from actual data

Groq MCP Tools for Cursor (8)

These 8 tools become available when you connect Groq to Cursor via MCP:

- chat_completion: Generate a chat completion with ultra-fast inference. Supports Llama, Mixtral, and Gemma models.

- create_embedding: Create text embeddings

- get_model: Get model details

- list_models: List available models

- moderate_content: Check content for safety

- structured_output: Generate structured JSON output

- transcribe_audio: Transcribe audio to text

- translate_audio: Translate audio to English text
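Under the hood, MCP clients such as Cursor discover and invoke these tools with JSON-RPC 2.0 messages (`tools/list` and `tools/call`). The Python sketch below builds those requests and checks a `tools/list` response against the 8 names above. It only constructs payloads and does not contact the server. The argument key `"model"` is a hypothetical placeholder, since the real input schema for each tool comes from the server's `tools/list` response.

```python
import itertools
import json

# Tool names as listed on this page.
EXPECTED_TOOLS = {
    "chat_completion", "create_embedding", "get_model", "list_models",
    "moderate_content", "structured_output", "transcribe_audio", "translate_audio",
}

_request_ids = itertools.count(1)


def build_request(method: str, params: dict) -> dict:
    """Wrap a method call in a JSON-RPC 2.0 envelope with a fresh id."""
    return {"jsonrpc": "2.0", "id": next(_request_ids), "method": method, "params": params}


def missing_tools(tools_list_response: dict) -> set:
    """Return expected tool names that a tools/list response did not include."""
    tools = tools_list_response.get("result", {}).get("tools", [])
    return EXPECTED_TOOLS - {tool["name"] for tool in tools}


list_request = build_request("tools/list", {})
# "model" is an assumed argument name; check the tool's inputSchema for the real one.
call_request = build_request(
    "tools/call",
    {"name": "get_model", "arguments": {"model": "mixtral-8x7b-32768"}},
)

if __name__ == "__main__":
    print(json.dumps(call_request, indent=2))
```

If `missing_tools` returns a non-empty set for a real response, the server is connected but not exposing everything this page describes.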

Example Prompts for Groq in Cursor

Ready-to-use prompts you can give your Cursor agent to start working with Groq immediately.

"Ask llama3-70b: 'Write a Python function to scrape a website.'"

"Transcribe this audio meeting: https://example.com/meeting.mp3"

"Get model info for 'mixtral-8x7b-32768'"

Troubleshooting Groq MCP Server with Cursor

Common issues when connecting Groq to Cursor through Vinkius, and how to resolve them.

Tools not appearing in Cursor

Server shows as disconnected

Groq + Cursor FAQ

Common questions about integrating Groq MCP Server with Cursor.

What is Agent mode and why does it matter for MCP?

Where does Cursor store MCP configuration?

In an mcp.json file. You can configure servers at the project level (.cursor/mcp.json in your project root) or globally (~/.cursor/mcp.json). Project-level configs take precedence.

Can Cursor use MCP tools in inline edits?

How do I verify MCP tools are loaded?
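To make the project-level versus global lookup from the FAQ concrete, here is a minimal Python sketch that computes which server entries would win. It assumes "take precedence" means a project entry with the same server name overrides the global one. Cursor's exact merge behavior is not documented here, so treat this as an approximation. The function names are hypothetical.

```python
import json
from pathlib import Path


def load_servers(path: Path) -> dict:
    """Read the mcpServers map from an mcp.json file, or {} if it is absent."""
    if not path.is_file():
        return {}
    return json.loads(path.read_text(encoding="utf-8")).get("mcpServers", {})


def effective_servers(project_root: Path, home: Path) -> dict:
    """Merge global and project configs; project entries override by server name.

    Assumption: override-by-name approximates "project-level configs take precedence".
    """
    merged = dict(load_servers(home / ".cursor" / "mcp.json"))
    merged.update(load_servers(project_root / ".cursor" / "mcp.json"))
    return merged
```

Calling `effective_servers(Path.cwd(), Path.home())` and checking for a `"groq"` key is a quick way to see which file a stale token or URL is coming from.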


Connect Groq to Cursor

Get your token, paste the configuration, and start using 8 tools in under 2 minutes. No API key management needed.