Lambda Labs (GPU Cloud) MCP Server for Windsurf

7 tools. Connect in under 2 minutes.

Windsurf brings agentic AI coding to a purpose-built IDE. Connect Lambda Labs (GPU Cloud) through Vinkius and Cascade will auto-discover every tool, so you can ask questions, generate code, and act on live data without leaving your editor.


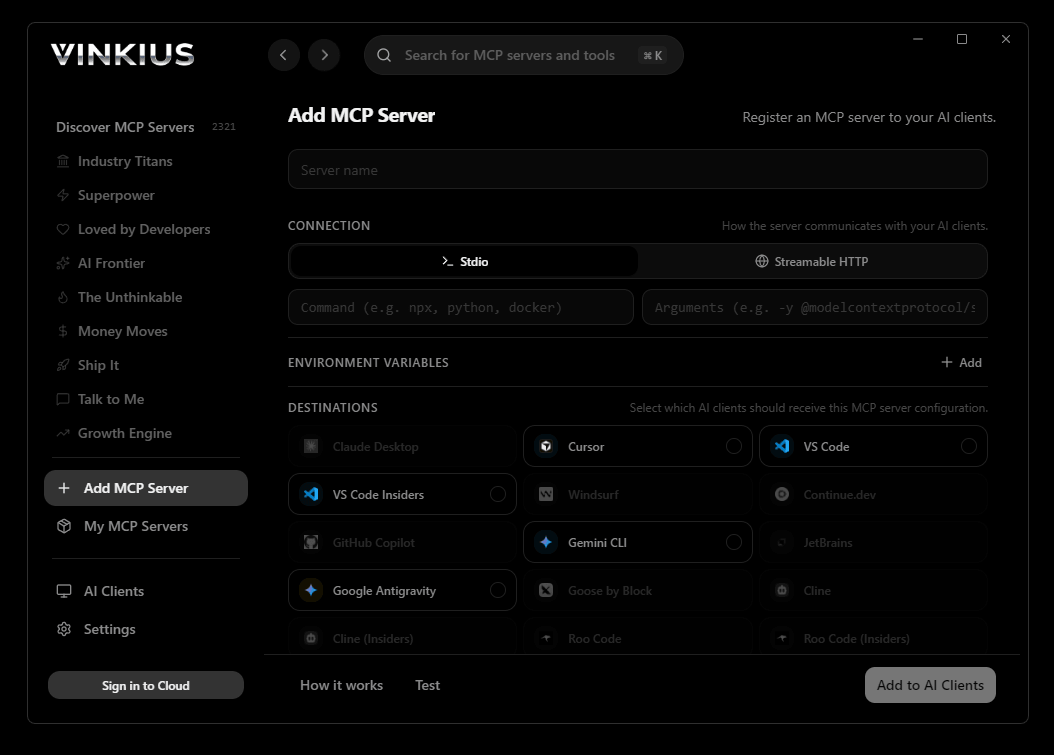

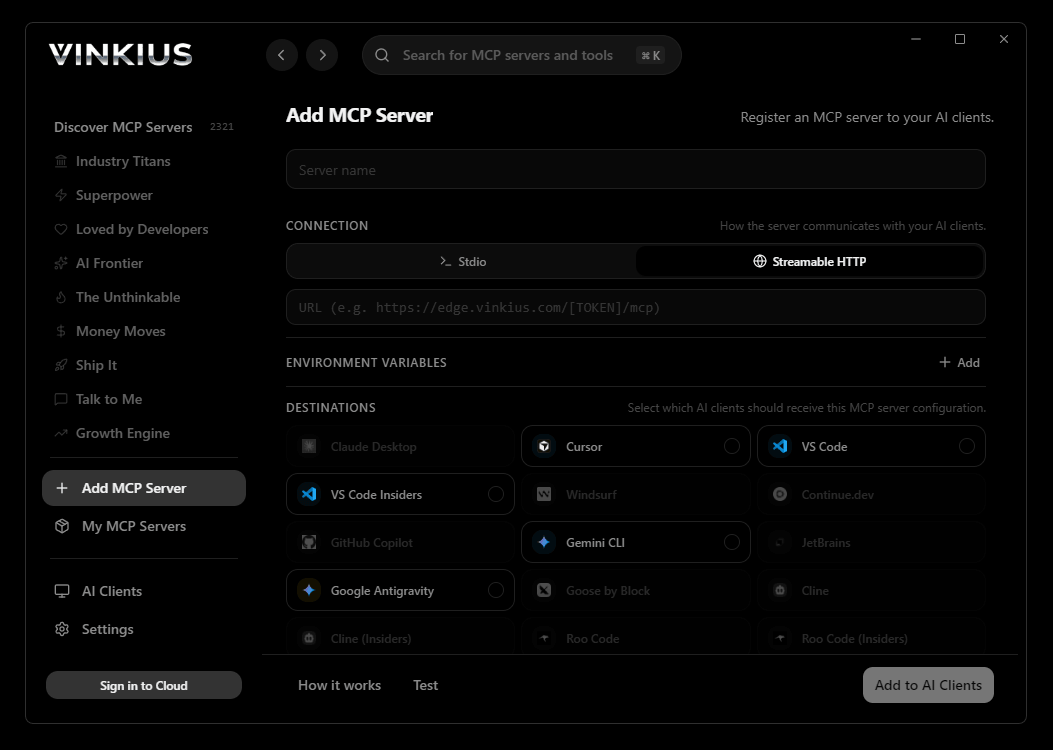

Vinkius supports streamable HTTP and SSE.

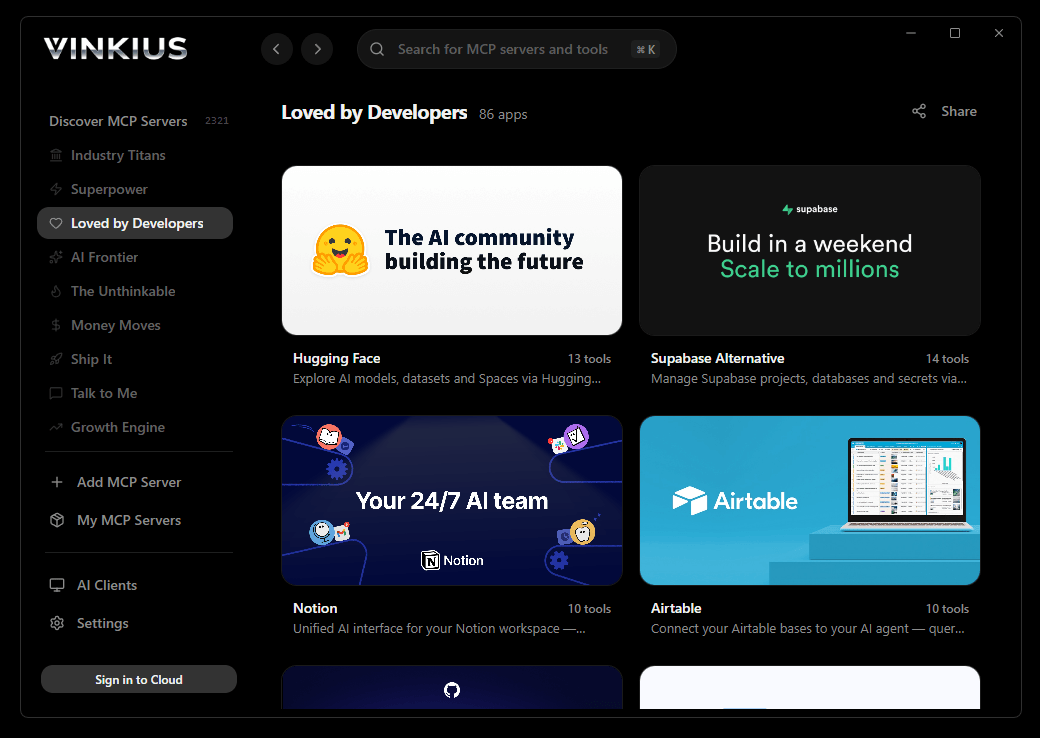

Vinkius Desktop App

The modern way to manage MCP Servers — no config files, no terminal commands. Install Lambda Labs (GPU Cloud) and 2,500+ MCP Servers from a single visual interface.

{
  "mcpServers": {
    "lambda-labs-gpu-cloud": {
      "url": "https://edge.vinkius.com/[YOUR_TOKEN_HERE]/mcp"
    }
  }
}

* Every MCP server runs on Vinkius-managed infrastructure inside AWS: a purpose-built runtime with per-request V8 isolates, Ed25519-signed audit chains, and sub-40ms cold starts optimized for native MCP execution.

About Lambda Labs (GPU Cloud) MCP Server

Connect your Lambda Labs account to any AI agent and take full control of your AI infrastructure and high-performance GPU orchestration through natural conversation.

Windsurf's Cascade agent chains multiple Lambda Labs (GPU Cloud) tool calls autonomously — query data, analyze results, and generate code in a single agentic session. Paste the Vinkius Edge URL, reload, and all 7 tools are immediately available. Real-time tool feedback appears inline, so you see API responses directly in your editor.

What you can do

- Instance Orchestration — Launch state-of-the-art GPU virtual machines (e.g., H100, A100) and manage their entire lifecycle directly from your agent

- ML Infrastructure Audit — List running instances and retrieve detailed hardware specifications, public IPv4 addresses, and Jupyter Lab access tokens securely

- Inventory & Pricing — Discover available GPU node types and pricing matrices across different regions to optimize your AI training and inference budget

- SSH Key Management — Enumerate globally managed public keys to ensure zero-trust infrastructure provisioning and secure access over port 22

- Storage Mapping — Discover persistent shared NAS volumes living in the Lambda ecosystem that can be mounted simultaneously across multiple worker nodes

- Resource Cleanup — Terminate and deallocate compute nodes instantly to stop billing and maintain a clean cloud footprint

The Lambda Labs (GPU Cloud) MCP Server exposes 7 tools through Vinkius. Connect it to Windsurf in under two minutes: no API keys to rotate, no infrastructure to provision, no vendor lock-in. Your configuration, your data, your control.

How to Connect Lambda Labs (GPU Cloud) to Windsurf via MCP

Follow these steps to integrate the Lambda Labs (GPU Cloud) MCP Server with Windsurf.

Open MCP Settings

Go to Settings → MCP Configuration or press Cmd+Shift+P and search "MCP"

Add the server

Paste the JSON configuration above into mcp_config.json

Save and reload

Windsurf will detect the new server automatically

Start using Lambda Labs (GPU Cloud)

Open Cascade and ask: "Using Lambda Labs (GPU Cloud), help me..." — 7 tools available
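The "Add the server" step can also be scripted. The following is a minimal sketch, not an official Vinkius or Windsurf tool: it merges the server entry into an existing configuration without dropping servers that are already listed. The helper name `merge_server`, the sample `other-server` entry, and the placeholder token are assumptions made for illustration. Reading and writing the actual mcp_config.json file is left out on purpose.

```python
import json
from copy import deepcopy


def merge_server(config: dict, name: str, url: str) -> dict:
    """Return a copy of config with one MCP server entry added or updated."""
    merged = deepcopy(config)  # leave the caller's dictionary untouched
    servers = merged.setdefault("mcpServers", {})
    servers[name] = {"url": url}
    return merged


# Hypothetical existing configuration with one unrelated server.
existing = {"mcpServers": {"other-server": {"url": "https://example.com/mcp"}}}

# Placeholder token: substitute the value from your own Vinkius account.
token = "YOUR_TOKEN_HERE"
updated = merge_server(
    existing,
    "lambda-labs-gpu-cloud",
    f"https://edge.vinkius.com/{token}/mcp",
)

print(json.dumps(updated, indent=2))
```

Working on a copy keeps the original configuration intact, so a failed write cannot leave you with a half-edited file in memory.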

Why Use Windsurf with the Lambda Labs (GPU Cloud) MCP Server

Windsurf provides unique advantages when paired with Lambda Labs (GPU Cloud) through the Model Context Protocol.

Windsurf's Cascade agent autonomously chains multiple tool calls in sequence, solving complex multi-step tasks without manual intervention

Purpose-built for agentic workflows — Cascade understands context across your entire codebase and integrates MCP tools natively

JSON-based configuration means zero code changes: paste a URL, reload, and all 7 tools are immediately available

Real-time tool feedback is displayed inline, so you see API responses directly in your editor without switching contexts

Lambda Labs (GPU Cloud) + Windsurf Use Cases

Practical scenarios where Windsurf combined with the Lambda Labs (GPU Cloud) MCP Server delivers measurable value.

Automated code generation: ask Cascade to fetch data from Lambda Labs (GPU Cloud) and generate models, types, or handlers based on real API responses

Live debugging: query Lambda Labs (GPU Cloud) tools mid-session to inspect production data while debugging without leaving the editor

Documentation generation: pull schema information from Lambda Labs (GPU Cloud) and have Cascade generate comprehensive API docs automatically

Rapid prototyping: combine Lambda Labs (GPU Cloud) data with Cascade's code generation to scaffold entire features in minutes

Lambda Labs (GPU Cloud) MCP Tools for Windsurf (7)

These 7 tools become available when you connect Lambda Labs (GPU Cloud) to Windsurf via MCP:

get_instance

Get exact details and SSH connection string for a specific instance

launch_instance

Provision a new Lambda GPU virtual machine (e.g., powerful H100 or A100 boxes). Injects explicit SSH keys into the runtime so it is securely accessible over port 22 immediately upon boot.

list_filesystems

Map persistent shared NAS volumes living in the Lambda ecosystem

list_instance_types

Discover available Lambda GPU instance specifications and pricing. Exposes exact catalog configurations of available GPU node types, identifying exactly which regions currently hold physical availability.

list_instances

List running GPU instances on Lambda Cloud

list_ssh_keys

Enumerate globally managed SSH public keys in Lambda

terminate_instances

Permanently terminate and destroy Lambda GPU instances. Extremely destructive; stops billing instantly. Any ephemeral drives attached will be vaporized immediately without backup.
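Under the Model Context Protocol, a client invokes a tool by sending a JSON-RPC 2.0 request with the method `tools/call`. Because `terminate_instances` is destructive, it can help to put a confirmation step in front of it when scripting against the server. The sketch below shows one way to do that. The helper `build_tool_call`, the `confirmed` flag, and the `instance_ids` argument name are assumptions for illustration; the real input schema is whatever the server advertises.

```python
import json

# Tools from the list above that should not run without explicit confirmation.
DESTRUCTIVE_TOOLS = {"terminate_instances"}


def build_tool_call(request_id: int, tool: str, arguments: dict,
                    confirmed: bool = False) -> dict:
    """Build an MCP tools/call request, refusing unconfirmed destructive calls."""
    if tool in DESTRUCTIVE_TOOLS and not confirmed:
        raise PermissionError(f"{tool} is destructive; pass confirmed=True to proceed")
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }


# A read-only call goes through without confirmation.
safe = build_tool_call(1, "list_instances", {})
print(json.dumps(safe))

# A destructive call is blocked unless the caller opts in explicitly.
blocked = ""
try:
    # "instance_ids" and "example-id" are hypothetical placeholders.
    build_tool_call(2, "terminate_instances", {"instance_ids": ["example-id"]})
except PermissionError as err:
    blocked = str(err)
    print(blocked)
```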

Example Prompts for Lambda Labs (GPU Cloud) in Windsurf

Ready-to-use prompts you can give your Windsurf agent to start working with Lambda Labs (GPU Cloud) immediately.

"List all my running GPU instances in Lambda Cloud"

"Launch a 1x H100 instance in us-east-1 with my 'default-key' SSH key"

"What are the available instance types and their current pricing?"
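To make the second prompt concrete, here is a rough sketch of the structured arguments an agent might derive from it before calling `launch_instance`. The field names (`instance_type_name`, `region_name`, `ssh_key_names`) and the instance type identifier `gpu_1x_h100` are assumptions for illustration only; the tool's actual input schema may differ.

```python
from dataclasses import dataclass, asdict, field


@dataclass(frozen=True)
class LaunchRequest:
    """Hypothetical argument set for the launch_instance tool."""
    instance_type_name: str
    region_name: str
    ssh_key_names: list = field(default_factory=list)

    def validate(self) -> None:
        # Reject obviously incomplete requests before anything is provisioned.
        if not self.instance_type_name or not self.region_name:
            raise ValueError("instance type and region are both required")
        if not self.ssh_key_names:
            raise ValueError("at least one SSH key name is required")


# Values taken from the prompt: 1x H100, us-east-1, 'default-key'.
request = LaunchRequest("gpu_1x_h100", "us-east-1", ["default-key"])
request.validate()

arguments = asdict(request)
print(arguments)
```

Validating before the call matters here because a launched instance starts billing immediately, so catching a missing SSH key or region up front is cheaper than cleaning up afterwards.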

Troubleshooting Lambda Labs (GPU Cloud) MCP Server with Windsurf

Common issues when connecting Lambda Labs (GPU Cloud) to Windsurf through Vinkius, and how to resolve them.

Server not connecting

Lambda Labs (GPU Cloud) + Windsurf FAQ

Common questions about integrating Lambda Labs (GPU Cloud) MCP Server with Windsurf.

How does Windsurf discover MCP tools?

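Windsurf reads the mcp_config.json file on startup and connects to each configured server via Streamable HTTP. Tools are listed in the MCP panel and available to Cascade automatically.

Can Cascade chain multiple MCP tool calls?

Yes. Cascade chains multiple tool calls in sequence within a single agentic session, without manual intervention.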

Does Windsurf support multiple MCP servers?

Yes. List each server in mcp_config.json. Each server's tools appear in the MCP panel, and Cascade can use tools from different servers in a single flow.

Connect Lambda Labs (GPU Cloud) with your favorite client

Step-by-step setup guides for every MCP-compatible client and framework:

Anthropic's native desktop app for Claude with built-in MCP support.

AI-first code editor with integrated LLM-powered coding assistance.

GitHub Copilot in VS Code with Agent mode and MCP support.

Purpose-built IDE for agentic AI coding workflows.

Autonomous AI coding agent that runs inside VS Code.

Anthropic's agentic CLI for terminal-first development.

Python SDK for building production-grade OpenAI agent workflows.

Google's framework for building production AI agents.

Type-safe agent development for Python with first-class MCP support.

TypeScript toolkit for building AI-powered web applications.

TypeScript-native agent framework for modern web stacks.

Python framework for orchestrating collaborative AI agent crews.

Leading Python framework for composable LLM applications.

Data-aware AI agent framework for structured and unstructured sources.

Microsoft's framework for multi-agent collaborative conversations.

Connect Lambda Labs (GPU Cloud) to Windsurf

Get your token, paste the configuration, and start using 7 tools in under 2 minutes. No API key management needed.