Replicate Alternative MCP Server for Vercel AI SDK

12 tools. Connect in under 2 minutes.

The Vercel AI SDK is the TypeScript toolkit for building AI-powered applications. Connect Replicate Alternative through Vinkius and every tool is available as a typed function, ready for React Server Components, API routes, or any Node.js backend.

Vinkius supports streamable HTTP and SSE.

    import { createMCPClient } from "@ai-sdk/mcp";
    import { generateText, stepCountIs } from "ai";
    import { openai } from "@ai-sdk/openai";

    async function main() {
      const mcpClient = await createMCPClient({
        transport: {
          type: "http",
          // Your Vinkius token; get it at cloud.vinkius.com
          url: "https://edge.vinkius.com/[YOUR_TOKEN_HERE]/mcp",
        },
      });

      try {
        // Discover the tools exposed by the MCP server
        const tools = await mcpClient.tools();

        const { text } = await generateText({
          model: openai("gpt-4o"),
          tools,
          // Allow follow-up steps so the model can answer after a tool call
          stopWhen: stepCountIs(5),
          prompt: "Using Replicate Alternative, list all available capabilities.",
        });

        console.log(text);
      } finally {
        // Always release the MCP connection, even if generation fails
        await mcpClient.close();
      }
    }

    main().catch(console.error);
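Rather than pasting the token into source, the endpoint URL could be assembled at startup. The sketch below assumes the token is a single path segment, as in the URL shown in the script above. The helper name `buildVinkiusUrl` and the `VINKIUS_TOKEN` environment variable are illustrative and not part of any Vinkius API.

```typescript
// Hypothetical helper: builds the MCP endpoint URL used in the script above.
// Assumes the token occupies exactly one path segment.
const PLACEHOLDER = "[YOUR_TOKEN_HERE]";

function buildVinkiusUrl(token: string): string {
  const trimmed = token.trim();
  if (trimmed === "" || trimmed === PLACEHOLDER) {
    throw new Error("Set a real Vinkius token before connecting.");
  }
  // Encode defensively so an unexpected character cannot alter the path.
  return `https://edge.vinkius.com/${encodeURIComponent(trimmed)}/mcp`;
}

// Example wiring (VINKIUS_TOKEN is an assumed variable name):
// const url = buildVinkiusUrl(process.env.VINKIUS_TOKEN ?? "");
```

Failing fast on the placeholder keeps a forgotten substitution from surfacing later as an opaque connection error.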

* Every MCP server runs on Vinkius-managed infrastructure inside AWS: a purpose-built runtime with per-request V8 isolates, Ed25519-signed audit chains, and sub-40ms cold starts optimized for native MCP execution.

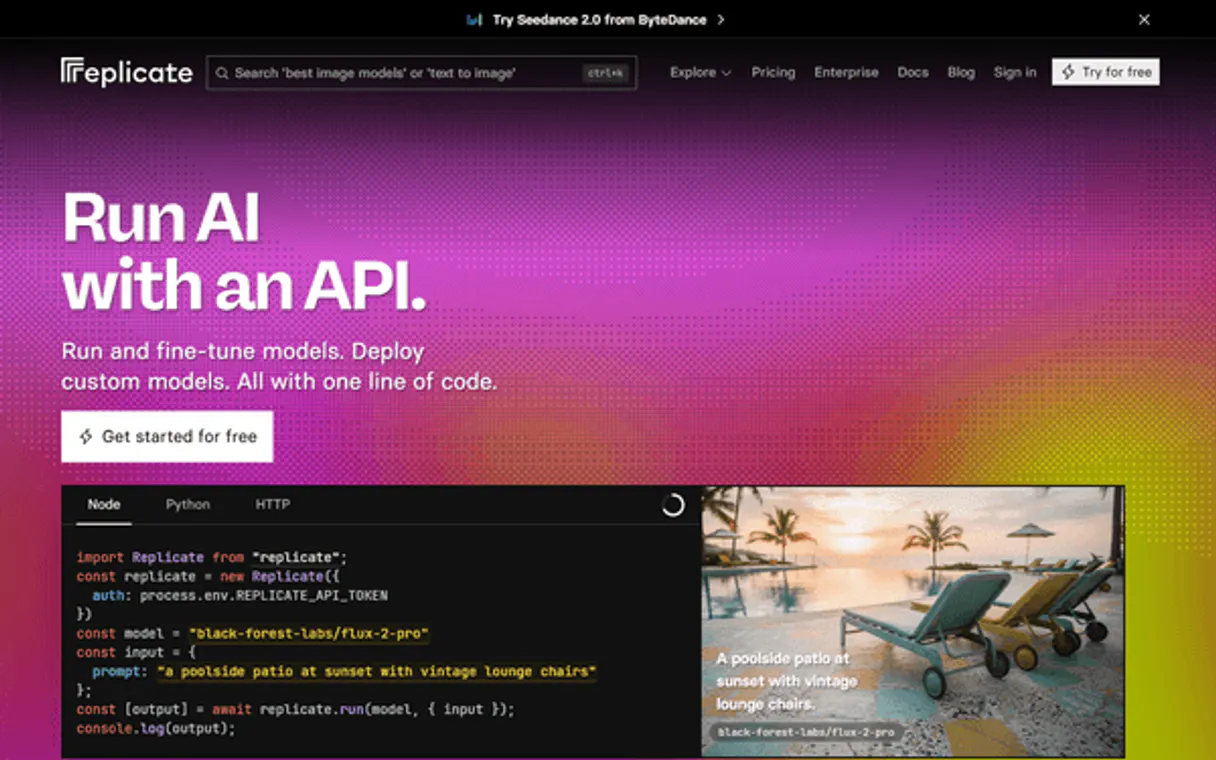

About Replicate Alternative MCP Server

Connect your Replicate account to any AI agent and run thousands of open-source ML models through natural conversation.

The Vercel AI SDK gives every Replicate Alternative tool full TypeScript type inference, IDE autocomplete, and compile-time error checking. Connect 12 tools through Vinkius and stream results progressively to React, Svelte, or Vue components. It works on Edge Functions, Cloudflare Workers, and any Node.js runtime.

What you can do

- Model Discovery — Browse, search and inspect thousands of ML models with their descriptions, run counts and hardware requirements

- Predictions — Run models by creating predictions and tracking their status (starting, processing, succeeded, failed)

- Collections — Explore curated collections of models by category (text-to-image, LLMs, audio, video)

- Hardware Options — View available GPU types and pricing for model inference

- Account Info — Check your account details and usage

The Replicate Alternative MCP Server exposes 12 tools through Vinkius. Connect it to Vercel AI SDK in under two minutes, with no API keys to rotate, no infrastructure to provision, and no vendor lock-in. Your configuration, your data, your control.

How to Connect Replicate Alternative to Vercel AI SDK via MCP

Follow these steps to integrate the Replicate Alternative MCP Server with Vercel AI SDK.

Install dependencies

Run npm install @ai-sdk/mcp ai @ai-sdk/openai

Replace the token

Replace [YOUR_TOKEN_HERE] with your Vinkius token

Run the script

Save to agent.ts and run with npx tsx agent.ts

Explore tools

The SDK discovers 12 tools from Replicate Alternative and passes them to the LLM
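To confirm what was discovered before handing the tools to the model, the returned tool set can be inspected. This sketch assumes `mcpClient.tools()` resolves to a plain object keyed by tool name whose values may carry a `description` string. `summarizeTools` is an illustrative helper, not an SDK function, and the sample data stands in for a live connection.

```typescript
// Minimal structural type: only the field this sketch reads.
type ToolLike = { description?: string };

// Returns one "name: description" line per tool, sorted by name.
function summarizeTools(tools: Record<string, ToolLike>): string[] {
  return Object.keys(tools)
    .sort()
    .map((name) => `${name}: ${tools[name]?.description ?? "(no description)"}`);
}

// Stand-in data; in the script you would pass the result of mcpClient.tools().
const sampleTools: Record<string, ToolLike> = {
  get_model: { description: "Get details for a specific Replicate model" },
  cancel_prediction: { description: "Cancel a running prediction" },
};

const summaryLines = summarizeTools(sampleTools);
console.log(summaryLines.join("\n"));
```

Logging the names once at startup is a cheap way to notice a wrong token or an unexpected tool count before any model call is made.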

Why Use Vercel AI SDK with the Replicate Alternative MCP Server

Vercel AI SDK provides unique advantages when paired with Replicate Alternative through the Model Context Protocol.

TypeScript-first: every MCP tool gets full type inference, IDE autocomplete, and compile-time error checking out of the box

Framework-agnostic: the core works with Next.js, Nuxt, SvelteKit, or any Node.js runtime, so the same Replicate Alternative integration runs everywhere

Built-in streaming UI primitives let you display Replicate Alternative tool results progressively in React, Svelte, or Vue components

Edge-compatible: the AI SDK runs on Vercel Edge Functions, Cloudflare Workers, and other edge runtimes for minimal latency
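The streaming point can be illustrated without a UI framework. The sketch assumes a source of text chunks exposed as an `AsyncIterable<string>`, which is the shape of the `textStream` property on the result of the AI SDK's `streamText`. `renderProgressively` and `fakeStream` are illustrative stand-ins so the example runs offline.

```typescript
// Consumes text chunks as they arrive and reports each partial result.
async function renderProgressively(
  chunks: AsyncIterable<string>,
  onUpdate: (partial: string) => void,
): Promise<string> {
  let text = "";
  for await (const chunk of chunks) {
    text += chunk;
    onUpdate(text); // e.g. update component state here
  }
  return text;
}

// Stand-in for a model stream (hypothetical content).
async function* fakeStream(): AsyncGenerator<string> {
  yield "Found ";
  yield "3 ";
  yield "models.";
}

// With the AI SDK this would look roughly like:
//   const result = streamText({ model, tools, prompt });
//   await renderProgressively(result.textStream, setText);
```

Passing the accumulated text, rather than the individual chunk, to the callback keeps the UI update idempotent if a render is skipped.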

Replicate Alternative + Vercel AI SDK Use Cases

Practical scenarios where Vercel AI SDK combined with the Replicate Alternative MCP Server delivers measurable value.

AI-powered web apps: build dashboards that query Replicate Alternative in real-time and stream results to the UI with zero loading states

API backends: create serverless endpoints that orchestrate Replicate Alternative tools and return structured JSON responses to any frontend

Chatbots with tool use: embed Replicate Alternative capabilities into conversational interfaces with streaming responses and tool call visibility

Internal tools: build admin panels where team members interact with Replicate Alternative through natural language queries

Replicate Alternative MCP Tools for Vercel AI SDK (12)

These 12 tools become available when you connect Replicate Alternative to Vercel AI SDK via MCP:

cancel_prediction

Cancel a running prediction. Provide the prediction ID. The prediction status will change to "canceled".

create_prediction

Run a model prediction on Replicate. Requires the model slug in "owner/name" format and an input object matching the model's schema. Optionally specify a version ID and webhook URL. Returns the prediction object with its ID, status (starting, processing, succeeded, failed, canceled) and output. Use get_prediction to check status and retrieve results.
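Because create_prediction expects a slug in "owner/name" form, it can help to validate arguments before a call is issued. The sketch below is assumption-heavy: the field names `model`, `input`, `version`, and `webhook` are guesses at the tool's argument shape (the real schema is whatever the server reports), and treating the 64-character version ID as lowercase hex is also an assumption.

```typescript
// Hypothetical argument shape; check the schema reported by the server.
interface CreatePredictionArgs {
  model: string; // expected as "owner/name"
  input: Record<string, unknown>;
  version?: string;
  webhook?: string;
}

const SLUG_PATTERN = /^[^\/\s]+\/[^\/\s]+$/; // exactly one slash, no whitespace
const VERSION_PATTERN = /^[0-9a-f]{64}$/; // assumed: 64 lowercase hex characters

// Returns a list of human-readable problems; an empty list means "looks fine".
function validateCreatePrediction(args: CreatePredictionArgs): string[] {
  const problems: string[] = [];
  if (!SLUG_PATTERN.test(args.model)) {
    problems.push('model must look like "owner/name"');
  }
  if (args.version !== undefined && !VERSION_PATTERN.test(args.version)) {
    problems.push("version must be a 64-character hash");
  }
  if (args.webhook !== undefined && !/^https?:\/\//.test(args.webhook)) {
    problems.push("webhook must be an http(s) URL");
  }
  return problems;
}
```

Returning a list instead of throwing on the first problem lets a caller report every issue in one pass.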

get_account

Get the authenticated Replicate account info. Returns account type, username and usage info. Use this to verify your API token is working correctly.

get_collection

Get details for a specific model collection. Provide the collection slug (e.g. "text-to-image", "large-language-models").

get_model

Get details for a specific Replicate model. Provide the model slug in "owner/name" format (e.g. "stability-ai/sdxl" or "meta/meta-llama-3-70b-instruct").

get_model_versions

Get all versions of a Replicate model. Each version includes its ID (64-char hash), creation date, input/output schema and cog version. Use this to find the correct version ID when creating predictions for models that require a specific version.

get_prediction

Get the status and result of a prediction. Returns the prediction ID, status (starting, processing, succeeded, failed, canceled), input, output URLs, creation time and logs. Use the prediction ID returned from create_prediction.
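An application that wants to wait for a result could poll get_prediction until the status is terminal. The status values below come from the tool descriptions; everything else (the `fetchPrediction` callback, the interval, and the attempt limit) is a placeholder for however your application actually invokes the tool.

```typescript
type PredictionStatus = "starting" | "processing" | "succeeded" | "failed" | "canceled";

interface Prediction {
  id: string;
  status: PredictionStatus;
  output?: unknown;
}

// Statuses after which no further change is expected.
const TERMINAL_STATUSES: ReadonlySet<PredictionStatus> = new Set<PredictionStatus>([
  "succeeded",
  "failed",
  "canceled",
]);

const sleep = (ms: number) => new Promise<void>((resolve) => setTimeout(resolve, ms));

// Polls until the prediction reaches a terminal status or attempts run out.
async function waitForPrediction(
  id: string,
  fetchPrediction: (id: string) => Promise<Prediction>, // hypothetical wrapper around get_prediction
  { intervalMs = 1000, maxAttempts = 60 } = {},
): Promise<Prediction> {
  for (let attempt = 0; attempt < maxAttempts; attempt++) {
    const prediction = await fetchPrediction(id);
    if (TERMINAL_STATUSES.has(prediction.status)) return prediction;
    await sleep(intervalMs);
  }
  throw new Error(`Prediction ${id} did not finish after ${maxAttempts} attempts`);
}
```

A fixed interval keeps the sketch short; a real client might back off between attempts or rely on the webhook URL that create_prediction accepts.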

list_collections

List model collections on Replicate. Collections group related models by category (e.g. "text-to-image", "large-language-models", "audio-to-audio", "image-to-video"). Each collection includes its slug, name, description and featured models.

list_hardware

List available GPU hardware on Replicate. Each hardware option includes its SKU name, pricing and specifications. Useful for choosing the right GPU for your prediction workload.

list_models

List available ML models on Replicate. Each model includes its name, owner, description, run count, hardware requirements and cover image URL. Use this to discover available models for running predictions.

list_predictions

List recent predictions on Replicate. Each prediction includes its ID, model, status, creation time and output URLs. Useful for tracking prediction history and monitoring model usage.

search_models

Search for models on Replicate by query. Returns models with their name, owner, description, run count and hardware. Useful for finding specific types of models (e.g. "text-to-image", "llm", "music-generation").

Example Prompts for Replicate Alternative in Vercel AI SDK

Ready-to-use prompts you can give your Vercel AI SDK agent to start working with Replicate Alternative immediately.

"List all text-to-image collections on Replicate."

"Search for LLM models on Replicate."

"Create a prediction using stability-ai/sdxl with prompt 'a sunset over mountains, photorealistic'."

Troubleshooting Replicate Alternative MCP Server with Vercel AI SDK

Common issues when connecting Replicate Alternative to Vercel AI SDK through Vinkius, and how to resolve them.

createMCPClient is not a function

Install the package: npm install @ai-sdk/mcp

Replicate Alternative + Vercel AI SDK FAQ

Common questions about integrating Replicate Alternative MCP Server with Vercel AI SDK.

How does the Vercel AI SDK connect to MCP servers?

Import createMCPClient from @ai-sdk/mcp and pass the server URL. The SDK discovers all tools and provides typed TypeScript interfaces for each one.

Can I use MCP tools in Edge Functions?

Yes. The AI SDK runs on Vercel Edge Functions, Cloudflare Workers, and other edge runtimes.

Does it support streaming tool results?

Yes. The AI SDK provides useChat and streamText, which handle tool calls and display results progressively in the UI.

Connect Replicate Alternative with your favorite client

Step-by-step setup guides for every MCP-compatible client and framework:

Anthropic's native desktop app for Claude with built-in MCP support.

AI-first code editor with integrated LLM-powered coding assistance.

GitHub Copilot in VS Code with Agent mode and MCP support.

Purpose-built IDE for agentic AI coding workflows.

Autonomous AI coding agent that runs inside VS Code.

Anthropic's agentic CLI for terminal-first development.

Python SDK for building production-grade OpenAI agent workflows.

Google's framework for building production AI agents.

Type-safe agent development for Python with first-class MCP support.

TypeScript toolkit for building AI-powered web applications.

TypeScript-native agent framework for modern web stacks.

Python framework for orchestrating collaborative AI agent crews.

Leading Python framework for composable LLM applications.

Data-aware AI agent framework for structured and unstructured sources.

Microsoft's framework for multi-agent collaborative conversations.

Connect Replicate Alternative to Vercel AI SDK

Get your token, paste the configuration, and start using 12 tools in under 2 minutes. No API key management needed.