Helicone (LLM Observability) MCP Server for Claude Desktop

10 tools. Connect in under 2 minutes.

Claude Desktop is Anthropic's native application for interacting with Claude AI models on macOS and Windows. It was the first consumer application to ship with built-in MCP support, making it the reference implementation for the Model Context Protocol standard.

ASK AI ABOUT THIS MCP SERVER

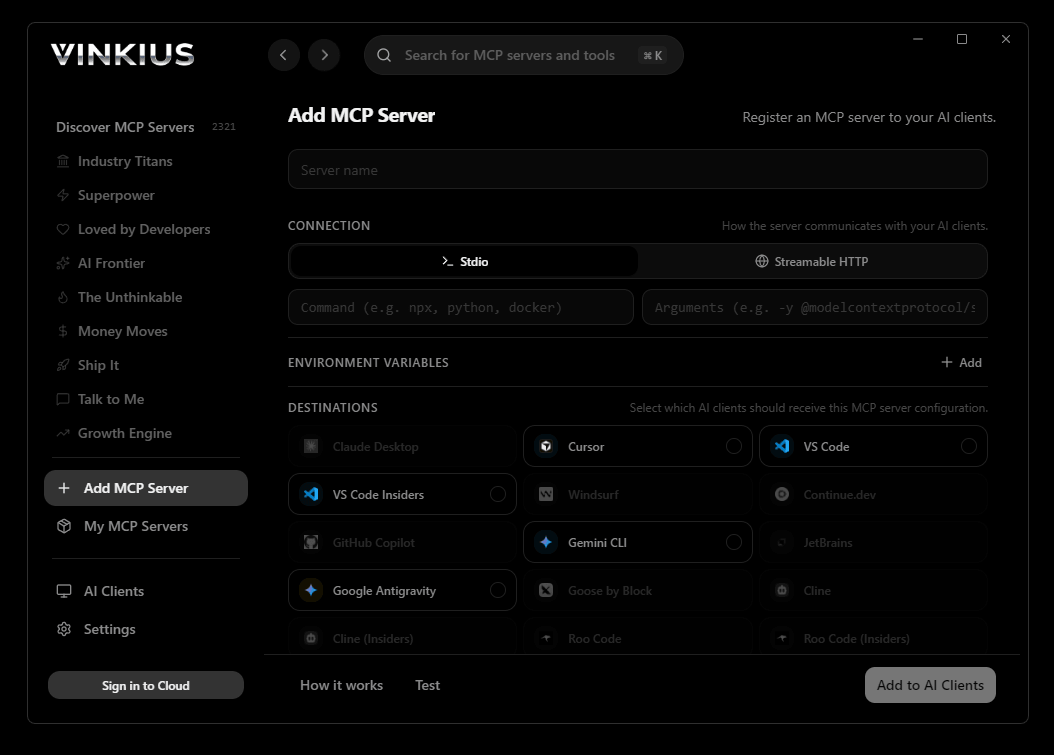

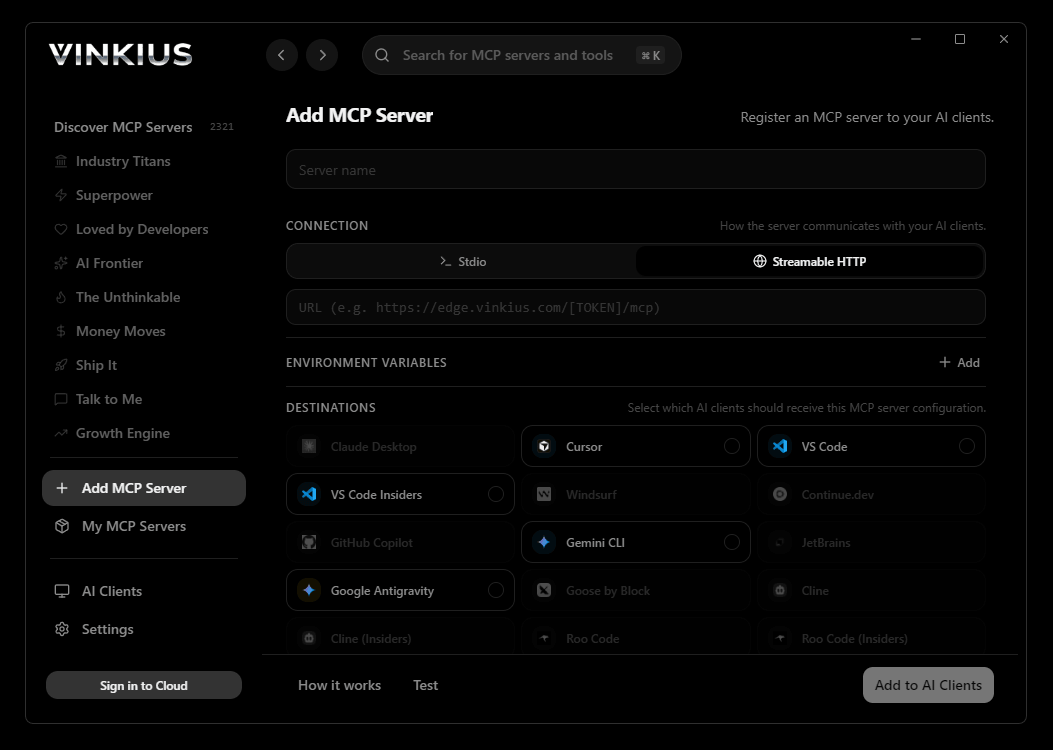

Vinkius supports streamable HTTP and SSE.

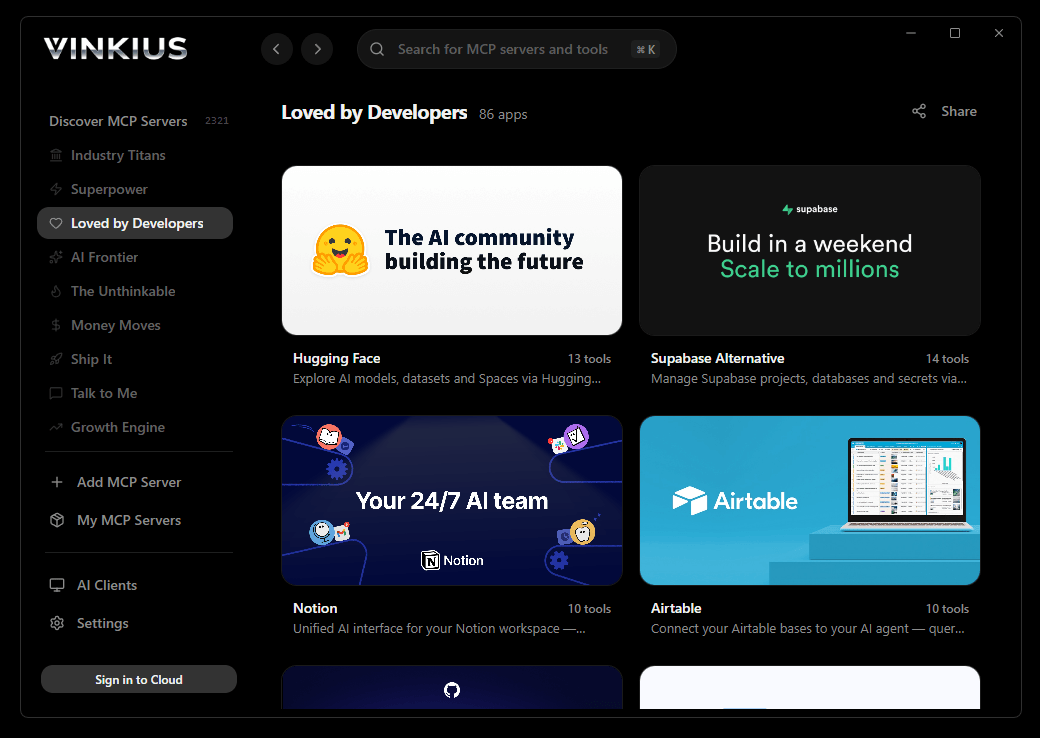

Vinkius Desktop App

The modern way to manage MCP Servers — no config files, no terminal commands. Install Helicone (LLM Observability) and 2,500+ MCP Servers from a single visual interface.

Get your Vinkius token at cloud.vinkius.com and use it in place of [YOUR_TOKEN_HERE]:

    {
      "mcpServers": {
        "helicone-llm-observability": {
          "url": "https://edge.vinkius.com/[YOUR_TOKEN_HERE]/mcp"
        }
      }
    }

* Every MCP server runs on Vinkius-managed infrastructure inside AWS: a purpose-built runtime with per-request V8 isolates, Ed25519 signed audit chains, and sub-40ms cold starts optimized for native MCP execution.

About Helicone (LLM Observability) MCP Server

Connect your Helicone account to any AI agent and take full control of your LLM observability and gateway monitoring through natural conversation.

Claude Desktop is the definitive way to connect Helicone (LLM Observability) to your AI workflow. Add the Vinkius Edge URL to your config, restart the app, and Claude immediately exposes all 10 tools in the chat interface. Ask a question, Claude calls the right tool, and you see the answer. Zero code, zero context switching.

What you can do

- Request Monitoring — Query deep proxy logs to inspect exact prompts and outputs sent to LLM APIs directly from your agent

- Cost Analysis — Break down spending by model, user, or custom metadata properties to monitor your AI burn rate in real-time

- Latency Optimization — Measure Time To First Token (TTFT) and pinpoint slowness caused by specific upstream LLM providers

- Prompt Management — Access managed prompt versions and track iterative changes in your AI instruction logic natively

- Session Tracing — Isolate and analyze multi-turn graph traces connecting consecutive LLM calls to debug complex agentic workflows

- User Insights — Track precise LLM interactions based on Helicone tags and identify your most active human clients

- Feedback & RLHF — Extract user critiques (Thumbs Up/Down) and log offline Human-in-the-Loop verdicts to improve model grounding
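To make the cost breakdown idea concrete, here is a minimal Python sketch of grouping spend by model or by user. The record fields (`model`, `user`, `cost_usd`) and the sample values are hypothetical and chosen only for illustration; they are not Helicone's actual response schema.

```python
from collections import defaultdict


def cost_by(records, key):
    """Sum the cost_usd field of each record, grouped by the given key."""
    totals = defaultdict(float)
    for record in records:
        # Records missing the grouping key are collected under "unknown".
        totals[record.get(key, "unknown")] += record["cost_usd"]
    return dict(totals)


# Hypothetical request records; field names are illustrative only.
records = [
    {"model": "gpt-4o", "user": "alice", "cost_usd": 0.50},
    {"model": "gpt-4o", "user": "bob", "cost_usd": 0.25},
    {"model": "claude-sonnet", "user": "alice", "cost_usd": 0.25},
]

by_model = cost_by(records, "model")
by_user = cost_by(records, "user")
print(by_model)
print(by_user)
```

The same grouping function works for any custom metadata property, as long as each record carries that property as a field.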

The Helicone (LLM Observability) MCP Server exposes 10 tools through Vinkius. Connect it to Claude Desktop in under two minutes: no API keys to rotate, no infrastructure to provision, no vendor lock-in. Your configuration, your data, your control.

How to Connect Helicone (LLM Observability) to Claude Desktop via MCP

Follow these steps to integrate the Helicone (LLM Observability) MCP Server with Claude Desktop.

Open Claude Desktop Settings

Go to Settings → Developer → Edit Config to open claude_desktop_config.json

Add the MCP Server

Paste the configuration above into the mcpServers section

Restart Claude Desktop

Close and reopen Claude Desktop to load the new server

Start using Helicone (LLM Observability)

Look for the 🔌 icon in the chat. Your 10 tools are now available.
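If the config file already lists other servers, the new entry should be merged in rather than pasted over them. The Python sketch below shows one way to do that on the config text. The helper name `add_mcp_server` and the `other-server` entry are hypothetical; the server key and URL shape are taken from the configuration shown earlier.

```python
import json


def add_mcp_server(config_text, name, url):
    """Return config JSON text with `name` added under "mcpServers".

    Existing servers and any other top-level settings are preserved.
    """
    config = json.loads(config_text) if config_text.strip() else {}
    servers = config.setdefault("mcpServers", {})
    servers[name] = {"url": url}
    return json.dumps(config, indent=2)


# Hypothetical existing config with one unrelated server already present.
existing = '{"mcpServers": {"other-server": {"url": "https://example.com/mcp"}}}'

updated = add_mcp_server(
    existing,
    "helicone-llm-observability",
    "https://edge.vinkius.com/[YOUR_TOKEN_HERE]/mcp",  # replace with your token
)
print(updated)
```

Working on the text rather than the file keeps the sketch side-effect free; writing the result back to claude_desktop_config.json is left to the reader.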

Why Use Claude Desktop with the Helicone (LLM Observability) MCP Server

Claude Desktop by Anthropic provides unique advantages when paired with Helicone (LLM Observability) through the Model Context Protocol.

Claude Desktop is the reference MCP client: it was designed alongside the protocol itself, ensuring the most complete and stable MCP implementation available

Zero-code configuration: add a server URL to a JSON file and Claude instantly discovers and exposes all available tools in the chat interface

Claude's extended thinking capability lets it reason through multi-step tool usage, chaining multiple API calls to answer complex questions

Enterprise-grade security with local config storage: your tokens never leave your machine, and connections go directly to the Vinkius Edge network

Helicone (LLM Observability) + Claude Desktop Use Cases

Practical scenarios where Claude Desktop combined with the Helicone (LLM Observability) MCP Server delivers measurable value.

Interactive data exploration: ask Claude to query request logs, break down costs by model or user, and cross-reference results in a single conversation

Ad-hoc latency investigations: ask for the slowest recent requests and let Claude compare Time To First Token across providers and flag anomalies, all through natural language

Executive briefings: generate LLM spend and usage reports by asking Claude to compile findings into a formatted summary

Learning and training: new team members can explore API capabilities conversationally without needing to read documentation

Helicone (LLM Observability) MCP Tools for Claude Desktop (10)

These 10 tools become available when you connect Helicone (LLM Observability) to Claude Desktop via MCP:

get_prompt_versions

List the versions of a managed prompt

list_properties

List the custom metadata properties attached to your requests

log_feedback

Log a feedback verdict for a request

query_costs

Break down spending by model, user, or custom property

query_feedback

Query user feedback (Thumbs Up/Down) recorded for requests

query_latency

Query latency metrics such as Time To First Token (TTFT)

query_prompts

Query managed prompts

query_requests

Query proxy logs for the prompts and outputs of individual requests

query_sessions

Query session traces that connect consecutive LLM calls

query_users

Query LLM activity by user
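Under the hood, an MCP client discovers these tools with a `tools/list` request and invokes one with `tools/call`, both as JSON-RPC 2.0 messages. The Python sketch below builds the two messages and reads tool names from a response. The sample response is hand-written and abbreviated, and the `query_costs` argument names are hypothetical; the real argument schema is whatever the server reports in each tool's `inputSchema`.

```python
import json


def tools_list_request(request_id=1):
    """Build a JSON-RPC 2.0 request for the MCP tools/list method."""
    return {"jsonrpc": "2.0", "id": request_id, "method": "tools/list"}


def tools_call_request(name, arguments, request_id=2):
    """Build a JSON-RPC 2.0 request for the MCP tools/call method."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


def tool_names(response):
    """Extract the sorted tool names from a tools/list response."""
    return sorted(tool["name"] for tool in response["result"]["tools"])


# Hand-written, abbreviated response; real entries also carry a
# description and an inputSchema for each tool.
sample_response = {
    "jsonrpc": "2.0",
    "id": 1,
    "result": {
        "tools": [
            {"name": "query_costs"},
            {"name": "query_requests"},
            {"name": "get_prompt_versions"},
        ]
    },
}

names = tool_names(sample_response)
# Hypothetical arguments; check the tool's inputSchema for the real ones.
call = tools_call_request("query_costs", {"model": "gpt-4o"})

print(json.dumps(tools_list_request()))
print(names)
print(json.dumps(call, indent=2))
```

In Claude Desktop you never write these messages by hand; the sketch only shows what the client sends on your behalf.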

Example Prompts for Helicone (LLM Observability) in Claude Desktop

Ready-to-use prompts you can give your Claude Desktop agent to start working with Helicone (LLM Observability) immediately.

"How much did we spend on GPT-4o yesterday?"

"Show me the 10 slowest requests from the last hour"

"List all versions for the 'customer-service-bot' prompt"

Troubleshooting Helicone (LLM Observability) MCP Server with Claude Desktop

Common issues when connecting Helicone (LLM Observability) to Claude Desktop through Vinkius, and how to resolve them.

Server not appearing after restart

Config file location: ~/Library/Application Support/Claude/claude_desktop_config.json (macOS) or %APPDATA%\Claude\ (Windows).

Authentication error

Tools not showing in chat
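Several of these issues can trace back to the config file itself. As a rough aid, the Python sketch below checks a config's text for a few common mistakes: invalid JSON (for example, a stray `//` comment, which strict JSON parsers reject), a missing `mcpServers` object, a missing server entry, or a token placeholder that was never replaced. The function name `diagnose_config` and the particular checks are assumptions for illustration, not part of Claude Desktop or Vinkius.

```python
import json

PLACEHOLDER = "[YOUR_TOKEN_HERE]"


def diagnose_config(config_text, server_name):
    """Return a list of human-readable problems found in the config text."""
    try:
        config = json.loads(config_text)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg} at line {exc.lineno}"]
    if not isinstance(config, dict):
        return ["top level of the config must be a JSON object"]

    servers = config.get("mcpServers")
    if not isinstance(servers, dict):
        return ['missing "mcpServers" object']

    entry = servers.get(server_name)
    if not isinstance(entry, dict):
        return [f'no entry named "{server_name}" under "mcpServers"']

    problems = []
    url = entry.get("url", "")
    if not url:
        problems.append('entry has no "url"')
    elif PLACEHOLDER in url:
        problems.append("token placeholder has not been replaced")
    return problems


good = '{"mcpServers": {"helicone-llm-observability": {"url": "https://edge.vinkius.com/abc/mcp"}}}'
commented = '{\n  // my token\n  "mcpServers": {}\n}'

print(diagnose_config(good, "helicone-llm-observability"))
print(diagnose_config(commented, "helicone-llm-observability"))
```

An empty list means none of these particular checks failed; it does not prove the token itself is valid.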

Helicone (LLM Observability) + Claude Desktop FAQ

Common questions about integrating Helicone (LLM Observability) MCP Server with Claude Desktop.

How does Claude Desktop discover MCP tools?

Claude Desktop reads the claude_desktop_config.json file and connects to each configured MCP server. It calls the tools/list method to fetch the schema for every available tool, then surfaces them as clickable options in the chat interface via the 🔌 icon.

What happens if the MCP server is temporarily unavailable?

Can I connect multiple MCP servers simultaneously?

Yes. Add each server as its own entry in the mcpServers section of the config file. Each server appears as a separate tool provider, and Claude can use tools from multiple servers in a single conversation turn.

Is there a limit on the number of tools per server?

Does Claude Desktop support Streamable HTTP transport?

Connect Helicone (LLM Observability) with your favorite client

Step-by-step setup guides for every MCP-compatible client and framework:

Anthropic's native desktop app for Claude with built-in MCP support.

AI-first code editor with integrated LLM-powered coding assistance.

GitHub Copilot in VS Code with Agent mode and MCP support.

Purpose-built IDE for agentic AI coding workflows.

Autonomous AI coding agent that runs inside VS Code.

Anthropic's agentic CLI for terminal-first development.

Python SDK for building production-grade OpenAI agent workflows.

Google's framework for building production AI agents.

Type-safe agent development for Python with first-class MCP support.

TypeScript toolkit for building AI-powered web applications.

TypeScript-native agent framework for modern web stacks.

Python framework for orchestrating collaborative AI agent crews.

Leading Python framework for composable LLM applications.

Data-aware AI agent framework for structured and unstructured sources.

Microsoft's framework for multi-agent collaborative conversations.

Connect Helicone (LLM Observability) to Claude Desktop

Get your token, paste the configuration, and start using 10 tools in under 2 minutes. No API key management needed.