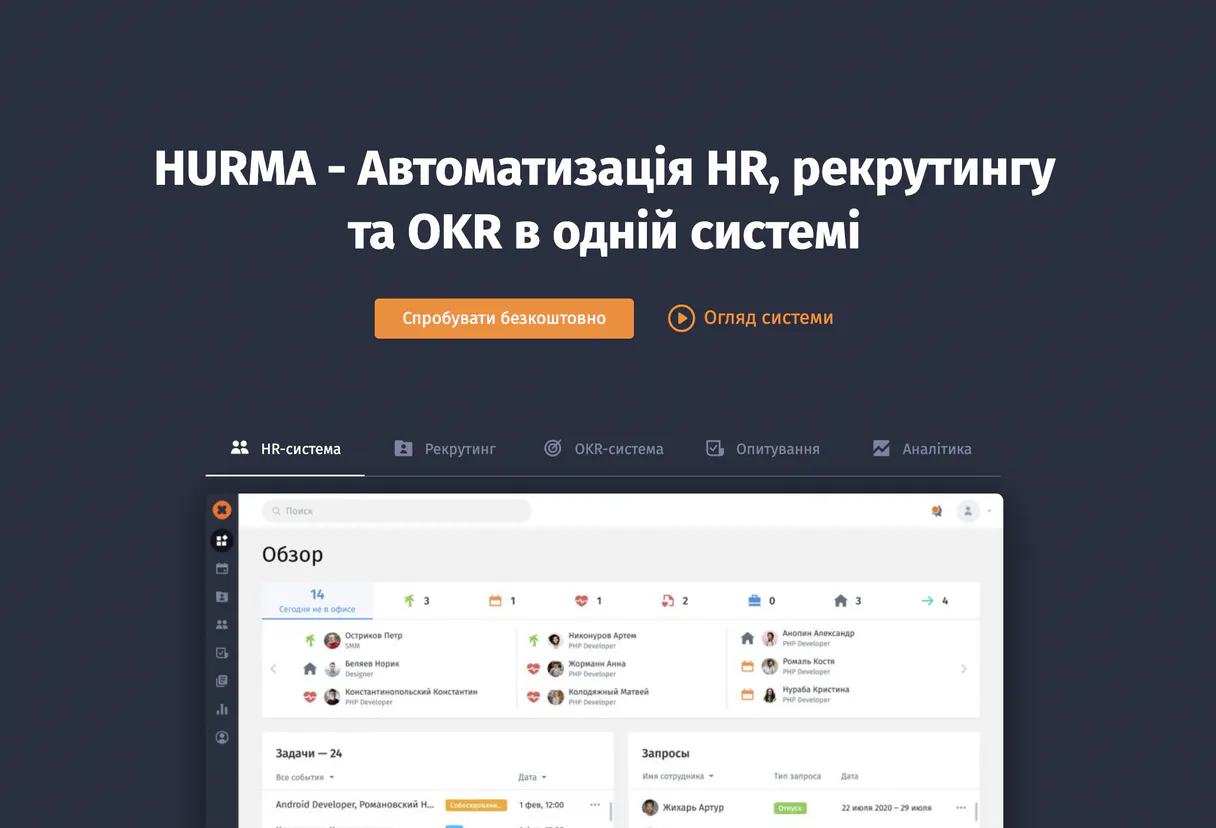

Hurma MCP Server for OpenAI Agents SDK

Give OpenAI Agents SDK instant access to 12 tools to Create Candidate, Create Leave Request, Export Overtimes, and more

The OpenAI Agents SDK enables production-grade agent workflows in Python. Connect Hurma through Vinkius and your agents gain typed, auto-discovered tools with built-in guardrails, with no manual schema definitions required.


The Hurma app connector for OpenAI Agents SDK is a standout in the Productivity category — giving your AI agent 12 tools to work with, ready to go from day one.

Vinkius delivers Streamable HTTP and SSE to any MCP client

    import asyncio

    from agents import Agent, Runner
    from agents.mcp import MCPServerStreamableHttp


    async def main():
        # Replace [YOUR_TOKEN_HERE] with your Vinkius token (get it at cloud.vinkius.com).
        async with MCPServerStreamableHttp(
            # Connection settings go in the params dict.
            params={"url": "https://edge.vinkius.com/[YOUR_TOKEN_HERE]/mcp"},
        ) as mcp_server:
            agent = Agent(
                name="Hurma Assistant",
                instructions=(
                    "You help users interact with Hurma. "
                    "You have access to 12 tools."
                ),
                mcp_servers=[mcp_server],
            )
            # The agent discovers the Hurma tools from the MCP server at run time.
            result = await Runner.run(
                agent, "List all available tools from Hurma"
            )
            print(result.final_output)


    asyncio.run(main())
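The script above embeds the token directly in the URL. A minimal sketch of reading it from an environment variable instead is shown here. The variable name `VINKIUS_TOKEN` and both helper names are hypothetical; only the URL pattern comes from the example above.

```python
import os

# URL pattern taken from the example above; {token} marks where the token goes.
EDGE_URL_TEMPLATE = "https://edge.vinkius.com/{token}/mcp"


def build_mcp_url(token: str) -> str:
    """Return the MCP endpoint URL for a token, rejecting empty or placeholder values."""
    token = token.strip()
    if not token or token == "[YOUR_TOKEN_HERE]":
        raise ValueError("Set a real Vinkius token before connecting")
    return EDGE_URL_TEMPLATE.format(token=token)


def mcp_url_from_env(var_name: str = "VINKIUS_TOKEN") -> str:
    """Read the token from an environment variable (the variable name is an assumption)."""
    return build_mcp_url(os.environ.get(var_name, ""))
```

The returned string can be passed as the server URL in the script, which keeps the token out of source control.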

* Every MCP server runs on Vinkius-managed infrastructure inside AWS: a purpose-built runtime with per-request V8 isolates, Ed25519-signed audit chains, and sub-40ms cold starts optimized for native MCP execution.

About Hurma MCP Server

Connect your Hurma instance to any AI agent and manage your HR operations through natural conversation.

The OpenAI Agents SDK auto-discovers all 12 tools from Hurma through native MCP integration. Build agents with built-in guardrails, tracing, and handoff patterns, or chain multiple agents where one queries Hurma, another analyzes results, and a third generates reports, all orchestrated through Vinkius.
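The three-agent chain described above can be sketched as a simple sequential pipeline. This is an illustration under stated assumptions, not the SDK's handoff mechanism: each stage's output is passed as plain text to the next stage. The stage names, instructions, and the helpers `run_pipeline` and `make_sdk_stage` are hypothetical.

```python
import asyncio

# Illustrative stage names and instructions; adjust to your own workflow.
STAGES = [
    ("Hurma Query Agent", "Fetch the requested HR data using the Hurma tools."),
    ("Analyst Agent", "Analyze the data you receive and list the key findings."),
    ("Report Agent", "Turn the findings into a short written report."),
]


async def run_pipeline(task, run_stage, stages=STAGES):
    """Run stages in order, feeding each stage's output to the next one."""
    text = task
    for name, instructions in stages:
        text = await run_stage(name, instructions, text)
    return text


def make_sdk_stage(mcp_server):
    """Build a run_stage callable backed by the OpenAI Agents SDK."""
    # Lazy import: run_pipeline itself has no SDK dependency.
    from agents import Agent, Runner

    async def run_stage(name, instructions, text):
        agent = Agent(name=name, instructions=instructions, mcp_servers=[mcp_server])
        result = await Runner.run(agent, text)
        return str(result.final_output)

    return run_stage


if __name__ == "__main__":
    # Offline demo with a stand-in stage; use make_sdk_stage(mcp_server) for real runs.
    async def echo_stage(name, instructions, text):
        return f"{text} -> {name}"

    print(asyncio.run(run_pipeline("Who is out of office?", echo_stage)))
```

Injecting `run_stage` keeps the chaining logic testable without network access. For real runs, pass `make_sdk_stage(mcp_server)` from inside the `async with` block that opens the connection.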

What you can do

- Recruiting Pipeline — List all candidates, inspect profiles, create new candidate records, and track hiring progress

- Employee Directory — Browse all employees with department and position details

- Time-Off Management — Monitor out-of-office schedules and leave requests

- Department Structure — Browse organizational departments

- Position Management — List all job positions

- Onboarding Tracking — Monitor new hire checklists and progress

The Hurma MCP Server exposes 12 tools through Vinkius. Connect it to OpenAI Agents SDK in under two minutes, with no API keys to rotate, no infrastructure to provision, and no vendor lock-in. Your configuration, your data, your control.

All 12 Hurma tools available for OpenAI Agents SDK

When OpenAI Agents SDK connects to Hurma through Vinkius, your AI agent gets direct access to every tool listed below — spanning employee-directory, time-off-tracking, onboarding, and more. Every call is secured with network, filesystem, subprocess, and code evaluation entitlements inside a sandboxed runtime. Beyond a simple connection, you get a full AI Gateway with real-time visibility into agent activity, enterprise governance, and optimized token usage.

- Create a new candidate
- Create a new leave or absence request
- Export overtime data
- Get details for a specific candidate
- Get details for a specific employee
- Get employee vacation balance
- List recruitment candidates
- List custom field definitions
- List all company departments
- List all employees
- List employees currently out of office
- List recruitment stages
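To see the tool names the server actually advertises, rather than only the descriptions listed above, you can query the MCP connection directly. The sketch below assumes the SDK's `MCPServerStreamableHttp` takes the URL inside a `params` dict and that `list_tools()` returns objects with `name` and `description` attributes. The helper names are hypothetical, and the demo tool is a stand-in, not a real Hurma tool name.

```python
from types import SimpleNamespace


def format_tools(tools) -> str:
    """Render one 'name: description' line per tool, sorted by name."""
    ordered = sorted(tools, key=lambda tool: tool.name)
    lines = [f"{tool.name}: {tool.description or ''}".rstrip() for tool in ordered]
    return "\n".join(lines)


async def print_hurma_tools(url: str) -> None:
    """Connect to the MCP endpoint and print every tool it advertises."""
    # Imported lazily so format_tools stays usable without the SDK installed.
    from agents.mcp import MCPServerStreamableHttp

    async with MCPServerStreamableHttp(params={"url": url}) as server:
        tools = await server.list_tools()
        print(format_tools(tools))


if __name__ == "__main__":
    # Offline demo with a stand-in object; real tool names come from the server.
    demo = [SimpleNamespace(name="example_tool", description="Hypothetical tool")]
    print(format_tools(demo))
```

For a live listing, call `asyncio.run(print_hurma_tools(url))` with your own endpoint URL.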

Connect Hurma to OpenAI Agents SDK via MCP

Follow these steps to wire Hurma into OpenAI Agents SDK. The entire setup takes under two minutes, and your credentials stay safe behind Vinkius.

- Install the SDK: run `pip install openai-agents` in your Python environment
- Replace the token: replace `[YOUR_TOKEN_HERE]` with your Vinkius token from cloud.vinkius.com
- Run the script: `python agent.py`
- Explore tools

Why Use OpenAI Agents SDK with the Hurma MCP Server

OpenAI Agents SDK provides unique advantages when paired with Hurma through the Model Context Protocol.

Native MCP integration via `MCPServerStreamableHttp` (or `MCPServerSse` for the SSE transport): pass the URL and the SDK auto-discovers all tools with full type information

Built-in guardrails, tracing, and handoff patterns let you build production-grade agents without reinventing safety infrastructure

Lightweight and composable: chain multiple agents and MCP servers in a single pipeline with minimal boilerplate

First-party OpenAI support ensures optimal compatibility with GPT models for tool calling and structured output

Hurma + OpenAI Agents SDK Use Cases

Practical scenarios where OpenAI Agents SDK combined with the Hurma MCP Server delivers measurable value.

Automated workflows: build agents that query Hurma, process the data, and trigger follow-up actions autonomously

Multi-agent orchestration: create specialist agents, where one queries Hurma, another analyzes results, and a third generates reports

Data enrichment pipelines: stream data through Hurma tools and transform it with OpenAI models in a single async loop

Customer support bots: agents query Hurma to resolve tickets, look up records, and update statuses without human intervention

Example Prompts for Hurma in OpenAI Agents SDK

Ready-to-use prompts you can give your OpenAI Agents SDK agent to start working with Hurma immediately.

"Show all candidates in the pipeline and employees out of office this week."

"List all employees in Engineering and create a new candidate for Senior Backend."

"Show onboarding status for new hires and all departments."

Troubleshooting Hurma MCP Server with OpenAI Agents SDK

Common issues when connecting Hurma to OpenAI Agents SDK through Vinkius, and how to resolve them.

- MCPServerStreamableHttp not found: run `pip install --upgrade openai-agents`
- Agent not calling tools
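For the "MCPServerStreamableHttp not found" case, a quick diagnostic can confirm whether the installed package is the cause before you upgrade. This is a sketch using only the standard library; the function name `diagnose_sdk` is hypothetical, and it assumes the SDK is distributed as `openai-agents` and imported as `agents`, as in the install and import lines on this page.

```python
import importlib
from importlib import metadata


def diagnose_sdk() -> str:
    """Report whether openai-agents is installed and exposes MCPServerStreamableHttp."""
    try:
        version = metadata.version("openai-agents")
    except metadata.PackageNotFoundError:
        return "openai-agents is not installed: run pip install openai-agents"
    try:
        mcp_module = importlib.import_module("agents.mcp")
    except ImportError as exc:
        return f"openai-agents {version} is installed but agents.mcp failed to import: {exc}"
    if hasattr(mcp_module, "MCPServerStreamableHttp"):
        return f"openai-agents {version} provides MCPServerStreamableHttp"
    return (
        f"openai-agents {version} lacks MCPServerStreamableHttp: "
        "run pip install --upgrade openai-agents"
    )


if __name__ == "__main__":
    print(diagnose_sdk())
```

Run it with the same interpreter you use for `agent.py`, since a mismatch between virtual environments is a common reason for an import that works in one shell and fails in another.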

Hurma + OpenAI Agents SDK FAQ

Common questions about integrating Hurma MCP Server with OpenAI Agents SDK.

How does the OpenAI Agents SDK connect to MCP?

Use `MCPServerSse` (or `MCPServerStreamableHttp`, as in the example above) with the server URL to create a server connection. The SDK auto-discovers all tools and makes them available to your agent with full type information.

Can I use multiple MCP servers in one agent?

Yes. Pass multiple `MCPServerSse` instances to the agent constructor. The agent can use tools from all connected servers within a single run.
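A minimal sketch of the multi-server setup follows, using `contextlib.AsyncExitStack` to open a variable number of connections and close them in reverse order. The helper names are hypothetical, and the sketch assumes the SDK's server classes take the URL inside a `params` dict and work as async context managers, as in the example earlier on this page. It uses `MCPServerStreamableHttp`; `MCPServerSse` could be swapped in the same way.

```python
import asyncio
import sys
from contextlib import AsyncExitStack


async def run_with_servers(urls, prompt, connect, run_agent):
    """Open one MCP connection per URL, then run a single agent over all of them."""
    async with AsyncExitStack() as stack:
        servers = [await stack.enter_async_context(connect(url)) for url in urls]
        return await run_agent(servers, prompt)


def sdk_connect(url):
    """Return an MCP server context manager from the OpenAI Agents SDK."""
    from agents.mcp import MCPServerStreamableHttp  # lazy import

    return MCPServerStreamableHttp(params={"url": url})


async def sdk_run_agent(servers, prompt):
    """Run one agent that can call tools from every connected server."""
    from agents import Agent, Runner  # lazy import

    agent = Agent(
        name="Multi-server Assistant",
        instructions="Use whichever connected tools fit the request.",
        mcp_servers=servers,
    )
    result = await Runner.run(agent, prompt)
    return result.final_output


if __name__ == "__main__":
    # Pass one or more MCP endpoint URLs on the command line.
    if len(sys.argv) > 1:
        print(asyncio.run(run_with_servers(
            sys.argv[1:], "List all available tools", sdk_connect, sdk_run_agent
        )))
```

Because `connect` and `run_agent` are injected, the connection handling can be exercised with stand-ins before pointing it at real endpoints.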