LangSmith (LLM Observability & Hub) MCP Server for VS Code Copilot

6 tools. Connect in under 2 minutes.

GitHub Copilot in VS Code is the most widely adopted AI coding assistant, embedded directly into the world's most popular code editor. With MCP support in Agent mode, Copilot can access external data and APIs to generate context-aware code grounded in real-time information.

ASK AI ABOUT THIS MCP SERVER

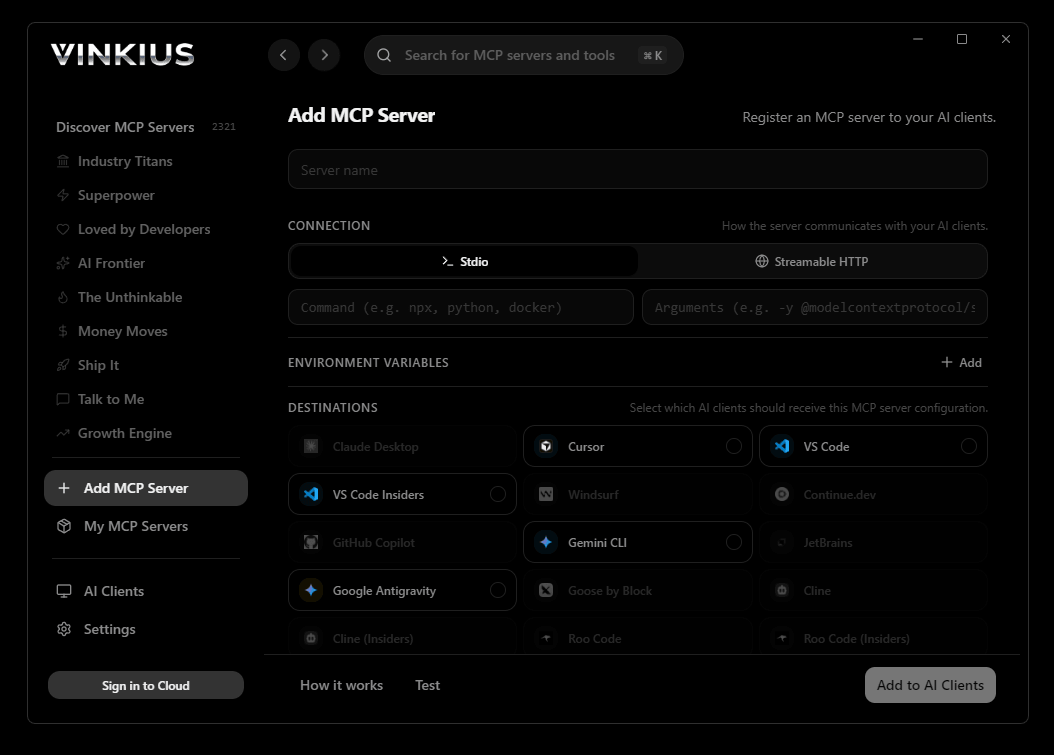

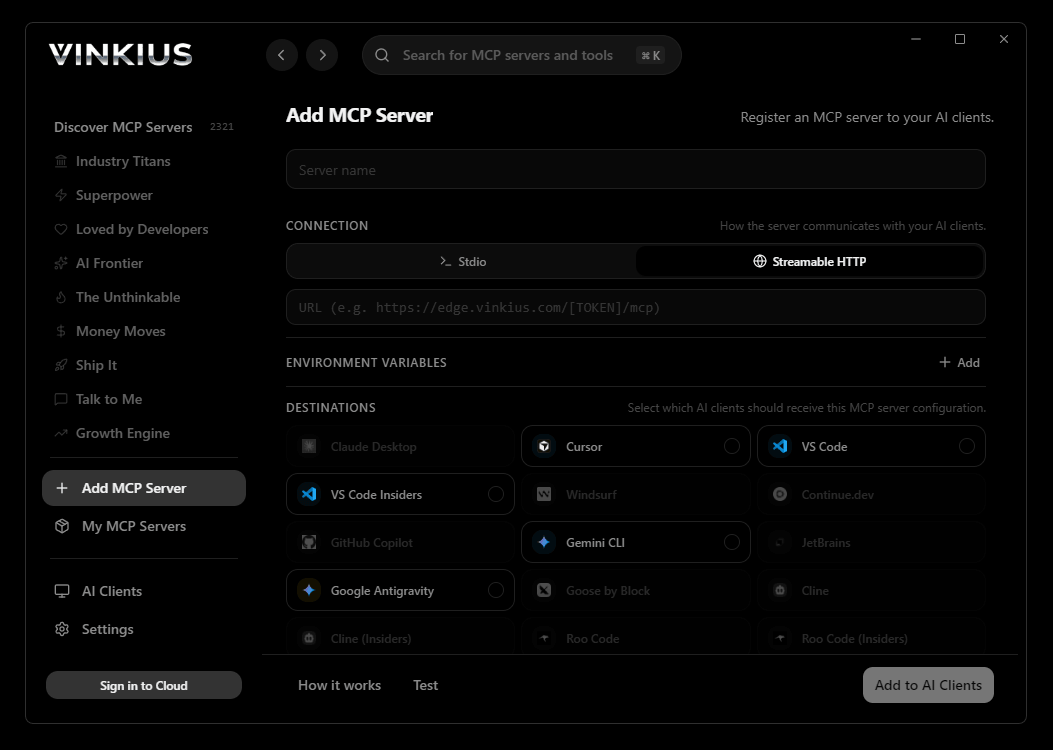

Vinkius supports streamable HTTP and SSE.

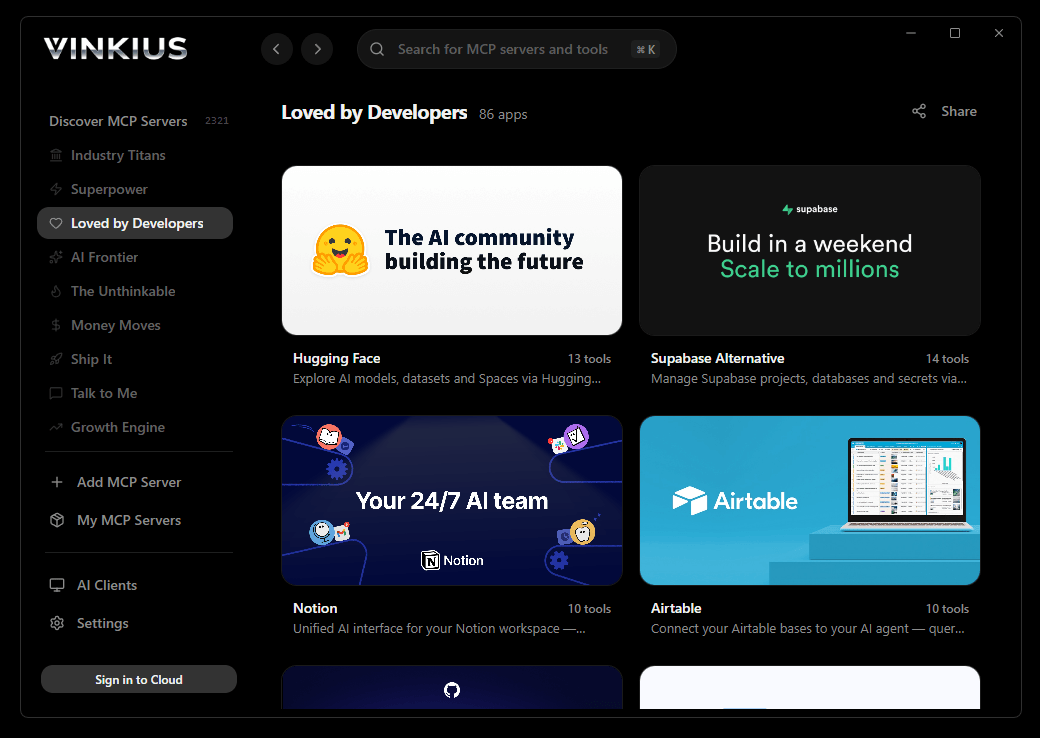

Vinkius Desktop App

The modern way to manage MCP Servers — no config files, no terminal commands. Install LangSmith (LLM Observability & Hub) and 2,500+ MCP Servers from a single visual interface.

    {
      "mcpServers": {
        "langsmith-llm-observability-hub": {
          "url": "https://edge.vinkius.com/[YOUR_TOKEN_HERE]/mcp"
        }
      }
    }

* Every MCP server runs on Vinkius-managed infrastructure inside AWS: a purpose-built runtime with per-request V8 isolates, Ed25519-signed audit chains, and sub-40ms cold starts optimized for native MCP execution.

About LangSmith (LLM Observability & Hub) MCP Server

Connect your LangSmith account to any AI agent and take full control of your LLM observability, tracing, and prompt management through natural conversation.

GitHub Copilot Agent mode brings LangSmith (LLM Observability & Hub) data directly into your VS Code workflow. With a project-scoped config, the entire team shares access to 6 tools — Copilot queries live data, generates typed code, and writes tests from actual API responses, all without leaving the editor.

What you can do

- Trace Orchestration — List active tracing projects and retrieve detailed execution logs for specific LLM invocation runs directly from your agent

- Performance Telemetry — Extract precise metrics including token consumption, prompt latency, and exact error strings from your AI pipelines

- Prompt Hub Access — Navigate and retrieve managed prompt templates, variable definitions, and version histories hosted in the LangChain Hub

- Evaluation Datasets — Enumerate curated 'golden' datasets used for automated evaluation of prompt logic or few-shot injection models

- Human-in-the-Loop Audit — Monitor active annotation queues where human reviewers assess the alignment, accuracy, and safety of generated LLM traces

- Agentic Step Analysis — Deep-dive into multi-turn agentic workflows to understand nested tool calls and internal reasoning paths securely
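As a rough illustration of the telemetry item above, the sketch below derives latency and token totals from a single run record. The field names (`start_time`, `end_time`, `prompt_tokens`, `completion_tokens`, `error`) and the sample values are assumptions made for this example, not a documented response format of this MCP server; adjust them to whatever the tools actually return.

```python
from datetime import datetime


def summarize_run(run: dict) -> dict:
    """Reduce one run record to latency, token usage, and error text.

    The keys read here are assumed for illustration only.
    """
    start = datetime.fromisoformat(run["start_time"])
    end = datetime.fromisoformat(run["end_time"])
    return {
        "latency_s": (end - start).total_seconds(),
        "total_tokens": run.get("prompt_tokens", 0) + run.get("completion_tokens", 0),
        "error": run.get("error"),
    }


# Made-up sample record, not real LangSmith output.
sample_run = {
    "start_time": "2024-01-01T12:00:00",
    "end_time": "2024-01-01T12:00:02.500000",
    "prompt_tokens": 120,
    "completion_tokens": 30,
    "error": None,
}

print(summarize_run(sample_run))
```

In practice Copilot can do this kind of summarizing for you in chat; a helper like this is mainly useful if you want the same numbers in a script or a test.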

The LangSmith (LLM Observability & Hub) MCP Server exposes 6 tools through Vinkius. Connect it to VS Code Copilot in under two minutes, with no API keys to rotate, no infrastructure to provision, and no vendor lock-in. Your configuration, your data, your control.

How to Connect LangSmith (LLM Observability & Hub) to VS Code Copilot via MCP

Follow these steps to integrate the LangSmith (LLM Observability & Hub) MCP Server with VS Code Copilot.

1. Create MCP config: create a `.vscode/mcp.json` file in your project root.

2. Add the server config: paste the JSON configuration above.

3. Enable Agent mode: open GitHub Copilot Chat and switch to Agent mode using the dropdown.

4. Start using LangSmith (LLM Observability & Hub): ask Copilot "Using LangSmith (LLM Observability & Hub), help me..." (6 tools available).
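If you would rather script the first two steps, a minimal sketch along these lines could generate the file. The function names are hypothetical, and the key names simply mirror the JSON snippet on this page. VS Code's own documentation may describe a different top-level key (for example `servers`), so check the result against the VS Code version you run.

```python
import json
from pathlib import Path

# Server name and URL pattern copied from the configuration shown on this page.
SERVER_NAME = "langsmith-llm-observability-hub"
URL_TEMPLATE = "https://edge.vinkius.com/{token}/mcp"


def build_config(token: str) -> dict:
    """Return the config structure from this page with the token filled in."""
    return {
        "mcpServers": {
            SERVER_NAME: {"url": URL_TEMPLATE.format(token=token)}
        }
    }


def write_config(project_root: Path, token: str) -> Path:
    """Write .vscode/mcp.json under project_root and return its path."""
    config_path = project_root / ".vscode" / "mcp.json"
    config_path.parent.mkdir(parents=True, exist_ok=True)
    config_path.write_text(
        json.dumps(build_config(token), indent=2) + "\n", encoding="utf-8"
    )
    return config_path
```

Calling `write_config(Path.cwd(), "<your token>")` from the project root would create the file. Note that it overwrites any existing `.vscode/mcp.json`, so merge by hand if you already have other servers configured.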

Why Use VS Code Copilot with the LangSmith (LLM Observability & Hub) MCP Server

GitHub Copilot for Visual Studio Code provides unique advantages when paired with LangSmith (LLM Observability & Hub) through the Model Context Protocol.

- VS Code is used by over 70% of developers, so adding MCP tools to Copilot means your team can leverage external data without leaving their primary editor

- Project-scoped MCP configs (`.vscode/mcp.json`) let you commit server configurations to your repository, ensuring the entire team shares the same tool access

- Copilot's Agent mode integrates MCP tools seamlessly with file editing, terminal commands, and workspace search in a single agentic loop

- GitHub's enterprise compliance and audit features extend to MCP tool usage, providing visibility into how AI interacts with external services

LangSmith (LLM Observability & Hub) + VS Code Copilot Use Cases

Practical scenarios where VS Code Copilot combined with the LangSmith (LLM Observability & Hub) MCP Server delivers measurable value.

- Live API integration: Copilot can query an MCP server, inspect the response schema, and generate typed API client code in the same step

- DevSecOps workflows: security teams can give developers access to domain intelligence tools directly in their editor for real-time vulnerability assessment during code review

- Data pipeline development: Copilot fetches sample data via MCP and generates transformation scripts, validators, and test fixtures from actual API responses

- Documentation generation: Copilot queries available tools and auto-generates README sections, API reference docs, and usage examples

LangSmith (LLM Observability & Hub) MCP Tools for VS Code Copilot (6)

These 6 tools become available when you connect LangSmith (LLM Observability & Hub) to VS Code Copilot via MCP:

- `get_run`: Get precise telemetry for a single LLM invocation run.

- `list_annotation_queues`: List active human-in-the-loop annotation queues.

- `list_datasets`: List all evaluation and fine-tuning datasets mapped in LangSmith.

- `list_projects`: List all active LangSmith tracing projects/sessions, mapping out the boundaries of the distinct AI pipelines currently monitored by LangSmith.

- `list_prompts`: Extract prompt templates hosted in the LangChain Hub.

- `list_runs`: List explicit LLM invocation runs within a specific project, isolating the raw interactions: the prompts sent to the AI models and the responses received from them.
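Copilot invokes these tools for you, but it can help to see roughly what goes over the wire. MCP is built on JSON-RPC 2.0, with `tools/list` to discover tools and `tools/call` to invoke one. The sketch below only builds the request bodies and sends nothing. The `run_id` argument name is an assumption for illustration; the real input schema for each tool is whatever the server reports in its `tools/list` response.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry a unique id


def jsonrpc_request(method, params=None):
    """Build a JSON-RPC 2.0 request object as a plain dict."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


def tool_call(name, arguments):
    """Build an MCP tools/call request for the named tool."""
    return jsonrpc_request("tools/call", {"name": name, "arguments": arguments})


# Discover the tools, then call one of them.
list_tools_request = jsonrpc_request("tools/list")
# "run_id" is a guessed argument name, used here only as a placeholder.
get_run_request = tool_call("get_run", {"run_id": "<run-id>"})

print(json.dumps(get_run_request, indent=2))
```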

Example Prompts for LangSmith (LLM Observability & Hub) in VS Code Copilot

Ready-to-use prompts you can give your VS Code Copilot agent to start working with LangSmith (LLM Observability & Hub) immediately.

"List all active tracing projects in LangSmith"

"Show me the telemetry for the last run in the 'Production-Bot-V2' project"

"List all prompts hosted in our Hub repository"

Troubleshooting LangSmith (LLM Observability & Hub) MCP Server with VS Code Copilot

Common issues when connecting LangSmith (LLM Observability & Hub) to VS Code Copilot through Vinkius, and how to resolve them.

MCP tools not available

LangSmith (LLM Observability & Hub) + VS Code Copilot FAQ

Common questions about integrating LangSmith (LLM Observability & Hub) MCP Server with VS Code Copilot.

Which VS Code version supports MCP?

How do I switch to Agent mode?

Can I restrict which MCP tools Copilot can access?

Does MCP work in VS Code Remote or Codespaces?

Yes. Configurations in `.vscode/mcp.json` work in Remote SSH, WSL, and GitHub Codespaces environments. The MCP connection is established from the remote host, so ensure the server URL is accessible from that environment.

Connect LangSmith (LLM Observability & Hub) with your favorite client

Step-by-step setup guides for every MCP-compatible client and framework:

Anthropic's native desktop app for Claude with built-in MCP support.

AI-first code editor with integrated LLM-powered coding assistance.

GitHub Copilot in VS Code with Agent mode and MCP support.

Purpose-built IDE for agentic AI coding workflows.

Autonomous AI coding agent that runs inside VS Code.

Anthropic's agentic CLI for terminal-first development.

Python SDK for building production-grade OpenAI agent workflows.

Google's framework for building production AI agents.

Type-safe agent development for Python with first-class MCP support.

TypeScript toolkit for building AI-powered web applications.

TypeScript-native agent framework for modern web stacks.

Python framework for orchestrating collaborative AI agent crews.

Leading Python framework for composable LLM applications.

Data-aware AI agent framework for structured and unstructured sources.

Microsoft's framework for multi-agent collaborative conversations.

Connect LangSmith (LLM Observability & Hub) to VS Code Copilot

Get your token, paste the configuration, and start using 6 tools in under 2 minutes. No API key management needed.