New Relic AI (LLM Observability) MCP Server for AutoGen

10 tools. Connect in under 2 minutes.

Microsoft AutoGen enables multi-agent conversations where agents negotiate, delegate, and execute tasks collaboratively. Add New Relic AI (LLM Observability) as an MCP tool provider through Vinkius, and every agent in the group can query live data and act on it.


Vinkius supports streamable HTTP and SSE.

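import asyncio

from autogen_agentchat.agents import AssistantAgent
from autogen_ext.models.openai import OpenAIChatCompletionClient
from autogen_ext.tools.mcp import McpWorkbench, StreamableHttpServerParams


async def main():
    # Your Vinkius token: get it at cloud.vinkius.com
    server_params = StreamableHttpServerParams(
        url="https://edge.vinkius.com/[YOUR_TOKEN_HERE]/mcp",
    )

    # Assumption: AssistantAgent needs a model client. OpenAI is used here only
    # as an example; it requires the "autogen-ext[openai]" extra and OPENAI_API_KEY.
    model_client = OpenAIChatCompletionClient(model="gpt-4o")

    async with McpWorkbench(server_params=server_params) as workbench:
        # List the tools the MCP server exposes.
        tools = await workbench.list_tools()

        agent = AssistantAgent(
            name="new_relic_ai_llm_observability_agent",
            model_client=model_client,
            # The agent discovers and calls the MCP tools through the workbench.
            workbench=workbench,
            system_message=(
                "You help users with New Relic AI (LLM Observability). "
                "10 tools available."
            ),
        )
        print(f"Agent ready with {len(tools)} tools")


asyncio.run(main())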

Every MCP server runs on Vinkius-managed infrastructure inside AWS: a purpose-built runtime with per-request V8 isolates, Ed25519-signed audit chains, and sub-40ms cold starts optimized for native MCP execution.

About New Relic AI (LLM Observability) MCP Server

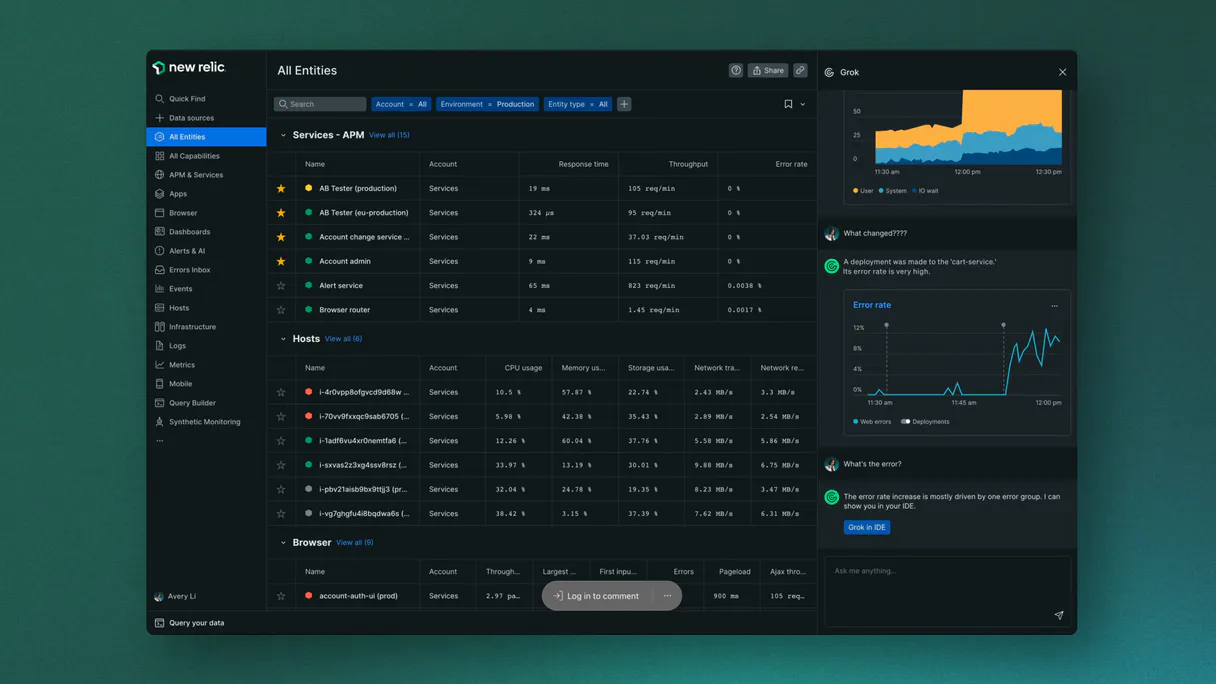

Connect your New Relic AI account to any AI agent and take full control of your LLM observability, token cost tracking, and performance analytics through natural conversation.

AutoGen enables multi-agent conversations in which agents negotiate, delegate, and collaboratively use New Relic AI (LLM Observability) tools. Connect the 10 tools through Vinkius and assign role-based access: a data analyst agent queries while a reviewer agent validates, with optional human-in-the-loop approval for sensitive operations.

What you can do

- LLM Telemetry Audit: Retrieve LLM chat completion messages and prompt inputs from your agent to see exactly how the model behaved.

- Token Cost Tracking: Extract model cost data to calculate token spend in USD across your AI infrastructure.

- Performance Monitoring: Pull p95 latency and average response times to check that LLM text generation stays sub-second.

- User Feedback Loop: Retrieve feedback messages and 1-5 rating scores from human reviewers, in chronological order, to spot quality regressions.

- Custom NRQL Execution: Run read-only New Relic Query Language (NRQL) queries against your AI datasets.

- Custom Event Injection: Post custom telemetry rows to track internal agent states and behavioral markers across your observability pipeline.

- Resource Discovery: List active APM apps, dashboards, and alert policies to audit the health of your AI environment and its PagerDuty configurations.
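To make the latency and cost figures in this list concrete, here is a minimal sketch of the arithmetic behind a p95 latency and a token cost. The sample durations, the token count, and the per-1,000-token price are made-up illustration values, not New Relic data or real vendor pricing, and the helper names are hypothetical. The server's tools return these figures from New Relic; this only shows what the numbers mean.

```python
def p95(values):
    """Nearest-rank 95th percentile: the smallest sample with at least
    95% of all samples at or below it."""
    if not values:
        raise ValueError("need at least one sample")
    ordered = sorted(values)
    # ceil(0.95 * n) computed with integers to avoid float rounding.
    rank = (95 * len(ordered) + 99) // 100
    return ordered[rank - 1]


def token_cost_usd(tokens, usd_per_1k_tokens):
    """Cost of a token count at a flat price per 1,000 tokens."""
    return tokens / 1000 * usd_per_1k_tokens


# Made-up response times in seconds for 20 requests, with one slow outlier.
durations = [0.2, 0.3, 0.25, 0.4, 0.35, 0.3, 0.28, 0.5, 0.45, 0.32,
             0.31, 0.29, 0.6, 0.27, 0.33, 0.38, 0.41, 0.26, 1.8, 0.36]

average = sum(durations) / len(durations)
print(f"average: {average:.3f}s  p95: {p95(durations)}s")
print(f"cost of 12,000 tokens at $0.50 per 1K: ${token_cost_usd(12_000, 0.5):.2f}")
```

The p95 ignores the single slowest request here, which is why it is often reported next to the average: the average is pulled up by outliers, while the p95 describes what most requests experience.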

The New Relic AI (LLM Observability) MCP Server exposes 10 tools through Vinkius. Connect it to AutoGen in under two minutes: no API keys to rotate, no infrastructure to provision, no vendor lock-in. Your configuration, your data, your control.

How to Connect New Relic AI (LLM Observability) to AutoGen via MCP

Follow these steps to integrate the New Relic AI (LLM Observability) MCP Server with AutoGen.

- Install AutoGen: run `pip install "autogen-ext[mcp]"`.

- Replace the token: replace `[YOUR_TOKEN_HERE]` with your Vinkius token.

- Integrate into workflow: use the agent in your AutoGen multi-agent orchestration.

- Explore tools: the workbench discovers the 10 New Relic AI (LLM Observability) tools automatically.
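For the token step, one option is to keep the token out of source code and build the endpoint URL at runtime. The sketch below assumes the URL shape shown in the connection snippet; the environment variable name `VINKIUS_TOKEN` and both helper functions are hypothetical, chosen only for illustration.

```python
import os

# URL shape taken from the connection snippet on this page.
VINKIUS_URL_TEMPLATE = "https://edge.vinkius.com/{token}/mcp"


def build_vinkius_url(token):
    """Return the MCP endpoint URL, rejecting empty or placeholder tokens."""
    token = token.strip()
    if not token or token == "[YOUR_TOKEN_HERE]":
        raise ValueError("set a real Vinkius token before connecting")
    return VINKIUS_URL_TEMPLATE.format(token=token)


def url_from_env(var_name="VINKIUS_TOKEN"):
    """Read the token from an environment variable (the name is an assumption)."""
    return build_vinkius_url(os.environ.get(var_name, ""))


if __name__ == "__main__":
    try:
        url_from_env()
        print("Vinkius endpoint URL is configured")
    except ValueError as exc:
        print(f"Configuration error: {exc}")
```

The resulting string can be passed wherever the connection snippet uses the hard-coded URL. The script avoids printing the URL itself because the token is embedded in it.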

Why Use AutoGen with the New Relic AI (LLM Observability) MCP Server

AutoGen provides unique advantages when paired with New Relic AI (LLM Observability) through the Model Context Protocol.

Multi-agent conversations: multiple AutoGen agents discuss, delegate, and collaboratively use New Relic AI (LLM Observability) tools to solve complex tasks

Role-based architecture lets you assign New Relic AI (LLM Observability) tool access to specific agents — a data analyst queries while a reviewer validates

Human-in-the-loop support: agents can pause for human approval before executing sensitive New Relic AI (LLM Observability) tool calls

Code execution sandbox: AutoGen agents can write and run code that processes New Relic AI (LLM Observability) tool responses in an isolated environment
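The role-based access and human-approval points above can be pictured as a small policy table. The following is a plain-Python sketch, not AutoGen's API: the tool names are the ones this server exposes, but the role names, the choice to treat `post_custom_event` as the only sensitive tool, and the `authorize` helper are assumptions made for illustration.

```python
# The nine tools that only read data.
READ_ONLY_TOOLS = {
    "custom_nrql", "list_alert_policies", "list_apm_apps", "list_dashboards",
    "query_llm_costs", "query_llm_errors", "query_llm_events",
    "query_llm_feedback", "query_llm_latency",
}
# Assumption: the one tool that writes data is treated as sensitive.
WRITE_TOOLS = {"post_custom_event"}

# Hypothetical roles and the tools each may call.
ROLE_TOOLS = {
    "analyst": READ_ONLY_TOOLS,
    "reviewer": {"query_llm_events", "query_llm_feedback", "query_llm_errors"},
    "operator": READ_ONLY_TOOLS | WRITE_TOOLS,
}


def authorize(role, tool, approved_by_human=False):
    """Decide whether a role may call a tool right now."""
    if tool not in ROLE_TOOLS.get(role, set()):
        return "denied"
    if tool in WRITE_TOOLS and not approved_by_human:
        return "pending_approval"
    return "allowed"


print(authorize("analyst", "query_llm_costs"))
print(authorize("analyst", "post_custom_event"))
print(authorize("operator", "post_custom_event"))
print(authorize("operator", "post_custom_event", approved_by_human=True))
```

How such a policy is enforced in a real AutoGen team depends on how the agents and their tool access are wired, which this sketch does not cover.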

New Relic AI (LLM Observability) + AutoGen Use Cases

Practical scenarios where AutoGen combined with the New Relic AI (LLM Observability) MCP Server delivers measurable value.

Collaborative analysis: one agent queries New Relic AI (LLM Observability) while another validates results and a third generates the final report

Automated review pipelines: a researcher agent fetches data from New Relic AI (LLM Observability), a critic agent evaluates quality, and a writer produces the output

Interactive planning: agents negotiate task allocation using New Relic AI (LLM Observability) data to make informed decisions about resource distribution

Code generation with live data: an AutoGen coder agent writes scripts that process New Relic AI (LLM Observability) responses in a sandboxed execution environment

New Relic AI (LLM Observability) MCP Tools for AutoGen (10)

These 10 tools become available when you connect New Relic AI (LLM Observability) to AutoGen via MCP:

- `custom_nrql`: Run a custom NRQL query. Note that NRQL is read-only.

- `list_alert_policies`: List the alert policies in your account.

- `list_apm_apps`: List active APM applications.

- `list_dashboards`: List dashboards.

- `post_custom_event`: Post generic `CustomAITelemetry` rows through the `/events` endpoint to track internal agent state.

- `query_llm_costs`: Query LLM token costs.

- `query_llm_errors`: Query LLM errors.

- `query_llm_events`: Query LLM events, such as chat completion messages and prompt inputs.

- `query_llm_feedback`: Query user feedback messages and rating scores.

- `query_llm_latency`: Query LLM latency, including p95 and average response times.
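As an illustration of what a `CustomAITelemetry` row might look like, here is a minimal sketch that builds one as a flat dictionary. The `eventType` field follows New Relic's convention for custom events; the `agentName` and `agentState` attribute names and the `make_custom_event` helper are hypothetical, and the exact arguments the `post_custom_event` tool accepts are not documented on this page.

```python
import json
import time


def make_custom_event(agent_name, state, **attributes):
    """Build one flat telemetry row describing an agent's internal state."""
    event = {
        "eventType": "CustomAITelemetry",
        "timestamp": int(time.time()),  # Unix epoch seconds
        "agentName": agent_name,        # hypothetical attribute name
        "agentState": state,            # hypothetical attribute name
    }
    for key, value in attributes.items():
        # Keep rows flat: only strings and numbers, no nested structures.
        if not isinstance(value, (str, int, float)):
            raise TypeError(f"attribute {key!r} must be a string or number")
        event[key] = value
    return event


row = make_custom_event("planner", "waiting_for_review", retries=2)
print(json.dumps([row], indent=2))
```

Keeping each row flat makes the attributes directly usable in later NRQL filters and aggregations.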

Example Prompts for New Relic AI (LLM Observability) in AutoGen

Ready-to-use prompts you can give your AutoGen agent to start working with New Relic AI (LLM Observability) immediately.

"Show me the last 5 LLM events for the 'OpenAI' vendor"

"What is my total LLM token cost for the last 24 hours?"

"Run NRQL: SELECT count(*) FROM LlmEvent WHERE duration > 2 SINCE 1 hour ago"

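If you generate NRQL strings in code instead of typing them into a prompt, a small helper keeps the clauses in a consistent order. This is a sketch under assumptions: `build_nrql` is a hypothetical helper, the `LlmEvent` event type and `duration` attribute come from the example prompt above, and the `vendor` attribute in the second query is a guess at the schema.

```python
def build_nrql(select, event_type, where=None, since=None, limit=None):
    """Assemble a read-only NRQL query string from its clauses."""
    parts = [f"SELECT {select}", f"FROM {event_type}"]
    if where:
        parts.append(f"WHERE {where}")
    if since:
        parts.append(f"SINCE {since}")
    if limit is not None:
        parts.append(f"LIMIT {int(limit)}")
    return " ".join(parts)


# The query from the example prompt above.
slow_calls = build_nrql("count(*)", "LlmEvent",
                        where="duration > 2", since="1 hour ago")
print(slow_calls)

# Assumption: events carry a 'vendor' attribute.
recent_openai = build_nrql("*", "LlmEvent",
                           where="vendor = 'OpenAI'", limit=5)
print(recent_openai)
```

The helper only concatenates strings, so do not feed untrusted input into the `where` clause without validating it first.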
Troubleshooting New Relic AI (LLM Observability) MCP Server with AutoGen

Common issues when connecting New Relic AI (LLM Observability) to AutoGen through Vinkius, and how to resolve them.

McpWorkbench not found

Run pip install "autogen-ext[mcp]"

New Relic AI (LLM Observability) + AutoGen FAQ

Common questions about integrating New Relic AI (LLM Observability) MCP Server with AutoGen.

How does AutoGen connect to MCP servers?

Can different agents have different MCP tool access?

Does AutoGen support human approval for tool calls?


Connect New Relic AI (LLM Observability) to AutoGen

Get your token, paste the configuration, and start using 10 tools in under 2 minutes. No API key management needed.