Open Library Alternative MCP Server for Mastra AI

3 tools. Connect in under 2 minutes.

Mastra AI is a TypeScript-native agent framework built for modern web stacks. Connect Open Library Alternative through Vinkius and Mastra agents discover all tools automatically. The setup is type-safe, streaming-ready, and deployable anywhere Node.js runs.

Vinkius supports streamable HTTP and SSE.

    import { Agent } from "@mastra/core/agent";
    import { createMCPClient } from "@mastra/mcp";
    import { openai } from "@ai-sdk/openai";

    async function main() {
      // Replace [YOUR_TOKEN_HERE] with your Vinkius token (get it at cloud.vinkius.com).
      const mcpClient = await createMCPClient({
        servers: {
          "open-library-alternative": {
            url: "https://edge.vinkius.com/[YOUR_TOKEN_HERE]/mcp",
          },
        },
      });

      // Fetch the tools the server exposes and hand them to the agent.
      const tools = await mcpClient.getTools();

      const agent = new Agent({
        name: "Open Library Alternative Agent",
        instructions:
          "You help users interact with Open Library Alternative " +
          "using 3 tools.",
        model: openai("gpt-4o"),
        tools,
      });

      // Ask a first question and print the model's text answer.
      const result = await agent.generate(
        "What can I do with Open Library Alternative?"
      );
      console.log(result.text);
    }

    main();

* Every MCP server runs on Vinkius-managed infrastructure inside AWS: a purpose-built runtime with per-request V8 isolates, Ed25519-signed audit chains, and sub-40ms cold starts optimized for native MCP execution.

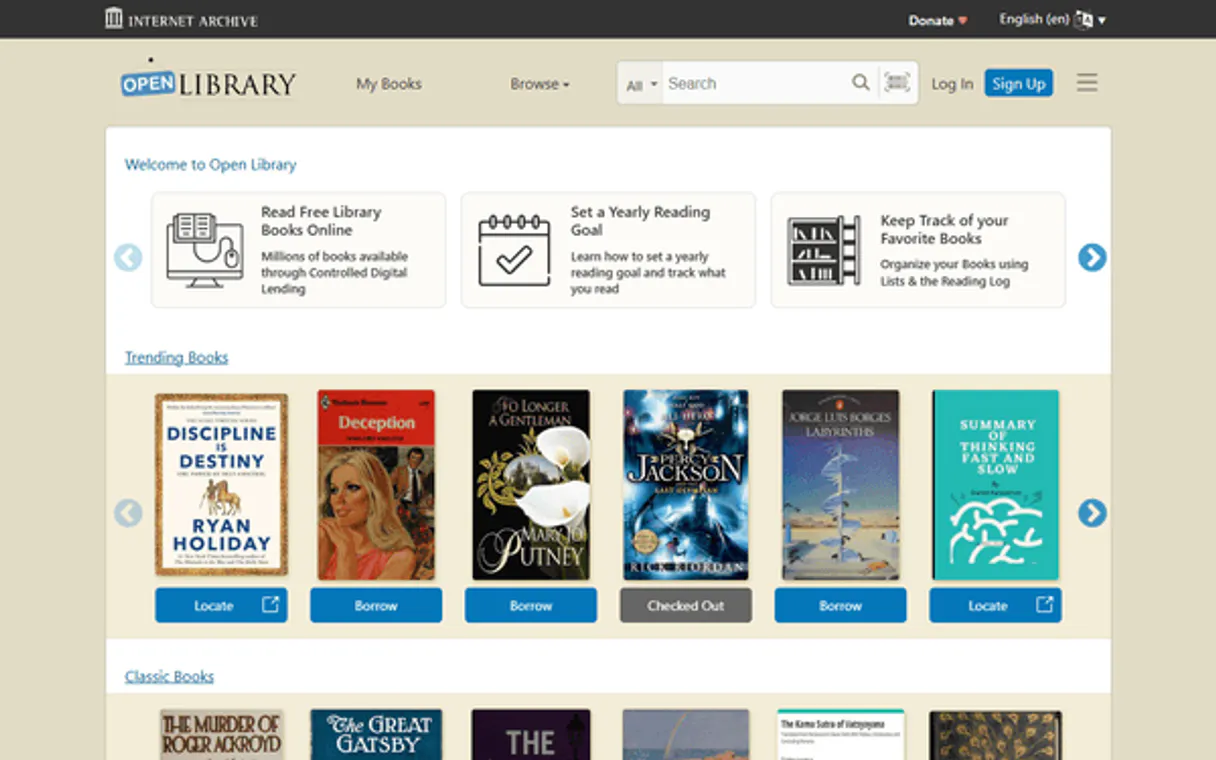

About Open Library Alternative MCP Server

Equip your AI agent with access to one of the world's largest open book databases through the Open Library MCP server. This integration provides real-time access to millions of book records, author biographies, and edition details. Your agent can search for books by title or keyword, retrieve detailed metadata using ISBNs, and explore the complete works of any author. Whether you're a student, researcher, or avid reader, your agent acts as a dedicated digital librarian through natural conversation.

Mastra's agent abstraction provides a clean separation between LLM logic and Open Library Alternative tool infrastructure. Connect 3 tools through Vinkius and use Mastra's built-in workflow engine to chain tool calls with conditional logic, retries, and parallel execution. The result is deployable to any Node.js host in one command.

What you can do

- Comprehensive Search — Find books by title, author, or general keywords across millions of records.

- ISBN Lookup — Retrieve precise metadata for specific editions using ISBN-10 or ISBN-13.

- Author Bibliography — List all works and editions associated with a specific author key.

- Metadata Extraction — Access publication dates, subjects, and classification data for various titles.

- Bibliographic Auditing — Summarize multiple editions or author portfolios for research and cataloging.
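Because ISBN Lookup accepts both ISBN-10 and ISBN-13, it can help to validate an identifier before the agent spends a tool call on it. The following is a minimal sketch of the standard check-digit rules; the function names are hypothetical helpers and are not part of the MCP server or of Mastra.

```typescript
// Strip hyphens and spaces so "978-0-14-118270-4" and "9780141182704" match.
function normalizeIsbn(raw: string): string {
  return raw.replace(/[\s-]/g, "").toUpperCase();
}

// ISBN-10: weights 10..1, total must be divisible by 11; "X" means 10.
function isValidIsbn10(isbn: string): boolean {
  if (!/^\d{9}[\dX]$/.test(isbn)) return false;
  let sum = 0;
  for (let i = 0; i < 10; i++) {
    const ch = isbn.charAt(i);
    const value = ch === "X" ? 10 : Number(ch);
    sum += value * (10 - i);
  }
  return sum % 11 === 0;
}

// ISBN-13: alternating weights 1 and 3, total must be divisible by 10.
function isValidIsbn13(isbn: string): boolean {
  if (!/^\d{13}$/.test(isbn)) return false;
  let sum = 0;
  for (let i = 0; i < 13; i++) {
    sum += Number(isbn.charAt(i)) * (i % 2 === 0 ? 1 : 3);
  }
  return sum % 10 === 0;
}

// Accepts either format, with or without hyphens.
function isValidIsbn(raw: string): boolean {
  const isbn = normalizeIsbn(raw);
  return isbn.length === 10 ? isValidIsbn10(isbn) : isValidIsbn13(isbn);
}
```

A check like this only confirms that the number is well formed. Whether a record exists for it is still up to the lookup itself.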

The Open Library Alternative MCP Server exposes 3 tools through Vinkius. Connect it to Mastra AI in under two minutes, with no API keys to rotate, no infrastructure to provision, and no vendor lock-in. Your configuration, your data, your control.

How to Connect Open Library Alternative to Mastra AI via MCP

Follow these steps to integrate the Open Library Alternative MCP Server with Mastra AI.

1. Install dependencies. Run: npm install @mastra/core @mastra/mcp @ai-sdk/openai

2. Replace the token. Replace [YOUR_TOKEN_HERE] with your Vinkius token.

3. Run the agent. Save the code to agent.ts and run it with: npx tsx agent.ts

4. Explore tools. Mastra discovers 3 tools from Open Library Alternative via MCP.
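If you would rather not paste the token into source code, the endpoint URL can be assembled at startup. This is a small sketch under assumptions: `buildEndpointUrl` is a hypothetical helper, and only the URL pattern `https://edge.vinkius.com/<token>/mcp` comes from the example above.

```typescript
const VINKIUS_EDGE = "https://edge.vinkius.com";

// Build the MCP endpoint URL from a token, refusing the unreplaced placeholder.
function buildEndpointUrl(token: string): string {
  const trimmed = token.trim();
  if (trimmed === "" || trimmed === "[YOUR_TOKEN_HERE]") {
    throw new Error("Set a real Vinkius token before connecting.");
  }
  // Encoding is a precaution in case the token contains reserved characters.
  return `${VINKIUS_EDGE}/${encodeURIComponent(trimmed)}/mcp`;
}
```

In a Node.js script you might call it as `buildEndpointUrl(process.env.VINKIUS_TOKEN ?? "")`, where `VINKIUS_TOKEN` is an environment variable name chosen here purely for illustration.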

Why Use Mastra AI with the Open Library Alternative MCP Server

Mastra AI provides unique advantages when paired with Open Library Alternative through the Model Context Protocol.

Mastra's agent abstraction provides a clean separation between LLM logic and tool infrastructure, so you can add Open Library Alternative without touching business code

Built-in workflow engine chains MCP tool calls with conditional logic, retries, and parallel execution for complex automation

TypeScript-native: full type inference for every Open Library Alternative tool response with IDE autocomplete and compile-time checks

One-command deployment to any Node.js host: Vercel, Railway, Fly.io, or your own infrastructure
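To make the retry and parallel-execution ideas concrete, here is a framework-independent sketch. It is not Mastra's workflow API. `withRetry` and `runInParallel` are hypothetical helpers that wrap any async tool call.

```typescript
type ToolCall<T> = () => Promise<T>;

interface RetryOptions {
  attempts: number;      // total tries, including the first one
  baseDelayMs: number;   // delay before the second try; doubles each time
  sleep?: (ms: number) => Promise<void>; // injectable for tests
}

const defaultSleep = (ms: number): Promise<void> =>
  new Promise<void>((resolve) => setTimeout(resolve, ms));

// Retry a call with exponential backoff, rethrowing the last error on failure.
async function withRetry<T>(call: ToolCall<T>, opts: RetryOptions): Promise<T> {
  if (opts.attempts < 1) throw new RangeError("attempts must be at least 1");
  const sleep = opts.sleep ?? defaultSleep;
  let lastError: unknown;
  for (let attempt = 0; attempt < opts.attempts; attempt++) {
    try {
      return await call();
    } catch (err) {
      lastError = err;
      // No delay after the final attempt.
      if (attempt < opts.attempts - 1) {
        await sleep(opts.baseDelayMs * 2 ** attempt);
      }
    }
  }
  throw lastError;
}

// Run independent calls concurrently, each with its own retry budget.
function runInParallel<T>(calls: ToolCall<T>[], opts: RetryOptions): Promise<T[]> {
  return Promise.all(calls.map((call) => withRetry(call, opts)));
}
```

Because `Promise.all` rejects as soon as one call exhausts its retries, a pipeline that should tolerate partial failure would use `Promise.allSettled` instead.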

Open Library Alternative + Mastra AI Use Cases

Practical scenarios where Mastra AI combined with the Open Library Alternative MCP Server delivers measurable value.

Automated workflows: build multi-step agents that query Open Library Alternative, process results, and trigger downstream actions in a typed pipeline

SaaS integrations: embed Open Library Alternative as a first-class tool in your product's AI features with Mastra's clean agent API

Background jobs: schedule Mastra agents to query Open Library Alternative on a cron and store results in your database automatically

Multi-agent systems: create specialist agents that collaborate using Open Library Alternative tools alongside other MCP servers
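A multi-step, typed pipeline of the kind described above might look like the following sketch. The tool names come from this page, but everything else is an assumption for illustration: the argument names (`query`, `isbn`), the `SearchHit` and `BookDetails` shapes, and the `CallTool` signature. The real tool responses may be structured differently.

```typescript
// Hypothetical response shapes; check the actual tool output before relying on them.
interface SearchHit { title: string; isbn?: string }
interface BookDetails { title: string; publishDate?: string }

// Any function that can invoke a named tool, e.g. a thin wrapper around an MCP client.
type CallTool = (name: string, args: Record<string, unknown>) => Promise<unknown>;

// Step 1: search. Step 2 (conditional): look up the first hit that carries an ISBN.
async function lookupFirstMatch(
  callTool: CallTool,
  query: string
): Promise<BookDetails | null> {
  const raw = await callTool("search_books", { query });
  const hits: SearchHit[] = Array.isArray(raw) ? (raw as SearchHit[]) : [];
  const match = hits.find((hit) => typeof hit.isbn === "string");
  if (!match || !match.isbn) return null; // nothing to look up, skip step 2
  const details = await callTool("get_book_by_isbn", { isbn: match.isbn });
  return details as BookDetails;
}
```

Injecting `callTool` keeps the pipeline testable with a stub and independent of how the tools are actually reached.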

Open Library Alternative MCP Tools for Mastra AI (3)

These 3 tools become available when you connect Open Library Alternative to Mastra AI via MCP:

get_author_works

Get all works by an author

get_book_by_isbn

Get book details by ISBN

search_books

Search for books by title or keyword
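Under the hood, an MCP client invokes a tool by sending a JSON-RPC 2.0 request with the method `tools/call`. The sketch below builds such a request for the three tool names listed above. `buildToolCall` is a hypothetical helper, and the argument object is left untyped because this page does not document each tool's parameters.

```typescript
// The three tool names listed on this page.
type ToolName = "get_author_works" | "get_book_by_isbn" | "search_books";

// Shape of an MCP tools/call request (JSON-RPC 2.0 envelope).
interface ToolCallRequest {
  jsonrpc: "2.0";
  id: number;
  method: "tools/call";
  params: { name: ToolName; arguments: Record<string, unknown> };
}

let nextId = 1;

// Build a request body; the caller decides how to send it.
function buildToolCall(name: ToolName, args: Record<string, unknown>): ToolCallRequest {
  return {
    jsonrpc: "2.0",
    id: nextId++,
    method: "tools/call",
    params: { name, arguments: args },
  };
}
```

With Mastra you normally never build this by hand, since the agent issues the calls for you. The union type is still useful on its own for catching a misspelled tool name at compile time.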

Example Prompts for Open Library Alternative in Mastra AI

Ready-to-use prompts you can give your Mastra AI agent to start working with Open Library Alternative immediately.

"Search for books by J.R.R. Tolkien on Open Library."

"Get details for the book with ISBN '9780141182704'."

"Find the author ID for 'Gabriel García Márquez'."

Troubleshooting Open Library Alternative MCP Server with Mastra AI

Common issues when connecting Open Library Alternative to Mastra AI through Vinkius, and how to resolve them.

createMCPClient not exported

Install the package: npm install @mastra/mcp

Open Library Alternative + Mastra AI FAQ

Common questions about integrating Open Library Alternative MCP Server with Mastra AI.

How does Mastra AI connect to MCP servers?

Create an MCPClient with the server URL and pass it to your agent. Mastra discovers all tools and makes them available with full TypeScript types.

Can Mastra agents use tools from multiple servers?

Does Mastra support workflow orchestration?

Connect Open Library Alternative with your favorite client

Step-by-step setup guides for every MCP-compatible client and framework:

Anthropic's native desktop app for Claude with built-in MCP support.

AI-first code editor with integrated LLM-powered coding assistance.

GitHub Copilot in VS Code with Agent mode and MCP support.

Purpose-built IDE for agentic AI coding workflows.

Autonomous AI coding agent that runs inside VS Code.

Anthropic's agentic CLI for terminal-first development.

Python SDK for building production-grade OpenAI agent workflows.

Google's framework for building production AI agents.

Type-safe agent development for Python with first-class MCP support.

TypeScript toolkit for building AI-powered web applications.

TypeScript-native agent framework for modern web stacks.

Python framework for orchestrating collaborative AI agent crews.

Leading Python framework for composable LLM applications.

Data-aware AI agent framework for structured and unstructured sources.

Microsoft's framework for multi-agent collaborative conversations.

Connect Open Library Alternative to Mastra AI

Get your token, paste the configuration, and start using 3 tools in under 2 minutes. No API key management needed.