TestMonitor MCP Server for LlamaIndex: 10 tools, connect in under 2 minutes

LlamaIndex specializes in data-aware AI agents that connect LLMs to structured and unstructured sources. Add TestMonitor as an MCP tool provider through Vinkius, and your agents can query, analyze, and act on live data alongside your existing indexes.


Vinkius supports streamable HTTP and SSE.

```python
import asyncio

from llama_index.tools.mcp import BasicMCPClient, McpToolSpec
from llama_index.core.agent.workflow import FunctionAgent
from llama_index.llms.openai import OpenAI


async def main():
    # Your Vinkius token (get it at cloud.vinkius.com)
    mcp_client = BasicMCPClient("https://edge.vinkius.com/[YOUR_TOKEN_HERE]/mcp")
    mcp_tool_spec = McpToolSpec(client=mcp_client)

    # Discover the tools exposed by the TestMonitor MCP server
    tools = await mcp_tool_spec.to_tool_list_async()

    agent = FunctionAgent(
        tools=tools,
        llm=OpenAI(model="gpt-4o"),
        system_prompt=(
            "You are an assistant with access to TestMonitor. "
            "You have 10 tools available."
        ),
    )

    response = await agent.run(
        "What tools are available in TestMonitor?"
    )
    print(response)


asyncio.run(main())
```
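
The example above hard-codes the tool count in the system prompt. As a minimal sketch, the prompt could instead be built from whatever tools were actually discovered. The helper name `build_system_prompt` is hypothetical (not part of LlamaIndex), and it assumes each tool exposes `.metadata.name`, as LlamaIndex tool objects do. Stand-in objects are used so the sketch runs offline.

```python
from types import SimpleNamespace


def build_system_prompt(tools, service="TestMonitor"):
    """Build a system prompt listing the tools that were actually discovered.

    Hypothetical helper for illustration; assumes each tool has `.metadata.name`.
    """
    names = sorted(tool.metadata.name for tool in tools)
    return (
        f"You are an assistant with access to {service}. "
        f"You have {len(names)} tools available: {', '.join(names)}."
    )


if __name__ == "__main__":
    # Stand-in tool objects so the sketch runs without a network connection.
    fake_tools = [
        SimpleNamespace(metadata=SimpleNamespace(name="list_projects")),
        SimpleNamespace(metadata=SimpleNamespace(name="list_issues")),
    ]
    print(build_system_prompt(fake_tools))
```

In the agent script, the result could be passed as `system_prompt=build_system_prompt(tools)` after the tool list has been fetched.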

* Every MCP server runs on Vinkius-managed infrastructure inside AWS: a purpose-built runtime with per-request V8 isolates, Ed25519-signed audit chains, and sub-40ms cold starts optimized for native MCP execution.

About TestMonitor MCP Server

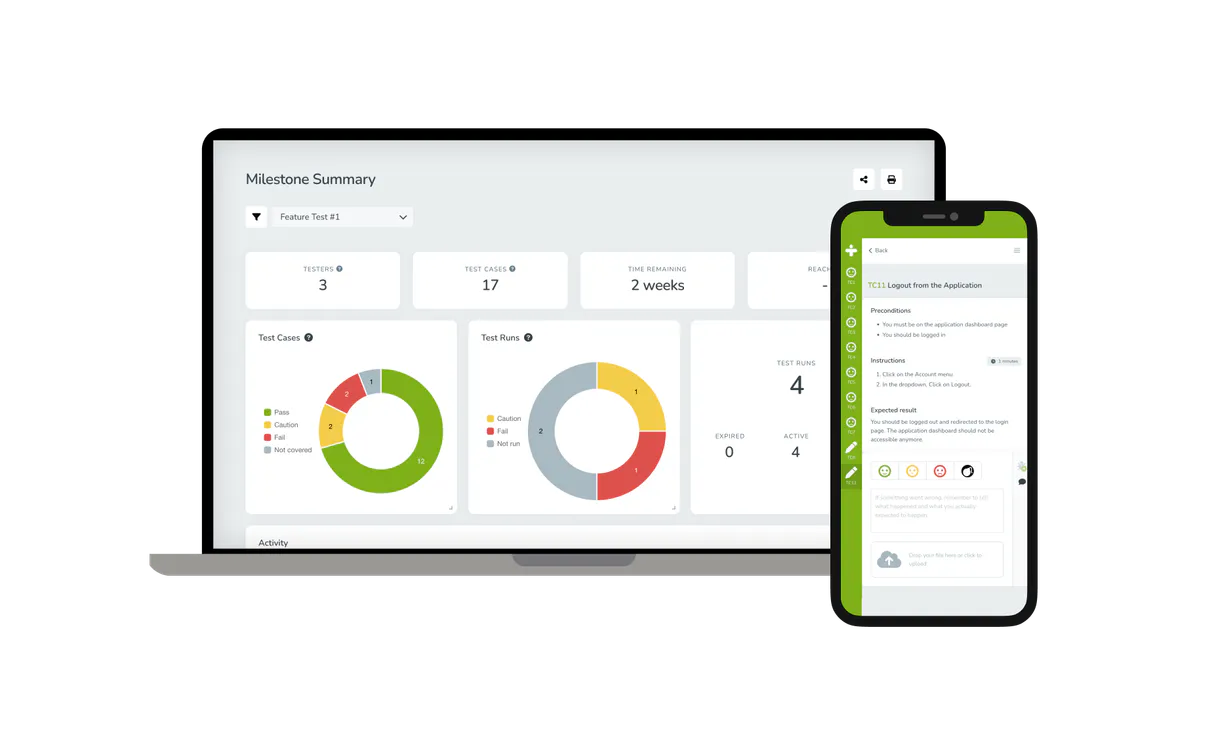

Link up your TestMonitor cloud infrastructure with any AI agent to streamline QA tracking operations and retrieve real-time milestone data without having to navigate web dashboards.

LlamaIndex agents combine TestMonitor tool responses with indexed documents for comprehensive, grounded answers. Connect 10 tools through Vinkius and query live data alongside vector stores and SQL databases in a single turn, which suits hybrid search, data enrichment, and analytical workflows.

What you can do

- Project Triage — List all ongoing projects alongside their high-level metadata such as test coverage and delivery status

- Runs & Milestones Tracking — Instantly retrieve project-scoped test runs, milestones lists, and deadline progress

- Defect Auditing — Query all generated issues or software defects explicitly linked to a specific test project

- Requirement Tracing — Ask the agent to map requirements against existing feature specifications without manually matching them in the UI

- Team Management Lookup — Easily list out all the users provisioned in the workspace to confirm roles or debugging ownership

The TestMonitor MCP Server exposes 10 tools through Vinkius. Connect it to LlamaIndex in under two minutes, with no API keys to rotate, no infrastructure to provision, and no vendor lock-in. Your configuration, your data, your control.

How to Connect TestMonitor to LlamaIndex via MCP

Follow these steps to integrate the TestMonitor MCP Server with LlamaIndex.

- Install dependencies: run pip install llama-index-tools-mcp llama-index-llms-openai

- Replace the token: replace [YOUR_TOKEN_HERE] with your Vinkius token

- Run the agent: save the code to agent.py and run python agent.py

- Explore tools: the agent discovers 10 tools from TestMonitor
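
For the token step, one option is to keep the token out of the source file. The sketch below reads it from an environment variable and builds the endpoint URL used in the example. `VINKIUS_TOKEN` and `build_endpoint` are names chosen for this illustration, not something Vinkius defines; only the URL pattern comes from the example above.

```python
import os

# URL pattern taken from the example; the token is substituted at runtime.
VINKIUS_ENDPOINT = "https://edge.vinkius.com/{token}/mcp"


def build_endpoint(token=None):
    """Return the MCP endpoint URL for a Vinkius token.

    VINKIUS_TOKEN is a hypothetical environment variable name used in this sketch.
    """
    token = token or os.environ.get("VINKIUS_TOKEN", "")
    if not token or token == "[YOUR_TOKEN_HERE]":
        # Fail early instead of sending requests with the placeholder.
        raise ValueError("Set VINKIUS_TOKEN or pass a real token")
    return VINKIUS_ENDPOINT.format(token=token)
```

The returned string could then be passed to `BasicMCPClient(...)` in place of the hard-coded URL.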

Why Use LlamaIndex with the TestMonitor MCP Server

LlamaIndex provides unique advantages when paired with TestMonitor through the Model Context Protocol.

Data-first architecture: LlamaIndex agents combine TestMonitor tool responses with indexed documents for comprehensive, grounded answers

Query pipeline framework lets you chain TestMonitor tool calls with transformations, filters, and re-rankers in a typed pipeline

Multi-source reasoning: agents can query TestMonitor, a vector store, and a SQL database in a single turn and synthesize results

Observability integrations show exactly what TestMonitor tools were called, what data was returned, and how it influenced the final answer
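
Multi-source agents need a single combined tool list. The following is a minimal sketch of a hypothetical helper, `merge_tools`, which is not part of LlamaIndex. It assumes every tool exposes `.metadata.name` and rejects duplicate names, so a local tool cannot silently shadow a TestMonitor tool.

```python
def merge_tools(*tool_groups):
    """Combine several tool lists into one, refusing duplicate tool names.

    Hypothetical helper; each tool is assumed to have `.metadata.name`.
    """
    merged, seen = [], set()
    for group in tool_groups:
        for tool in group:
            name = tool.metadata.name
            if name in seen:
                raise ValueError(f"Duplicate tool name: {name}")
            seen.add(name)
            merged.append(tool)
    return merged
```

The merged list could be passed as `tools=` when constructing the agent, for example after combining the MCP tools with a query-engine tool built over your own index.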

TestMonitor + LlamaIndex Use Cases

Practical scenarios where LlamaIndex combined with the TestMonitor MCP Server delivers measurable value.

Hybrid search: combine TestMonitor real-time data with embedded document indexes for answers that are both current and comprehensive

Data enrichment: query TestMonitor to augment indexed data with live information before generating user-facing responses

Knowledge base agents: build agents that maintain and update knowledge bases by periodically querying TestMonitor for fresh data

Analytical workflows: chain TestMonitor queries with LlamaIndex's data connectors to build multi-source analytical reports
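
The knowledge-base scenario relies on periodic querying. A minimal sketch of such a loop with `asyncio` follows. The `poll` function and its parameters are illustrative assumptions; `fetch` stands for any zero-argument callable that returns an awaitable, such as a wrapper around an agent call.

```python
import asyncio


async def poll(fetch, on_result, interval_seconds, max_rounds):
    """Await `fetch()` repeatedly and hand each result to `on_result`.

    Sleeps `interval_seconds` between rounds and stops after `max_rounds`.
    """
    for round_number in range(max_rounds):
        result = await fetch()
        on_result(result)
        if round_number < max_rounds - 1:
            await asyncio.sleep(interval_seconds)


if __name__ == "__main__":
    async def fake_fetch():
        # Stand-in for a real query so the sketch runs offline.
        return "fresh data"

    asyncio.run(poll(fake_fetch, print, interval_seconds=0.1, max_rounds=2))
```

With a real agent, `fetch` might be `lambda: agent.run("List all issues for Project 8840.")`, and `on_result` would write into whatever store backs the knowledge base.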

TestMonitor MCP Tools for LlamaIndex (10)

These 10 tools become available when you connect TestMonitor to LlamaIndex via MCP:

- get_project_details: Retrieves details for a specific TestMonitor project

- get_test_case_details: Retrieves full details for a specific TestMonitor test case

- get_test_run_details: Retrieves details for a specific TestMonitor test run

- list_account_users: Lists all users associated with the TestMonitor account

- list_issues: Lists all issues (defects) within a project

- list_milestones: Lists all milestones within a project

- list_projects: Lists all projects available on the TestMonitor instance. Project IDs are required for most other tools

- list_requirements: Lists all requirements for a project

- list_test_cases: Lists all test cases within a specific TestMonitor project

- list_test_runs: Lists all test runs within a specific project
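
After connecting, it can be useful to confirm that the discovered tools match the ten names listed above. The sketch below compares names only. `check_tools` is a hypothetical helper, and it assumes each discovered tool exposes `.metadata.name`.

```python
# The ten tool names documented for the TestMonitor MCP Server.
EXPECTED_TOOLS = {
    "get_project_details",
    "get_test_case_details",
    "get_test_run_details",
    "list_account_users",
    "list_issues",
    "list_milestones",
    "list_projects",
    "list_requirements",
    "list_test_cases",
    "list_test_runs",
}


def check_tools(tools):
    """Return (missing, unexpected) tool names relative to the documented list."""
    found = {tool.metadata.name for tool in tools}
    missing = sorted(EXPECTED_TOOLS - found)
    unexpected = sorted(found - EXPECTED_TOOLS)
    return missing, unexpected
```

Calling `check_tools(tools)` on the list returned by `to_tool_list_async()` should give two empty lists if the server exposes exactly the documented tools.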

Example Prompts for TestMonitor in LlamaIndex

Ready-to-use prompts you can give your LlamaIndex agent to start working with TestMonitor immediately.

"List all TestMonitor projects."

"Get me the details for Test Case ID 5521 from project 8840."

"List all issues for Project 8840."

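The prompts above can also be sent in a batch. This is a minimal sketch, assuming an agent object with an awaitable `run(prompt)` method, as in the example at the top of the page. `run_prompts` is a hypothetical helper, and a stand-in agent is used so the code runs without credentials.

```python
import asyncio

PROMPTS = [
    "List all TestMonitor projects.",
    "Get me the details for Test Case ID 5521 from project 8840.",
    "List all issues for Project 8840.",
]


async def run_prompts(agent, prompts):
    """Send prompts one at a time and collect (prompt, answer) pairs."""
    answers = []
    for prompt in prompts:
        response = await agent.run(prompt)
        answers.append((prompt, str(response)))
    return answers


class EchoAgent:
    """Stand-in agent for offline testing; it only echoes the prompt."""

    async def run(self, prompt):
        return f"echo: {prompt}"


if __name__ == "__main__":
    for prompt, answer in asyncio.run(run_prompts(EchoAgent(), PROMPTS)):
        print(prompt, "->", answer)
```
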
Troubleshooting TestMonitor MCP Server with LlamaIndex

Common issues when connecting TestMonitor to LlamaIndex through Vinkius, and how to resolve them.

BasicMCPClient not found
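
When this import fails, a quick check like the sketch below can show whether the package is visible to the interpreter you are actually running, which is a common cause when several virtual environments are in use. `module_available` is a hypothetical helper built on the standard library's `importlib.util.find_spec`. If it reports False, installing the package into that interpreter is the likely fix.

```python
import importlib.util
import sys


def module_available(name):
    """Return True if module `name` can be located by the current interpreter."""
    try:
        return importlib.util.find_spec(name) is not None
    except (ImportError, ValueError):
        # A missing parent package raises ModuleNotFoundError (an ImportError).
        return False


if __name__ == "__main__":
    print("interpreter:", sys.executable)
    print("llama_index.tools.mcp importable:",
          module_available("llama_index.tools.mcp"))
```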

pip install llama-index-tools-mcp

TestMonitor + LlamaIndex FAQ

Common questions about integrating TestMonitor MCP Server with LlamaIndex.

How does LlamaIndex connect to MCP servers?

Can I combine MCP tools with vector stores?

Does LlamaIndex support async MCP calls?


Connect TestMonitor to LlamaIndex

Get your token, paste the configuration, and start using 10 tools in under 2 minutes. No API key management needed.