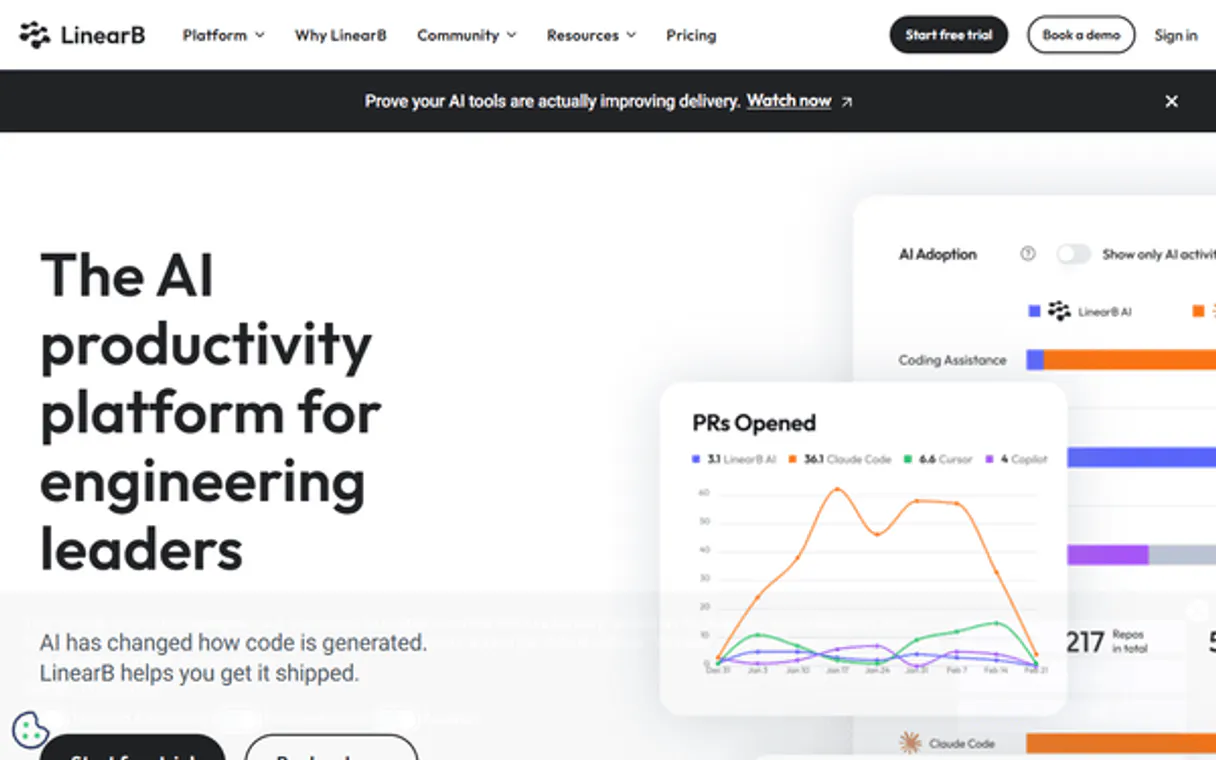

LinearB MCP. Automate DORA metrics and incident reporting.

Works with every AI agent you already use

…and any MCP-compatible client

Just plug in your AI agents and start using Vinkius.

LinearB MCP Server connects your AI agent directly to critical engineering intelligence data. It lets you query DORA metrics, track software deployments via Git refs, and report incidents—all through natural language prompts.

Stop opening dashboards; get real-time operational performance reports instantly.

What your AI agents can do

List connected repos

Lists every repository currently linked to your LinearB account.

List engineering teams

Retrieves a list of all defined engineering teams in the system.

List software deployments

Fetches records of recent software deployments from LinearB.

The agent asks for specific engineering metrics (e.g., cycle time, coding time) and returns a structured analysis of team performance.

You send the tool a repository ID and Git reference, informing LinearB that a new version is live in production.

The agent records a new incident event, specifying the provider and start time to calculate Mean Time To Recovery (MTTR).

You ask for and receive a list of all repositories currently connected to LinearB.

The agent fetches the full roster of teams defined within your LinearB instance.


LinearB MCP Server: 7 Tools for DevOps Operations

Use these seven tools to interact with LinearB data through your AI client. Track everything from metrics queries to incident logging.

list_connected_repos

Lists every repository currently linked to your LinearB account.

list_engineering_teams

Retrieves a list of all defined engineering teams in the system.

list_software_deployments

Fetches records of recent software deployments from LinearB.

list_software_incidents

Retrieves a list of logged engineering incidents for review.

query_software_metrics

Queries specific software engineering metrics (like cycle time) using structured JSON input and defined time ranges.

record_new_deployment

Logs a new successful deployment event, requiring the target repository ID and Git reference (SHA or tag).

record_new_incident

Creates an incident record, needing a provider ID and the exact time the failure started.

Choose How to Get Started

Build a custom MCP for your own tools, or connect a ready-made integration from our catalog.

Build Your Own

Turn any API into an MCP. Import a spec, define Agent Skills, or deploy with MCPFusion.

- Import from OpenAPI, Swagger, or YAML specs

- Create Agent Skills with progressive disclosure

- Deploy to edge with MCPFusion framework

- Built in DLP, auth, and compliance on every call

- Real time usage dashboard and cost metering

- Publish to catalog or keep private

Make Your AI Do More

Start with LinearB, then connect any of our 4,700+ other servers whenever your AI needs more. One click, no limits.

- Use this MCP plus 4,700+ others, all in one place

- Add new capabilities to your AI anytime you want

- Every connection is secured and compliant automatically

- Track usage and costs across all your servers

- Works with Claude, ChatGPT, Cursor, and more

- New servers added to the catalog every week

What you can do with this MCP connector

Connect your AI agent directly to LinearB for engineering intelligence. It lets you pull DORA metrics, track deployments via Git refs, and log incidents—all through natural language prompts. You stop opening dashboards; your agent gets real-time operational reports instantly.

Querying Performance Metrics: Your agent asks for specific software performance data, like cycle time or coding time across defined teams. It uses structured JSON input and specific time ranges to return a detailed analysis of team throughput via the query_software_metrics tool. You can get an immediate read on where your bottlenecks are.
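As a rough illustration of the "structured JSON input and specific time ranges" mentioned above, here is a minimal Python sketch that assembles such a payload. The field names (`metrics`, `team_ids`, `time_range`) and the team ID are assumptions made for illustration; the actual schema expected by query_software_metrics may differ.

```python
import json
from datetime import date


def build_metrics_query(metrics, team_ids, start, end):
    """Assemble a hypothetical argument payload for query_software_metrics."""
    if start > end:
        raise ValueError("start date must not be after end date")
    return {
        "metrics": list(metrics),      # assumed field name
        "team_ids": list(team_ids),    # assumed field name
        "time_range": {                # assumed field name
            "start": start.isoformat(),
            "end": end.isoformat(),
        },
    }


query = build_metrics_query(
    metrics=["cycle_time", "coding_time"],
    team_ids=[42],  # placeholder team ID
    start=date(2024, 1, 1),
    end=date(2024, 1, 31),
)
print(json.dumps(query, indent=2))
```

Rejecting an inverted date range before the call is made keeps an obviously malformed query from reaching the server.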

Inventory and Team Identification: To start, you'll first use the list_connected_repos tool to pull every repository currently linked to LinearB. If you need organizational context, the agent runs list_engineering_teams, giving you a full roster of all defined groups in the system.

Tracking Deployments: You can check recent software deployments by running list_software_deployments. When something actually goes live, your agent reports it using record_new_deployment. This requires you to pass both the target repository ID and the specific Git reference (that's an SHA or a tag) so LinearB logs the successful rollout.
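The two required inputs can be checked before the agent or a script calls record_new_deployment. The sketch below is illustrative only: the argument names `repository_id` and `ref_name` are assumptions, and the SHA check is a simple heuristic (7 to 40 hexadecimal characters), not a rule taken from LinearB.

```python
import re

# Heuristic: abbreviated or full Git SHAs are 7 to 40 hex characters.
SHA_PATTERN = re.compile(r"^[0-9a-f]{7,40}$")


def classify_git_ref(ref):
    """Return 'sha' if the ref looks like a commit hash, otherwise 'tag'."""
    return "sha" if SHA_PATTERN.match(ref.lower()) else "tag"


def build_deployment_args(repository_id, ref_name):
    """Build hypothetical arguments for record_new_deployment."""
    ref = ref_name.strip()
    if not ref:
        raise ValueError("a Git SHA or tag is required")
    return {"repository_id": repository_id, "ref_name": ref}  # assumed names


args = build_deployment_args(1234, "v1.4.2")  # placeholder repository ID
print(args, classify_git_ref(args["ref_name"]))
```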

Logging Incidents: To keep your operational metrics accurate, the agent runs list_software_incidents to show all logged engineering failures for review. If something breaks right now, you use record_new_incident. This tool needs two things: a provider ID and the exact time the failure started so it can accurately calculate Mean Time To Recovery (MTTR).
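Because MTTR depends on an exact start time, it helps to send an unambiguous, timezone-aware timestamp. The following sketch assumes the tool accepts fields named `provider_id` and `started_at` with an ISO 8601 value; both names and the format are assumptions rather than documented behavior.

```python
from datetime import datetime, timezone


def build_incident_args(provider_id, started_at=None):
    """Build hypothetical arguments for record_new_incident."""
    started = started_at or datetime.now(timezone.utc)
    if started.tzinfo is None:
        # A naive timestamp is ambiguous and would skew MTTR.
        raise ValueError("started_at must be timezone-aware")
    return {
        "provider_id": provider_id,  # assumed field name
        "started_at": started.astimezone(timezone.utc).isoformat(),  # assumed
    }


incident = build_incident_args(
    "provider-123",  # placeholder provider ID
    datetime(2024, 3, 5, 2, 0, tzinfo=timezone.utc),
)
print(incident)
```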

This server gives your agent full visibility into what's running, who owns it, how fast teams are moving, and where everything breaks down.

How LinearB MCP Works

1. First, subscribe to the server and provide your LinearB Public API Key.

2. Next, you tell your AI client what data you need, for example: 'What's the average cycle time for Team X over the last 30 days?'

3. The agent uses the appropriate tool (e.g., query_software_metrics) to pull the raw data and presents it back to you in a clean, natural language summary.

The bottom line is that your AI client handles the API calls; you just ask for what you need.
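Under the hood, an MCP client invokes a tool by sending a JSON-RPC 2.0 request with the method `tools/call`. The sketch below shows the shape of such a message for one of this server's tools. Your AI client builds this for you; the helper name `make_tool_call` is hypothetical, and the empty `arguments` object is an assumption about what list_engineering_teams accepts.

```python
import json


def make_tool_call(request_id, tool_name, arguments):
    """Build an MCP tools/call request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


request = make_tool_call(1, "list_engineering_teams", {})
print(json.dumps(request, indent=2))
```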

Who Is LinearB MCP For?

The Engineering Manager who's tired of manually cross-referencing cycle time data across three different dashboards. The DevOps Engineer running a release pipeline that needs to log deployment success automatically. And the CTO who needs an instant, auditable view of DORA metrics without opening the main dashboard.

DevOps Engineer: Runs automated scripts or CI/CD jobs that need to log deployments (record_new_deployment) or report incidents (record_new_incident) directly into LinearB.

Engineering Manager: Queries team performance metrics, like average cycle time, by simply asking the agent a question instead of building a complex query in the web UI.

CTO: Audits organizational health and DORA compliance, asking for aggregated reports to quickly identify bottlenecks across multiple teams.

What Changes When You Connect

- Stop building custom metric dashboards. Using query_software_metrics lets your agent pull complex data, like separating coding time from pickup time, with a single prompt, giving you instant insights into delivery bottlenecks.

- Keep your operational records accurate by using record_new_incident. When an outage happens, the AI client logs it immediately, ensuring your MTTR metrics are correct and auditable.

- Manage releases without manual input. If your CI/CD pipeline finishes a build, running record_new_deployment instantly updates LinearB with the Git ref, linking the code change to performance data.

- Understand your scope at a glance. Use list_connected_repos and list_engineering_teams to map out exactly which systems are reporting metrics. This is useful when onboarding new teams or auditing permissions.

- Get historical context fast. Instead of digging through pages, calling list_software_incidents gives you a rapid history of past outages, letting you track how often certain providers fail.

Real-World Use Cases

Auditing Team Performance Post-Quarterly Review

A CTO needs to know if the Backend team is slowing down. Instead of opening LinearB and running multiple reports, they prompt their agent: 'What's the average cycle time for the Backend team over the last 90 days?' The agent uses query_software_metrics and delivers a concise answer in seconds.

Automating CI/CD Release Logging

The DevOps Engineer's pipeline finishes a successful build (SHA: abc123). Instead of having a human manually log it, the script calls record_new_deployment. This instantly updates LinearB, linking the performance data to that specific code commit.
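In a pipeline, the commit SHA usually comes from the CI system itself rather than from a person. A minimal sketch of that lookup is shown below, reading `GITHUB_SHA` (GitHub Actions) or `CI_COMMIT_SHA` (GitLab CI); which variable applies depends on your CI provider, and how the value is then passed to record_new_deployment is left out because that wiring is specific to your setup.

```python
import os

# Environment variables set by common CI systems for the current commit.
CI_SHA_VARIABLES = ("GITHUB_SHA", "CI_COMMIT_SHA")


def resolve_commit_sha(environ=None):
    """Return the commit SHA exposed by the CI environment."""
    env = os.environ if environ is None else environ
    for name in CI_SHA_VARIABLES:
        value = env.get(name, "").strip()
        if value:
            return value
    raise RuntimeError("no commit SHA found in the CI environment")


# Example with an explicit mapping instead of the real environment.
print(resolve_commit_sha({"GITHUB_SHA": "abc123"}))
```

Failing loudly when no SHA is present avoids logging a deployment against the wrong or an empty reference.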

Logging an Unexpected Production Outage

A critical service fails at 2 am. The on-call engineer opens their chat interface and asks their agent: 'Log a new incident for AuthService starting now.' The agent uses record_new_incident, capturing the start time needed to calculate MTTR.

Mapping Out Service Dependencies

A new manager joins the team and needs to understand what services exist. They run 'List all connected repositories,' using list_connected_repos. The agent provides a clean list, letting them grasp the full technical scope immediately.

The Tradeoffs

Asking for Metrics Without Timeframes

Prompting: 'What is the cycle time?' without specifying dates or teams.

→

The agent needs more context. Always specify parameters using query_software_metrics. Example: 'Query software metrics for team X between [start date] and [end date].'
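One way to avoid ambiguous prompts is to turn a relative phrase such as "the last 30 days" into explicit dates before asking. The helper below is a hypothetical sketch of that conversion using only the standard library; it does not reflect how the server itself interprets time ranges.

```python
from datetime import date, timedelta


def last_n_days(days, today=None):
    """Return (start, end) dates covering the previous `days` days."""
    if days <= 0:
        raise ValueError("days must be positive")
    end = today or date.today()
    start = end - timedelta(days=days)
    return start, end


start, end = last_n_days(30, today=date(2024, 3, 31))
print(f"Query software metrics for team X between {start} and {end}.")
```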

Trying to Log Data Manually

A human manually reading a deployment log and trying to remember the SHA or tag.

→

Use automated processes. When a deploy happens, call record_new_deployment from your CI/CD script using the exact Git ref provided by the build system.

Assuming Incidents are Automatic

Thinking that LinearB automatically tracks every outage without intervention.

→

You must log failures. Use record_new_incident immediately when an issue starts, providing the provider ID and the accurate start time.

When It Fits, When It Doesn't

Use this MCP Server if your primary need is to query or modify structured operational data—metrics, deployments, incidents—that already live in LinearB. You want your AI agent to act like a thin wrapper around your existing API layer.

Don't use this if you just need unstructured search (use an indexed knowledge base tool instead). Also, don't rely on it for general chat or documentation; it only handles defined metrics and events. If the data source is outside of LinearB entirely, this server won't help—you’ll need a different connector that points to that specific platform.

Independent Platform Disclaimer: Vinkius is an independent platform and is not affiliated with, endorsed by, sponsored by, verified by, or otherwise authorized by LinearB. All third-party trademarks, logos, and brand names are the property of their respective owners. Their use on this website is strictly for informational purposes to identify service compatibility and interoperability.

VINKIUS INFRASTRUCTURE

Cloud Hosted

Managed infra

V8 Isolated

Sandboxed per request

Zero-Trust Proxy

No stored credentials

DLP Enforced

Policy on every call

GDPR Compliant

EU data residency

Token Compression

~60% cost reduction

Works with Claude, ChatGPT, Cursor, and more

The Model Context Protocol standardizes how applications expose capabilities to LLMs. Instead of operating in isolation, your AI gains direct access to external platforms, live data, and real-world actions through secure, standardized connections.

This server provides 7 capabilities that interface natively with Claude, ChatGPT, Cursor, and any MCP client. No middleware. No custom integration required.

Available Capabilities

Dashboard fatigue shouldn't require three tabs open.

Today, checking team performance means opening the LinearB dashboard. Then you navigate to 'Metrics,' select the correct time range—usually 30 days back—filter by department, and finally run a report on cycle time. It's slow, it's click-heavy, and it takes context switching just to answer a simple question.

With this MCP server, your agent handles all that overhead. You simply ask: 'What was the average cycle time for the QA team last month?' The agent runs `query_software_metrics`, gets the raw data, and spits out the final number in one prompt. It cuts the process down to two steps.

LinearB MCP Server: Log deployments & incidents instantly.

Before this, logging a new deployment required either navigating to a specific API endpoint or manually updating an internal spreadsheet. If the pipeline failed to write that record, your DORA metrics would be incomplete, and your audit trail was broken.

Now, your agent handles it. You run `record_new_deployment` directly from your IDE or chat interface. The data gets logged reliably and immediately. Your operational intelligence stays current without you lifting a finger.

Common Questions About LinearB MCP

How do I query cycle time using the LinearB MCP Server?

You use query_software_metrics. You must provide a JSON body specifying the desired metrics (like cycle_time) and the exact time range you want to check.

What is needed to record a new deployment via LinearB?

You use record_new_deployment. This tool requires two specific pieces of information: the repository ID and the Git reference (the SHA or tag) for that release.

Can I list all my connected repositories with this server?

Yes, run list_connected_repos. This tool pulls a clean roster of every single repository currently linked to your LinearB account for auditing purposes.

Which tool should I use if an incident occurs right now?

Use record_new_incident immediately. It requires the provider ID and the exact start time of the failure so it can correctly calculate MTTR when the investigation is done.
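For reference, MTTR is the average of the recovery durations across incidents, which is why an accurate start time matters. The sketch below computes it from (start, end) pairs with made-up timestamps; it illustrates the arithmetic only and is not LinearB's implementation.

```python
from datetime import datetime, timedelta


def mean_time_to_recovery(incidents):
    """Average (end - start) over a list of (start, end) datetime pairs."""
    durations = [end - start for start, end in incidents]
    if not durations:
        raise ValueError("at least one resolved incident is required")
    return sum(durations, timedelta()) / len(durations)


sample = [
    (datetime(2024, 3, 5, 2, 0), datetime(2024, 3, 5, 2, 30)),    # 30 minutes
    (datetime(2024, 3, 9, 14, 0), datetime(2024, 3, 9, 15, 30)),  # 90 minutes
]
print(mean_time_to_recovery(sample))
```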

How do I authenticate my AI agent when using any tool on LinearB?

You must provide a valid LinearB Public API Key. This key is entered into your client's configuration settings, which grants your agent access to all available tools. Always treat this key like a password.

If I need to query metrics for a specific team, which tool should I call first?

Use the list_engineering_teams tool first. This retrieves all defined team names and their associated IDs within LinearB. You must supply these exact IDs when calling query_software_metrics to get accurate data.
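The lookup step can be sketched as follows. The response shape (a list of objects with `id` and `name` keys) and the sample teams are assumptions for illustration; check the actual output of list_engineering_teams before relying on these field names.

```python
def find_team_id(teams, name):
    """Return the ID of the single team whose name matches, ignoring case."""
    wanted = name.strip().casefold()
    matches = [team["id"] for team in teams if team["name"].casefold() == wanted]
    if len(matches) != 1:
        raise LookupError(f"expected exactly one team named {name!r}, found {len(matches)}")
    return matches[0]


# Hypothetical result of list_engineering_teams.
teams = [{"id": 101, "name": "Backend"}, {"id": 102, "name": "QA"}]
print(find_team_id(teams, "backend"))
```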

Is there a rate limit if I call `record_new_deployment` many times in one minute?

Yes, the server enforces standard API rate limits. If you exceed them, your agent will receive a 429 error response. You’ll need to implement an exponential backoff strategy in your calling code.
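A minimal sketch of exponential backoff with jitter is shown below. It assumes your calling code raises a `RateLimited` exception (a hypothetical class defined here) when it sees a 429 response; the retry counts and delays are illustrative defaults, not values published for this server.

```python
import random
import time


class RateLimited(Exception):
    """Raised by the caller-supplied function when it receives a 429."""


def call_with_backoff(func, max_attempts=5, base_delay=0.5, sleep=time.sleep):
    """Call func, retrying on RateLimited with exponentially growing delays."""
    for attempt in range(max_attempts):
        try:
            return func()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # give up after the final attempt
            delay = base_delay * (2 ** attempt)
            # Jitter spreads out retries from concurrent callers.
            sleep(delay + random.uniform(0, delay / 2))
```

Passing `sleep` as a parameter makes the retry logic easy to test without actually waiting.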

What's the difference between using `list_software_deployments` and running a metric query?

Listing deployments shows raw, historical records (SHA or tag) for specific releases. Running a metric query calculates averages—like cycle time or coding time—across multiple deployments within defined date ranges.

How do I query cycle time for a specific team?

Use the query_software_metrics tool and include the team name or ID in the group_by parameter of your JSON query.

What is the difference between coding_time and pickup_time?

Coding time is the duration from the first commit to the PR creation. Pickup time is the duration from the PR creation to the first review activity.
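The two definitions above reduce to simple timestamp subtraction. The sketch below works through one pull request with made-up timestamps; the function name is hypothetical and the code only illustrates the definitions, not how LinearB computes the metrics internally.

```python
from datetime import datetime, timedelta


def pr_phase_durations(first_commit, pr_created, first_review):
    """Split a PR's early lifecycle into coding time and pickup time."""
    if not first_commit <= pr_created <= first_review:
        raise ValueError("timestamps must be in chronological order")
    return {
        "coding_time": pr_created - first_commit,   # first commit -> PR creation
        "pickup_time": first_review - pr_created,   # PR creation -> first review
    }


phases = pr_phase_durations(
    first_commit=datetime(2024, 5, 1, 9, 0),
    pr_created=datetime(2024, 5, 2, 15, 0),
    first_review=datetime(2024, 5, 3, 10, 0),
)
print(phases)
```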

Can I report a release from the agent?

Absolutely. Use the record_new_deployment tool with the Git SHA or tag and the repository ID to inform LinearB that a deployment has occurred.

Use it with your favorite AI tools

Connect this server to Cursor, Claude, VS Code, and more.
