Runn MCP Server for Vercel AI SDK: 12 tools, connect in under 2 minutes

The Vercel AI SDK is the TypeScript toolkit for building AI-powered applications. Connect Runn through Vinkius and every tool is available as a typed function, ready for React Server Components, API routes, or any Node.js backend.


Vinkius supports streamable HTTP and SSE.

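import { createMCPClient } from "@ai-sdk/mcp";
import { generateText, stepCountIs } from "ai";
import { openai } from "@ai-sdk/openai";

async function main() {
  const mcpClient = await createMCPClient({
    transport: {
      type: "http",
      // Your Vinkius token: get it at cloud.vinkius.com
      url: "https://edge.vinkius.com/[YOUR_TOKEN_HERE]/mcp",
    },
  });

  try {
    // Discover the Runn tools exposed by the MCP server
    const tools = await mcpClient.tools();

    const { text } = await generateText({
      model: openai("gpt-4o"),
      tools,
      // Allow follow-up steps so the model can write an answer after calling tools
      // (the default stops after the first step, which may leave text empty)
      stopWhen: stepCountIs(5),
      prompt: "Using Runn, list all available capabilities.",
    });

    console.log(text);
  } finally {
    // Always close the MCP connection, even if generation fails
    await mcpClient.close();
  }
}

main().catch((error) => {
  console.error(error);
  process.exitCode = 1;
});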

Every MCP server runs on Vinkius-managed infrastructure inside AWS: a purpose-built runtime with per-request V8 isolates, Ed25519-signed audit chains, and sub-40ms cold starts optimized for native MCP execution.

About Runn MCP Server

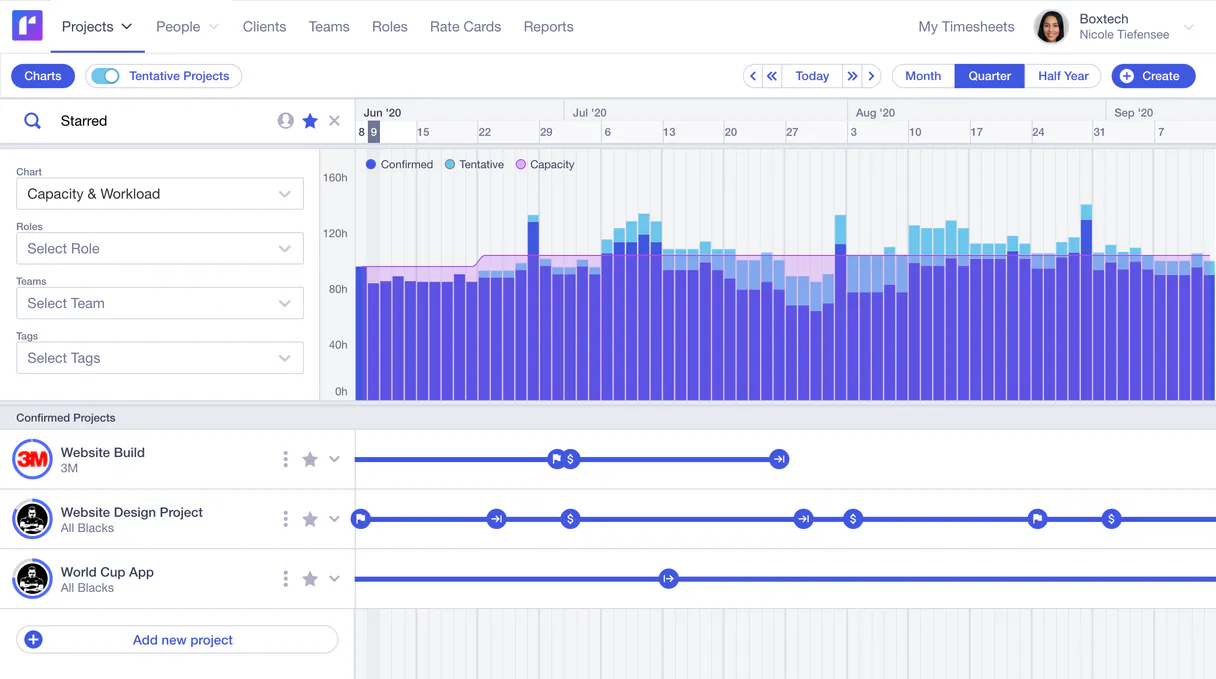

Integrate your conversational AI natively with Runn, the premier real-time resource planning and forecasting platform. This integration enables your assistant to pull essential project metadata, capacity bottlenecks, people configurations, team allocations, and timesheet metrics directly into your sessions.

The Vercel AI SDK gives every Runn tool full TypeScript type inference, IDE autocomplete, and compile-time error checking. Connect 12 tools through Vinkius and stream results progressively to React, Svelte, or Vue components. It works on Edge Functions, Cloudflare Workers, and any Node.js runtime.

What you can do

- Analyze Projects & Resources: Extract ongoing engagement details, milestones, and client associations by listing projects and clients (list_projects, list_clients). Request a detailed readout of an individual project (get_project).
- Audit Roles & Assignments: Find exactly who is assigned to which phase by mapping active allocations (list_assignments, list_phases). Look up team members' details (list_people, get_person) or review globally defined roles (list_roles).
- Review Budgets & Actuals: Pull logged timesheet hours (list_actuals) to compare planned work against billed hours. Account for non-working days via the holidays list (list_holidays).
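The capability groups above map onto subsets of tool names. If an agent should only see one group, you can filter the record returned by mcpClient.tools() before passing it to generateText. This is a minimal sketch that assumes only that the tool set is a plain object keyed by tool name; the group keys and the pickTools helper are hypothetical, and tools not mentioned in the bullets (list_milestones, list_teams) are deliberately left out of every group.

```typescript
// Hypothetical capability groups mirroring the "What you can do" bullets.
const RUNN_CAPABILITIES = {
  projects: ["list_projects", "list_clients", "get_project"],
  assignments: ["list_assignments", "list_phases", "list_people", "get_person", "list_roles"],
  actuals: ["list_actuals", "list_holidays"],
} as const;

type Capability = keyof typeof RUNN_CAPABILITIES;

// Return a new record containing only the tools that belong to the requested groups.
// Works with any record keyed by tool name, such as the result of mcpClient.tools().
function pickTools<T>(tools: Record<string, T>, groups: readonly Capability[]): Record<string, T> {
  const wanted = new Set<string>();
  for (const group of groups) {
    for (const name of RUNN_CAPABILITIES[group]) wanted.add(name);
  }
  const picked: Record<string, T> = {};
  for (const [name, tool] of Object.entries(tools)) {
    if (wanted.has(name)) picked[name] = tool;
  }
  return picked;
}
```

For example, passing tools: pickTools(await mcpClient.tools(), ["actuals"]) to generateText would expose only list_actuals and list_holidays to the model, which keeps the tool list short for narrowly scoped tasks.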

The Runn MCP Server exposes 12 tools through Vinkius. Connect it to Vercel AI SDK in under two minutes: no API keys to rotate, no infrastructure to provision, no vendor lock-in. Your configuration, your data, your control.

How to Connect Runn to Vercel AI SDK via MCP

Follow these steps to integrate the Runn MCP Server with Vercel AI SDK.

Install dependencies

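Run npm install @ai-sdk/mcp ai @ai-sdk/openai, and set the OPENAI_API_KEY environment variable, which the @ai-sdk/openai provider reads by default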

Replace the token

Replace [YOUR_TOKEN_HERE] with your Vinkius token

Run the script

Save to agent.ts and run with npx tsx agent.ts

Explore tools

The SDK discovers 12 tools from Runn and passes them to the LLM
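Rather than pasting the token into agent.ts, you can read it from an environment variable and build the endpoint URL at startup. This sketch assumes only the URL pattern used in the example script (https://edge.vinkius.com/<token>/mcp); the variable name VINKIUS_TOKEN and both helper functions are hypothetical.

```typescript
// Hypothetical helpers: build the Vinkius MCP endpoint from a token kept outside the source.
const VINKIUS_BASE = "https://edge.vinkius.com";

// Read the token from an environment-like record (e.g. process.env) and fail fast if it is missing.
function readVinkiusToken(env: Record<string, string | undefined>, key = "VINKIUS_TOKEN"): string {
  const token = env[key]?.trim();
  if (!token || token === "[YOUR_TOKEN_HERE]") {
    throw new Error(`Set ${key} to your Vinkius token (see cloud.vinkius.com)`);
  }
  return token;
}

// Build the endpoint URL, rejecting characters that would change the URL structure.
function vinkiusMcpUrl(token: string): string {
  if (/[\s/?#]/.test(token)) {
    throw new Error("Token must not contain whitespace, '/', '?' or '#'");
  }
  return `${VINKIUS_BASE}/${token}/mcp`;
}
```

In the script, the transport would then use url: vinkiusMcpUrl(readVinkiusToken(process.env)), so the token never lands in version control.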

Why Use Vercel AI SDK with the Runn MCP Server

Vercel AI SDK provides unique advantages when paired with Runn through the Model Context Protocol.

TypeScript-first: every MCP tool gets full type inference, IDE autocomplete, and compile-time error checking out of the box

Framework-agnostic core: works with Next.js, Nuxt, SvelteKit, or any Node.js runtime, with the same Runn integration everywhere

Built-in streaming UI primitives let you display Runn tool results progressively in React, Svelte, or Vue components

Edge-compatible: the AI SDK runs on Vercel Edge Functions, Cloudflare Workers, and other edge runtimes for minimal latency
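Progressive display relies on streamText from the ai package, whose result exposes textStream, an async iterable of text chunks. The helper below is a hypothetical, dependency-free sketch of consuming any such iterable: it forwards each chunk to a callback (writing to stdout, pushing to a UI) and returns the full text when the stream ends.

```typescript
// Forward each chunk of an async text stream as soon as it arrives, then return the full text.
// Any AsyncIterable<string> works, e.g. the textStream of a streamText() result.
async function forwardStream(
  stream: AsyncIterable<string>,
  onChunk: (chunk: string) => void,
): Promise<string> {
  let full = "";
  for await (const chunk of stream) {
    onChunk(chunk); // render progressively instead of waiting for the whole answer
    full += chunk;
  }
  return full;
}
```

With the MCP client from the first example, usage could look like const result = streamText({ model: openai("gpt-4o"), tools, prompt }); await forwardStream(result.textStream, (c) => process.stdout.write(c));. Keep the MCP client open until the stream has finished, because tool calls happen while the stream is being consumed.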

Runn + Vercel AI SDK Use Cases

Practical scenarios where Vercel AI SDK combined with the Runn MCP Server delivers measurable value.

AI-powered web apps: build dashboards that query Runn in real-time and stream results to the UI with zero loading states

API backends: create serverless endpoints that orchestrate Runn tools and return structured JSON responses to any frontend

Chatbots with tool use: embed Runn capabilities into conversational interfaces with streaming responses and tool call visibility

Internal tools: build admin panels where team members interact with Runn through natural language queries
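For the API-backend scenario, one way to keep an endpoint testable is to separate request validation and the JSON response shape from the agent call itself. In this sketch, runAgent is a hypothetical stand-in for code that opens the MCP client and calls generateText as in the first example; the { prompt } request body and the response fields are assumptions, not a Runn or Vinkius contract.

```typescript
// Hypothetical shapes for a serverless JSON endpoint that fronts a Runn-enabled agent.
type AgentRunner = (prompt: string) => Promise<string>;

interface JsonResult {
  status: number;
  body: { answer?: string; error?: string };
}

// Validate the parsed JSON body, run the agent, and map the outcome to a status code plus payload.
async function handleAgentRequest(body: unknown, runAgent: AgentRunner): Promise<JsonResult> {
  const prompt =
    typeof body === "object" && body !== null ? (body as { prompt?: unknown }).prompt : undefined;
  if (typeof prompt !== "string" || prompt.trim() === "") {
    return { status: 400, body: { error: "Request body must be JSON with a non-empty 'prompt' string" } };
  }
  try {
    const answer = await runAgent(prompt.trim());
    return { status: 200, body: { answer } };
  } catch (err) {
    // Log server-side only; do not leak upstream details (tokens, stack traces) to the client.
    console.error(err);
    return { status: 502, body: { error: "Agent call failed" } };
  }
}
```

In a route handler, you would parse the request with await request.json(), call handleAgentRequest, and return new Response(JSON.stringify(result.body), { status: result.status, headers: { "content-type": "application/json" } }).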

Runn MCP Tools for Vercel AI SDK (12)

These 12 tools become available when you connect Runn to Vercel AI SDK via MCP:

get_person

Retrieves details for a specific person

get_project

Retrieves details for a specific project

list_actuals

Lists actual hours logged (timesheet data)

list_assignments

Lists all resource assignments across projects

list_clients

Lists all clients in the organization

list_holidays

Lists public holidays and non-working days

list_milestones

Lists milestones for a specific project

list_people

Lists all people and resources in Runn

list_phases

Lists project phases for a specific project

list_projects

Lists all projects managed in Runn

list_roles

Lists all defined roles/positions

list_teams

Lists all teams in the workspace
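Because the list above states exactly which tools should appear, a startup check can confirm that discovery returned all of them before the agent runs. The names below are copied from that list; the RUNN_TOOL_NAMES constant and the missingRunnTools helper are hypothetical, and the check only inspects the keys of the record returned by mcpClient.tools().

```typescript
// The 12 Runn tool names listed on this page.
const RUNN_TOOL_NAMES = [
  "get_person",
  "get_project",
  "list_actuals",
  "list_assignments",
  "list_clients",
  "list_holidays",
  "list_milestones",
  "list_people",
  "list_phases",
  "list_projects",
  "list_roles",
  "list_teams",
] as const;

// Return the expected tool names that are absent from a discovered tool record.
function missingRunnTools(discovered: Record<string, unknown>): string[] {
  return RUNN_TOOL_NAMES.filter(
    (name) => !Object.prototype.hasOwnProperty.call(discovered, name),
  );
}
```

After const tools = await mcpClient.tools(), a non-empty missingRunnTools(tools) result would point to a token or connection problem rather than a model problem.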

Example Prompts for Runn in Vercel AI SDK

Ready-to-use prompts you can give your Vercel AI SDK agent to start working with Runn immediately.

"List all active projects."

"Which team is assigned to the Alpha project next week?"

"What are the upcoming milestones for the Beta project?"
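Relative phrases such as "next week" depend on a current date the model does not reliably know. One option is to compute the range in code and put explicit dates into the prompt. This sketch assumes Monday-to-Sunday weeks and works in UTC; the nextWeekRange and nextWeekAssignmentsPrompt helpers and the prompt wording are illustrative only.

```typescript
// Return ISO dates (YYYY-MM-DD) for the Monday and Sunday of the week after `today`, in UTC.
function nextWeekRange(today: Date): { start: string; end: string } {
  const day = today.getUTCDay(); // 0 = Sunday ... 6 = Saturday
  const daysSinceMonday = (day + 6) % 7;
  // Date.UTC normalizes day overflow, so month and year boundaries are handled.
  const monday = new Date(
    Date.UTC(today.getUTCFullYear(), today.getUTCMonth(), today.getUTCDate() - daysSinceMonday + 7),
  );
  const sunday = new Date(monday.getTime() + 6 * 24 * 60 * 60 * 1000);
  const iso = (d: Date) => d.toISOString().slice(0, 10);
  return { start: iso(monday), end: iso(sunday) };
}

// Embed the explicit range so the model can reason about concrete dates.
function nextWeekAssignmentsPrompt(project: string, today: Date = new Date()): string {
  const { start, end } = nextWeekRange(today);
  return `Using Runn, which team is assigned to the ${project} project between ${start} and ${end}?`;
}
```

For a Wednesday such as 2024-05-15, the range is 2024-05-20 to 2024-05-26.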

Troubleshooting Runn MCP Server with Vercel AI SDK

Common issues when connecting Runn to Vercel AI SDK through Vinkius, and how to resolve them.

createMCPClient is not a function

Install the MCP package: npm install @ai-sdk/mcp

Runn + Vercel AI SDK FAQ

Common questions about integrating Runn MCP Server with Vercel AI SDK.

How does the Vercel AI SDK connect to MCP servers?

Import createMCPClient from @ai-sdk/mcp and pass the server URL. The SDK discovers all tools and provides typed TypeScript interfaces for each one.

Can I use MCP tools in Edge Functions?

Yes. The AI SDK runs on Vercel Edge Functions, Cloudflare Workers, and other edge runtimes.

Does it support streaming tool results?

Yes. The SDK provides useChat and streamText, which handle tool calls and display results progressively in the UI.

Connect Runn with your favorite client

Step-by-step setup guides for every MCP-compatible client and framework:

Anthropic's native desktop app for Claude with built-in MCP support.

AI-first code editor with integrated LLM-powered coding assistance.

GitHub Copilot in VS Code with Agent mode and MCP support.

Purpose-built IDE for agentic AI coding workflows.

Autonomous AI coding agent that runs inside VS Code.

Anthropic's agentic CLI for terminal-first development.

Python SDK for building production-grade OpenAI agent workflows.

Google's framework for building production AI agents.

Type-safe agent development for Python with first-class MCP support.

TypeScript toolkit for building AI-powered web applications.

TypeScript-native agent framework for modern web stacks.

Python framework for orchestrating collaborative AI agent crews.

Leading Python framework for composable LLM applications.

Data-aware AI agent framework for structured and unstructured sources.

Microsoft's framework for multi-agent collaborative conversations.

Connect Runn to Vercel AI SDK

Get your token, paste the configuration, and start using 12 tools in under 2 minutes. No API key management needed.