LangSmith MCP. Debug LLM agent performance with full trace visibility

Works with every AI agent you already use

…and any MCP-compatible client

Just plug in your AI agents and start using Vinkius.

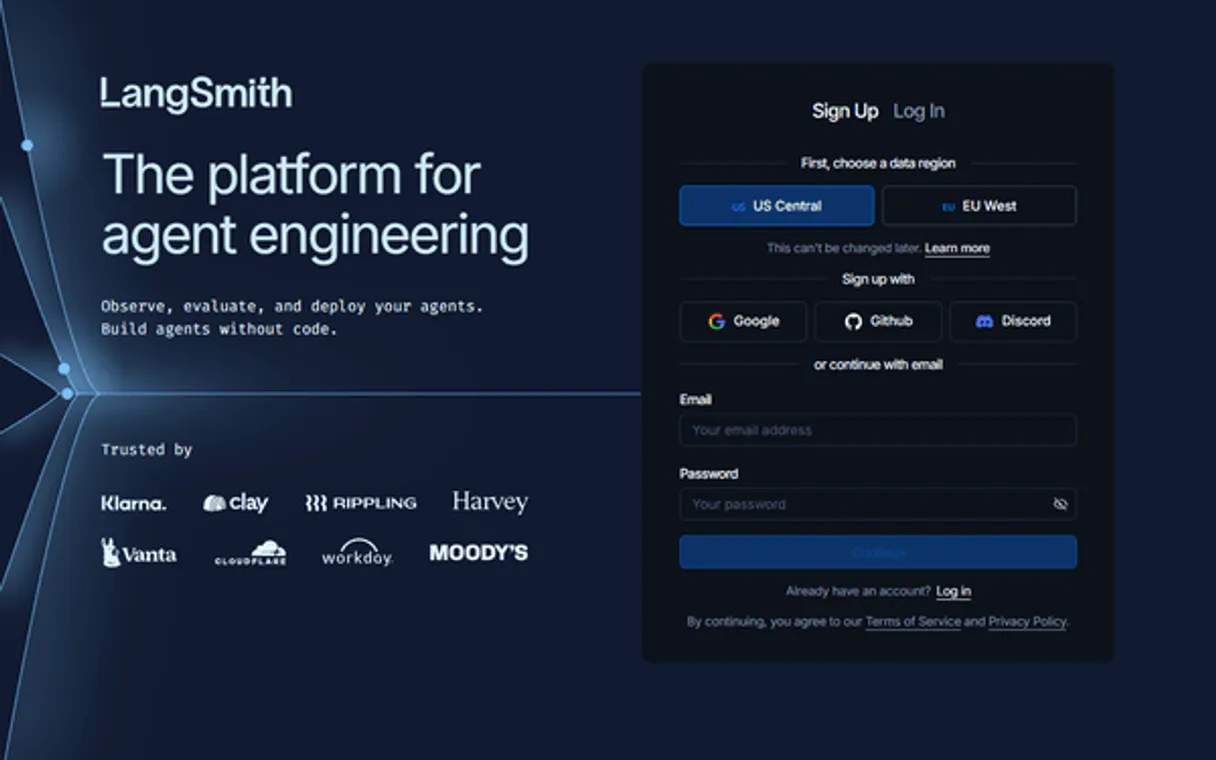

LangSmith is an observability platform for LLM applications. Use it to monitor traces, debug agent runs, and track performance metrics across your entire AI stack.

You get full visibility into model calls, chains, and agent actions, helping you spot regressions or latency spikes when you can't reproduce bugs manually.

What your AI agents can do

langsmith_get_run

Retrieves detailed information about a specific run or trace ID for debugging LLM calls or agent actions.

langsmith_list_projects

Lists all tracing projects in your account, showing aggregate metrics like total runs, median latency, and feedback counts.

langsmith_list_runs

Lists recent traces in a specific project, showing run names, types, status, token usage, and timing.

List all LangSmith projects and retrieve aggregate stats like median latency and total runs.

List recent runs within a project, showing run type, status, token usage, and timing.

Get a deep dive into a single run/trace, including its full execution path, inputs, outputs, and error messages.


LangSmith MCP Server: 3 Tools for LLM Debugging

These tools let your agent list projects, view recent runs, and deep-dive into specific traces to debug complex LLM and agent workflows.

langsmith_get_run

Retrieves detailed information about a specific run or trace ID for debugging LLM calls or agent actions.

langsmith_list_projects

Lists all tracing projects in your account, showing aggregate metrics like total runs, median latency, and feedback counts.

langsmith_list_runs

Lists recent traces in a specific project, showing run names, types, status, token usage, and timing.

Choose How to Get Started

Build a custom MCP for your own tools, or connect a ready-made integration from our catalog.

Build Your Own

Turn any API into an MCP. Import a spec, define Agent Skills, or deploy with MCPFusion.

- Import from OpenAPI, Swagger, or YAML specs

- Create Agent Skills with progressive disclosure

- Deploy to edge with MCPFusion framework

- Built in DLP, auth, and compliance on every call

- Real time usage dashboard and cost metering

- Publish to catalog or keep private

Make Your AI Do More

Start with LangSmith, then connect any of our 4,700+ other servers whenever your AI needs more. One click, no limits.

- Use this MCP plus 4,700+ others, all in one place

- Add new capabilities to your AI anytime you want

- Every connection is secured and compliant automatically

- Track usage and costs across all your servers

- Works with Claude, ChatGPT, Cursor, and more

- New servers added to the catalog every week

What you can do with this MCP connector

This LangSmith server lets you debug LLM and agent traces right where you are. It connects your AI client to LangSmith, giving you full visibility into your model calls, chains, and agent actions. You'll spot regressions or latency spikes even when you can't reproduce bugs manually.

langsmith_list_projects lists every tracing project in your account, showing aggregate stats like total runs, median latency, and feedback counts. langsmith_list_runs lets you browse recent traces in a specific project, showing the run name, type, status, token usage, and timing. langsmith_get_run gives you a deep dive into a single run or trace ID, including its full execution path, inputs, outputs, and any error messages.

How LangSmith MCP Works

1. Subscribe to the LangSmith server and provide your API key.

2. Instruct your AI client to call langsmith_list_projects to scope your investigation.

3. Use the project ID found to call langsmith_list_runs, then use a specific run ID to call langsmith_get_run for debugging.

The bottom line is that you use the tools in sequence—projects, then runs, then specific traces—to build a full debugging picture.
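To make the sequence concrete, here is a minimal sketch of the three requests an MCP client might send. MCP tool invocations are JSON-RPC 2.0 messages with the method `tools/call`; the tool names come from this page, but the argument names (`project_id`, `run_id`) and the placeholder values are assumptions, since the server's input schema is not shown here. In practice your AI client builds these messages for you.

```python
import json
from itertools import count

_ids = count(1)  # simple generator for JSON-RPC request ids


def tool_call(name: str, arguments: dict) -> dict:
    """Build an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


# Step 2: list projects to scope the investigation (no arguments assumed).
list_projects = tool_call("langsmith_list_projects", {})

# Step 3a: list runs in one project.
# "project_id" is an assumed argument name; the value is a placeholder.
list_runs = tool_call("langsmith_list_runs", {"project_id": "my-project-id"})

# Step 3b: fetch a single run.
# "run_id" is an assumed argument name; the value is a placeholder.
get_run = tool_call("langsmith_get_run", {"run_id": "my-run-id"})

for request in (list_projects, list_runs, get_run):
    print(json.dumps(request))
```

Check the tool schemas your client reports for the real argument names before relying on these.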

Who Is LangSmith MCP For?

This server is for AI engineers who need to debug production LLM agents, ML teams tracking experiment performance, and DevOps staff setting up alerts for AI workload costs and failures. If you build with LLMs and can't reproduce an error manually, this is for you.

AI engineers monitor LLM calls, chains, and agent actions in production. They use the tools to track latency and spot performance degradation after a model update.

ML teams track experiment performance across multiple model versions, comparing outputs and identifying data or model regressions.

DevOps staff set up error rate alerts or monitor cost anomalies related to AI workloads, using the project metrics to justify resource changes.

What Changes When You Connect

- See performance metrics for every project. langsmith_list_projects gives you total runs, median latency, and feedback counts across your whole AI stack, so you don't have to guess where the bottleneck is.

- Trace every action. langsmith_list_runs shows run names, types (LLM, chain, tool), and status for recent traces. You see exactly where a failure happened: was it the model call or the tool execution?

- Deep-dive into failure states. langsmith_get_run lets you pull up a specific run ID. You see the full execution trace, the inputs, the outputs, and the exact error message, even if the agent retried three times.

- Compare model versions instantly. By listing runs, you can compare the token usage and latency of different model calls side-by-side, helping you pick the most cost-effective model.

- Track agent logic failures. If an agent fails, you don't just get an error code. You use langsmith_get_run to see the entire chain's input and output, pinpointing whether the failure was due to bad prompt logic or an external API timeout.
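As an illustration of finding the bottleneck from project-level metrics, the sketch below ranks projects by median latency. The project records and their field names (`median_latency_ms`, `total_runs`, `feedback_count`) are hypothetical stand-ins for whatever langsmith_list_projects returns; the actual response shape is not documented on this page.

```python
# Hypothetical project summaries; field names and values are assumptions.
projects = [
    {"name": "checkout-agent", "total_runs": 1200, "median_latency_ms": 850, "feedback_count": 40},
    {"name": "support-bot", "total_runs": 5400, "median_latency_ms": 2300, "feedback_count": 310},
    {"name": "search-rerank", "total_runs": 300, "median_latency_ms": 410, "feedback_count": 5},
]


def slowest_projects(projects, top_n=2):
    """Return the top_n projects with the highest median latency, slowest first."""
    return sorted(projects, key=lambda p: p["median_latency_ms"], reverse=True)[:top_n]


for p in slowest_projects(projects):
    print(f'{p["name"]}: {p["median_latency_ms"]} ms median over {p["total_runs"]} runs')
```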

Real-World Use Cases

The Agent Keeps Failing Intermittently

The agent starts failing, but the error only happens in production. Instead of guessing, you ask your agent to use langsmith_list_projects to confirm the correct project scope. Then, you use langsmith_list_runs to pull the last 10 runs. Finally, you grab the failing run ID and use langsmith_get_run to see the stack trace and the exact error message—solving the 'works on my machine' problem.
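The "pull the last 10 runs, then grab the failing run ID" step could look roughly like this once the run list is in hand. The run records, their field names (`id`, `status`, `start_time`), and the `"error"` status value are assumptions for illustration, not the documented output of langsmith_list_runs.

```python
from datetime import datetime

# Hypothetical run summaries; field names and values are assumptions.
runs = [
    {"id": "run-a", "status": "success", "start_time": "2024-05-01T10:00:00"},
    {"id": "run-b", "status": "error", "start_time": "2024-05-01T10:05:00"},
    {"id": "run-c", "status": "success", "start_time": "2024-05-01T10:10:00"},
    {"id": "run-d", "status": "error", "start_time": "2024-05-01T10:15:00"},
]


def latest_failures(runs, limit=10):
    """Return IDs of failed runs among the `limit` most recent runs, newest first."""
    recent = sorted(
        runs,
        key=lambda r: datetime.fromisoformat(r["start_time"]),
        reverse=True,
    )[:limit]
    return [r["id"] for r in recent if r["status"] == "error"]


# Each returned ID is a candidate to pass to langsmith_get_run.
print(latest_failures(runs))
```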

Need to Compare Model Performance

You're deciding between GPT-4 and Claude for a specific task. You ask your agent to run the same prompt through both models, logging the runs. Then, you use langsmith_list_runs to compare the latency (ms) and token usage between the two types of runs, making a data-backed decision on cost and speed.
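A comparison like this comes down to grouping runs by model and summarizing latency and token usage. The sketch below assumes each run summary carries `model`, `latency_ms`, and `total_tokens` fields; those names and the sample numbers are invented for illustration and may not match what langsmith_list_runs returns.

```python
from collections import defaultdict
from statistics import median

# Hypothetical run summaries; field names and numbers are assumptions.
runs = [
    {"model": "model-a", "latency_ms": 1200, "total_tokens": 900},
    {"model": "model-a", "latency_ms": 1000, "total_tokens": 850},
    {"model": "model-a", "latency_ms": 1400, "total_tokens": 950},
    {"model": "model-b", "latency_ms": 800, "total_tokens": 1100},
    {"model": "model-b", "latency_ms": 900, "total_tokens": 1300},
]


def summarize_by_model(runs):
    """Group runs by model and compute median latency and mean token usage."""
    grouped = defaultdict(list)
    for run in runs:
        grouped[run["model"]].append(run)
    return {
        model: {
            "runs": len(items),
            "median_latency_ms": median(r["latency_ms"] for r in items),
            "mean_tokens": sum(r["total_tokens"] for r in items) / len(items),
        }
        for model, items in grouped.items()
    }


summary = summarize_by_model(runs)
for model, stats in summary.items():
    print(model, stats)
```

With only a handful of runs per model the medians are noisy, so treat a comparison like this as a first signal, not a benchmark.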

Audit an Old, Buggy Feature

The team needs to check the performance of an agent feature from last month. You use langsmith_list_projects to find the historical project ID. You then use langsmith_list_runs to filter for successful runs from that time period, allowing you to audit performance metrics without manual database queries.
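If the tool returns more runs than the period you care about, the time-window filter can also be applied to the results afterwards. This sketch assumes ISO 8601 `start_time` strings and a `"success"` status value, neither of which is confirmed by this page.

```python
from datetime import datetime

# Hypothetical run summaries; field names and values are assumptions.
runs = [
    {"id": "run-1", "status": "success", "start_time": "2024-04-03T09:00:00", "latency_ms": 700},
    {"id": "run-2", "status": "error", "start_time": "2024-04-15T12:30:00", "latency_ms": 4100},
    {"id": "run-3", "status": "success", "start_time": "2024-04-28T18:45:00", "latency_ms": 900},
    {"id": "run-4", "status": "success", "start_time": "2024-05-02T08:00:00", "latency_ms": 650},
]


def successful_runs_between(runs, start, end):
    """Keep successful runs whose start time falls in the half-open window [start, end)."""
    return [
        r
        for r in runs
        if r["status"] == "success"
        and start <= datetime.fromisoformat(r["start_time"]) < end
    ]


april = successful_runs_between(runs, datetime(2024, 4, 1), datetime(2024, 5, 1))
print([r["id"] for r in april])
```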

Debugging a Complex Tool Failure

A tool call keeps timing out. You use langsmith_list_runs to find the run that failed due to timeout. You feed that run ID into langsmith_get_run to see the full context: how many times the agent retried, what the inputs were, and the specific timeout error from the tool itself.
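Once a trace is retrieved, counting retries and pulling out the timeout message is a small post-processing step. The trace below is a hypothetical, flattened structure with `steps`, `run_type`, and `error` fields; the real shape returned by langsmith_get_run may differ.

```python
# Hypothetical trace; structure, field names, and error text are assumptions.
trace = {
    "id": "run-123",
    "steps": [
        {"name": "plan", "run_type": "llm", "error": None},
        {"name": "search_tool", "run_type": "tool", "error": "TimeoutError: no response after 30s"},
        {"name": "search_tool", "run_type": "tool", "error": "TimeoutError: no response after 30s"},
        {"name": "search_tool", "run_type": "tool", "error": "TimeoutError: no response after 30s"},
    ],
}


def tool_failure_summary(trace, tool_name):
    """Count attempts of one tool in a trace and report its most recent error."""
    attempts = [
        s for s in trace["steps"]
        if s["run_type"] == "tool" and s["name"] == tool_name
    ]
    errors = [s["error"] for s in attempts if s["error"]]
    return {
        "attempts": len(attempts),
        "retries": max(len(attempts) - 1, 0),  # every attempt after the first is a retry
        "last_error": errors[-1] if errors else None,
    }


print(tool_failure_summary(trace, "search_tool"))
```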

The Tradeoffs

Assuming the agent knows the ID

Just telling the agent, 'Check the run ID for the last failure.' The agent has no context and won't know which run ID you mean, leading to a generic or failed query.

→

First, ask the agent to use langsmith_list_projects to confirm the project ID. Next, use langsmith_list_runs to narrow down the results by date or type. Finally, pass the specific run ID to langsmith_get_run.

Ignoring the run context

Calling langsmith_get_run with a run ID without first checking the project scope. This might return stale or unrelated data if the ID space isn't isolated.

→

Always scope your work. Start with langsmith_list_projects to identify the correct project ID, and then use that ID when calling langsmith_list_runs.

Over-relying on high-level summaries

Just looking at a dashboard summary and not knowing why the latency spiked. You only see 'Median Latency up 20%,' but don't know which tool or model caused it.

→

Drill down immediately. Use langsmith_list_runs to see the run types and timings, and then use langsmith_get_run to find the exact step—e.g., a slow tool call or a complex chain execution—that caused the spike.
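Finding "the exact step" in a nested trace amounts to walking the tree and picking the slowest leaf. The sketch below assumes a trace with `children` and `latency_ms` on each node; these names are illustrative and not taken from the server's documented output.

```python
# Hypothetical nested trace; structure and field names are assumptions.
trace = {
    "name": "agent",
    "run_type": "chain",
    "latency_ms": 5200,
    "children": [
        {"name": "plan", "run_type": "llm", "latency_ms": 900, "children": []},
        {"name": "lookup_order", "run_type": "tool", "latency_ms": 3800, "children": []},
        {"name": "answer", "run_type": "llm", "latency_ms": 450, "children": []},
    ],
}


def leaf_steps(node):
    """Yield every step in the trace that has no child steps."""
    children = node.get("children", [])
    if not children:
        yield node
    for child in children:
        yield from leaf_steps(child)


def slowest_step(trace):
    """Return the leaf step with the highest latency."""
    return max(leaf_steps(trace), key=lambda s: s["latency_ms"])


step = slowest_step(trace)
print(f'{step["name"]} ({step["run_type"]}): {step["latency_ms"]} ms')
```

Only leaf steps are compared because a parent chain's latency includes the time spent in its children.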

When It Fits, When It Doesn't

Use this if your core problem is understanding why an LLM agent behaved a certain way, or if you need to compare performance metrics (latency, tokens) across multiple runs or model versions. You need visibility into the internal steps of the agent, not just the final output. Don't use this if you just need to store prompt templates or manage API keys; that's handled by other services. If you only need to know the final success/fail status, simply running the agent is enough. But if the failure is intermittent, you need the deep tracing provided by langsmith_get_run to debug the underlying mechanism.

Independent Platform Disclaimer: Vinkius is an independent platform and is not affiliated with, endorsed by, sponsored by, verified by, or otherwise authorized by LangSmith. All third-party trademarks, logos, and brand names are the property of their respective owners. Their use on this website is strictly for informational purposes to identify service compatibility and interoperability.

VINKIUS INFRASTRUCTURE

- Cloud Hosted: Managed infra

- V8 Isolated: Sandboxed per request

- Zero-Trust Proxy: No stored credentials

- DLP Enforced: Policy on every call

- GDPR Compliant: EU data residency

- Token Compression: ~60% cost reduction

Works with Claude, ChatGPT, Cursor, and more

The Model Context Protocol standardizes how applications expose capabilities to LLMs. Instead of operating in isolation, your AI gains direct access to external platforms, live data, and real-world actions through secure, standardized connections.

This server provides 3 capabilities that interface natively with Claude, ChatGPT, Cursor, and any MCP client. No middleware. No custom integration required.


Debugging LLM Agents Shouldn't Require a Ph.D. in Debugging

Today, when an agent fails, you're stuck. You get a vague error message in the terminal—'Agent failed.'—and then you start the painful process: copying the error, opening the dashboard, trying to find the right project, filtering by time, and manually piecing together what step failed. It's a mess of tabs and copy-pasting just to understand one bad run.

With this MCP server, you ask your agent to debug the failure. It uses `langsmith_list_runs` to find recent failures, then passes the ID to `langsmith_get_run`. You get the full execution trace, inputs, outputs, and the exact error right back—no manual dashboard hopping required. It's instant visibility.

LangSmith MCP Server: See the Full Agent Execution Trace

Manual debugging requires you to separately check the model call logs, the tool execution logs, and the chain orchestration status. You have to piece together the sequence: Did the prompt fail? Did the tool time out? Was the input data wrong?

Now, the agent handles it. It uses the tools to retrieve the full, correlated trace. You see the whole sequence—the initial prompt, the tool call, the tool output, and the final failure—all in one stream. It's a massive step up from fragmented logs.

Common Questions About LangSmith MCP

How do I find the total number of runs in my LangSmith projects using langsmith_list_projects?

The langsmith_list_projects tool provides this information by displaying the 'Runs' count for each project ID. This gives you a quick, aggregated metric across all your tracing work.

What is the difference between langsmith_list_runs and langsmith_get_run?

langsmith_list_runs shows you a list of recent runs, giving you a summary (status, type, tokens). langsmith_get_run takes a specific run ID and gives you the complete, detailed execution trace, including all inputs and outputs.

Can I use langsmith_get_run to debug a tool timeout?

Yes. langsmith_get_run retrieves the full trace, which includes detailed error messages. If a tool timed out, the full trace will show the timeout error, how many times the agent retried, and the exact step where it failed.

Do I need a project ID to use langsmith_list_runs?

Yes. langsmith_list_runs requires you to specify the target project ID to narrow the search. This prevents querying unrelated traces and keeps the results focused.

How do I set up the API key for langsmith_list_projects?

You must provide your LangSmith API key during setup. This key grants your AI client access to your tracing data. The initial subscription includes 5,000 free traces per month.

Can langsmith_list_runs show me both success and error runs?

Yes, langsmith_list_runs shows the status for every run. You'll see if a run was successful, or if it encountered an error. It also reports token usage and timing.

What information does langsmith_get_run provide about an agent's inputs and outputs?

langsmith_get_run gives you a deep look at the specific run. You get the full execution trace, including both the inputs and the outputs for every step.

Does langsmith_list_projects count all types of runs?

Yes, langsmith_list_projects aggregates all run types. It provides total runs, median latency, and feedback scores across all projects linked to your account.

What is LangSmith and why do I need it?

LangSmith is the 'Datadog for LLM applications'. Without observability, AI agents in production are black boxes — you can't see what they're doing, why they fail, or how much they cost. LangSmith traces every LLM call, chain execution, and tool use, giving you complete visibility into inputs, outputs, latency, token usage, and error rates.

Does LangSmith work only with LangChain?

No! While LangSmith is built by the LangChain team and has native LangChain/LangGraph integration, it works with any LLM application. You can trace OpenAI, Anthropic, or any LLM provider directly using the REST API. It also integrates with CrewAI, AutoGen, and other frameworks.

How much does LangSmith cost?

LangSmith offers a generous free tier with 5,000 traces per month — no credit card required. The Developer plan is $39/month with 50,000 traces. Enterprise plans include SSO, RBAC, dedicated support, and unlimited traces with volume discounts.

Use it with your favorite AI tools

Connect this server to Cursor, Claude, VS Code, and more.
